Most teams overestimate their audit readiness because they confuse document existence with control effectiveness. A policy is not evidence that the process runs. A risk register is not evidence that risks are reassessed after change. An inventory is not evidence that shadow AI or third-party tools are actually being captured.

That distinction matters because ISO 42001 sits on management-system logic. Internal audit is supposed to determine whether the management system conforms to requirements, is effectively implemented, and is maintained. In plain language: are the controls designed properly, are they running, and can the organization prove it?

What internal auditors are actually testing

Internal auditors are not there to admire your framework deck. They are testing six things:

- Scope and boundary: which AI systems, business units, and suppliers are inside the AIMS, and whether that boundary makes sense.

- Risk methodology: whether AI risk criteria are defined, documented, and applied consistently across assessments.

- Operational control execution: whether testing, human oversight, monitoring, incident handling, and change triggers actually happen.

- Evidence quality: whether records are complete, dated, attributable, and retained in a way an auditor can trace.

- Governance cadence: whether management review, corrective action, and audit follow-up are real rather than ceremonial.

- Proportionality: whether the depth of governance matches the risk level of the AI use case.

If one of those six fails, the rest usually start collapsing behind it.

Operational reality: internal audit should not be a copy of the certification audit. It should be harsher. Certification auditors sample. Internal audit can go directly after the weak areas the organization already suspects are fragile.

The core audit questions AI governance teams should expect

These are the questions that cut through theatre fast. Not every audit needs all of them, but a mature internal audit programme should use most of this list.

| Audit area | Question | What good evidence looks like |
|---|---|---|
| Scope | How was the AIMS scope defined, and what AI systems were excluded? | Approved scope statement, rationale, inventory cross-check, exclusion log. |
| Inventory | How do you know the AI inventory is complete and updated after change? | Inventory owner, review cadence, onboarding trigger, procurement/API checks, update records. |
| Risk assessment | What criteria determine risk level, and where is residual risk accepted? | Methodology, scored assessments, approver names, residual-risk decisions. |
| Human oversight | Which use cases require intervention, approval, or escalation? | Threshold matrix, workflow rules, exception log, shutdown authority. |
| Validation | How is the AI system verified before production and after significant change? | Test plans, acceptance criteria, validation reports, sign-off records. |
| Monitoring | What indicators are monitored in production, by whom, and how often? | Dashboards, threshold alerts, review tickets, trend reports. |
| Incidents | What qualifies as an AI incident and what evidence is retained? | IR playbook, incident log, root-cause analysis, CAPA records. |
| Suppliers | How are external AI tools and providers evaluated and re-reviewed? | Due-diligence records, contractual clauses, review schedule, supplier risk register. |
| Competence | How do you know the responsible roles are competent for AI governance tasks? | Role matrix, training completion, assigned owners, competency criteria. |
| Management review | What did management actually review in the last cycle and what changed? | Agenda, metrics, decisions, actions, deadlines, accountability. |

Notice the pattern. Every useful question has a design side and an evidence side. Auditors should ask both. Otherwise they get trapped in policy language that sounds mature but proves nothing.
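
The design-side and evidence-side split can be made mechanical in an audit workpaper. The following is a minimal Python sketch, not a prescribed method: the `AuditQuestion` structure, the `classify` helper, and the sample artefact names are all hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass, field


@dataclass
class AuditQuestion:
    """One audit question with both sides of its support (hypothetical structure)."""
    area: str
    question: str
    # Design side: artefacts that say the control exists.
    design_artefacts: list[str] = field(default_factory=list)
    # Evidence side: records that show the control actually ran.
    evidence_artefacts: list[str] = field(default_factory=list)


def classify(q: AuditQuestion) -> str:
    """Label a question by which sides the auditee could actually support."""
    if q.design_artefacts and q.evidence_artefacts:
        return "design and evidence"
    if q.design_artefacts:
        return "design only"
    if q.evidence_artefacts:
        return "evidence only"
    return "nothing provided"


# Illustrative sample data, not taken from any real audit.
questions = [
    AuditQuestion(
        "Monitoring",
        "What indicators are monitored in production, by whom, and how often?",
        design_artefacts=["monitoring procedure"],
        evidence_artefacts=["threshold alerts", "review tickets"],
    ),
    AuditQuestion(
        "Human oversight",
        "Which use cases require intervention, approval, or escalation?",
        design_artefacts=["threshold matrix"],
    ),
]

for q in questions:
    print(f"{q.area}: {classify(q)}")
```

A "design only" result is the policy-language trap described above: the control is written down, but nothing shows it ran.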

Question the operating model, not just the documents

Weak audits ask, "Do you have an AI policy?" Better audits ask, "Show me the last decision where the policy changed an operational outcome." That is the right standard.

- For inventory: sample a new tool that was introduced recently and verify it appeared in the inventory on time.

- For risk assessment: take one medium-risk and one high-risk system and compare whether the control depth changed materially.

- For change management: pick a model update, prompt change, tool integration, or memory-function change and test whether reassessment happened.

- For human oversight: inspect a case where the system paused, escalated, or was overridden. If there are no examples, the control may only exist on paper.

- For suppliers: test whether one critical AI vendor was re-reviewed when scope or functionality changed.

This is where AI governance audits should borrow from process auditing, not just control checklists. You are following the work through the system, from trigger to decision to evidence.
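
Following the work from trigger to evidence can be sketched as a simple trace over sampled changes. This is an illustrative Python example under stated assumptions: the `ChangeEvent` fields are hypothetical, and the 30-day default for `max_delay` is a placeholder that would need to come from the organization's own reassessment rules.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ChangeEvent:
    """A sampled change to an AI system (hypothetical record layout)."""
    system: str
    kind: str                      # e.g. "model update", "prompt change"
    changed_on: date
    reassessed_on: Optional[date]  # None means no reassessment record was found


def trace_change(event: ChangeEvent,
                 max_delay: timedelta = timedelta(days=30)) -> str:
    """Return an audit finding for one change. The 30-day default is an assumption."""
    if event.reassessed_on is None:
        return "no reassessment record"
    if event.reassessed_on < event.changed_on:
        # An assessment dated before the change cannot have covered it.
        return "reassessment predates change"
    if event.reassessed_on - event.changed_on > max_delay:
        return "reassessed late"
    return "ok"


# Illustrative sample, not real systems.
sample = [
    ChangeEvent("support-agent", "tool integration", date(2024, 3, 1), date(2024, 3, 12)),
    ChangeEvent("cv-screening", "model update", date(2024, 4, 2), None),
    ChangeEvent("pricing-model", "prompt change", date(2024, 5, 20), date(2024, 2, 1)),
]

for event in sample:
    print(f"{event.system} ({event.kind}): {trace_change(event)}")
```

The "reassessment predates change" case matters in practice: an older risk assessment is often presented as if it covered the latest change.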

Sampling and evidence techniques that expose weak controls

Internal audit goes soft when it samples only the cleanest material. Do the opposite.

- Sample recent changes. Old artefacts are usually curated. Recent changes show whether the process really runs under time pressure.

- Sample edge cases. Agents with tool access, customer-facing models, HR use cases, and third-party GenAI services reveal governance weakness quickly.

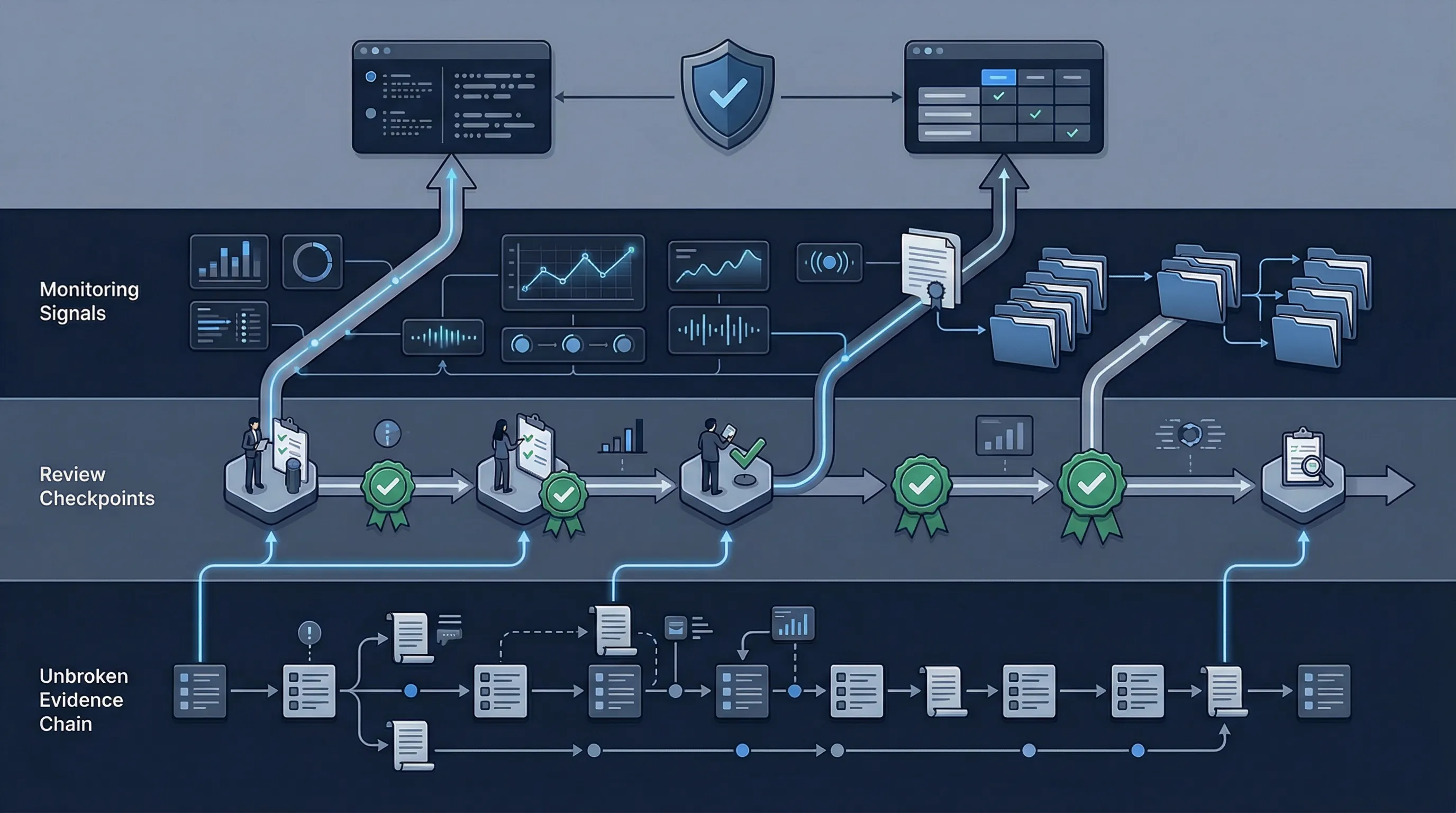

- Trace evidence end to end. Start with an inventory item, then pull the risk assessment, approval, testing, monitoring record, and any incident or exception history.

- Challenge timestamps and ownership. Missing dates, unsigned records, and stale review fields are common failure points.

- Look for contradiction. If the policy says quarterly review but dashboards have not been checked in six months, the record set is lying to you.

One useful rule: if a control produces no natural record, it is usually not a real control yet. Mature governance leaves operational residue: tickets, approvals, logs, meeting actions, exceptions, and follow-up decisions.
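
Two of the techniques above, challenging timestamps and ownership and looking for contradiction, lend themselves to simple checks. The sketch below is in Python and rests on assumptions: the required field names in `REQUIRED_FIELDS` and the 92-day reading of "quarterly" are hypothetical choices, not requirements taken from ISO 42001.

```python
from datetime import date, timedelta

# Assumed minimum fields for a traceable record; adjust to your own record schema.
REQUIRED_FIELDS = ("owner", "dated_on", "approved_by")


def record_defects(record: dict) -> list[str]:
    """List the required fields that are absent or empty in a record."""
    return [f"missing {name}" for name in REQUIRED_FIELDS if not record.get(name)]


def cadence_breached(last_review: date, stated_interval: timedelta, today: date) -> bool:
    """True when the gap since the last review exceeds what the policy states."""
    return today - last_review > stated_interval


# Illustrative record with no approver and an empty owner field.
record = {"owner": "", "dated_on": date(2024, 1, 10)}
print(record_defects(record))

# Policy says quarterly (taken here as 92 days); last dashboard review was in January.
quarterly = timedelta(days=92)
print(cadence_breached(date(2024, 1, 10), quarterly, today=date(2024, 7, 15)))
```

Checks like these do not replace auditor judgement. They only surface the records that deserve a closer look.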

The failures that show up first

- Inventory incompleteness. The organization governs the systems it remembers, not the systems it actually runs.

- Undefined reassessment triggers. Nobody can explain when a model, agent, or supplier must be reviewed again after change.

- Human oversight with no thresholds. The policy says there is oversight; the workflow cannot show where it activates.

- Incident handling borrowed from cyber only. The team can respond to outages but not to harmful model behaviour, unsafe agent actions, or corrupted outputs.

- Management review with no teeth. Slides get presented, but no decisions, no deadlines, and no corrective actions follow.

Those are not minor defects. They indicate the AIMS is under-governed at the system level.
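
Inventory incompleteness, the first failure in that list, is one of the easier ones to test mechanically: compare the tools seen in an independent source, such as procurement records or API usage, against the AI inventory. A minimal Python sketch, assuming both sources can be exported as lists of tool names; the function names and sample entries are hypothetical.

```python
def normalise(name: str) -> str:
    """Lower-case and collapse whitespace so trivial spelling differences do not hide a match."""
    return " ".join(name.lower().split())


def unregistered_tools(observed: list[str], inventory: list[str]) -> list[str]:
    """Return tools seen in an independent source but absent from the AI inventory."""
    known = {normalise(n) for n in inventory}
    return sorted({normalise(n) for n in observed} - known)


# Illustrative data: names seen in procurement versus names in the inventory.
procurement = ["Vendor Chatbot", "resume  screener ", "Translation API"]
inventory = ["vendor chatbot", "Translation API"]

print(unregistered_tools(procurement, inventory))
```

Exact name matching is a deliberate simplification. Real exports usually need vendor IDs or a manual mapping step, so treat every hit as a lead to investigate rather than a confirmed gap.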

The practical goal is not to pass a meeting. It is to build an internal audit routine that finds weak governance before a customer, regulator, certification body, or executive committee finds it for you.