Inventory baseline

AI System Inventory Template

11-column Excel schema for cataloging AI systems, owners, use cases, vendors, data categories, and evidence gaps.

Choose the free artifact that matches your current governance problem: AI inventory, policy, vendor risk, regulatory evidence, agentic AI controls, OpenClaw security, or board reporting. No login or email gate.

The downloads are not a random file shelf. Each one creates a specific evidence record that can later be expanded inside ACT-1 Starter, ACT-2 Professional, or an implementation sprint.

Start with inventory and shadow AI discovery before writing policies or risk reports.

Go to discovery downloads →

Use policy and approval records to define permitted AI use, prohibited use, and escalation points.

Go to policy downloads →

Screen copilots, agents, embedded AI, MCP servers, and AI suppliers before approval.

Go to vendor downloads →

Prepare impact, consumer-rights, annual review, and deployer obligation records.

Go to evidence downloads →

Track autonomy failures, shutdown decisions, skill approvals, incidents, and runtime containment.

Go to agentic downloads →

Convert inventory, risk posture, vendor exposure, and agentic risk into management-facing decisions.

Go to reporting downloads →

Use the free downloads in this order if your team is starting from a weak AI governance baseline. The sequence prevents a common failure: writing policies before you know which AI systems, vendors, agents, and business owners exist.

Build the AI system inventory and shadow AI register first.

Use discovery artifacts →

Document acceptable use, vendor intake, and skill approval decisions.

Use policy artifacts →

Prepare FRIA-lite, Colorado deployer, and review-readiness records.

Use evidence artifacts →

Track incidents, shutdown decisions, rollback evidence, and board reporting.

Use reporting artifacts →

Pick the artifact closest to the decision you need to document this week. Each card shows the operational use case, format, and natural paid-product route.

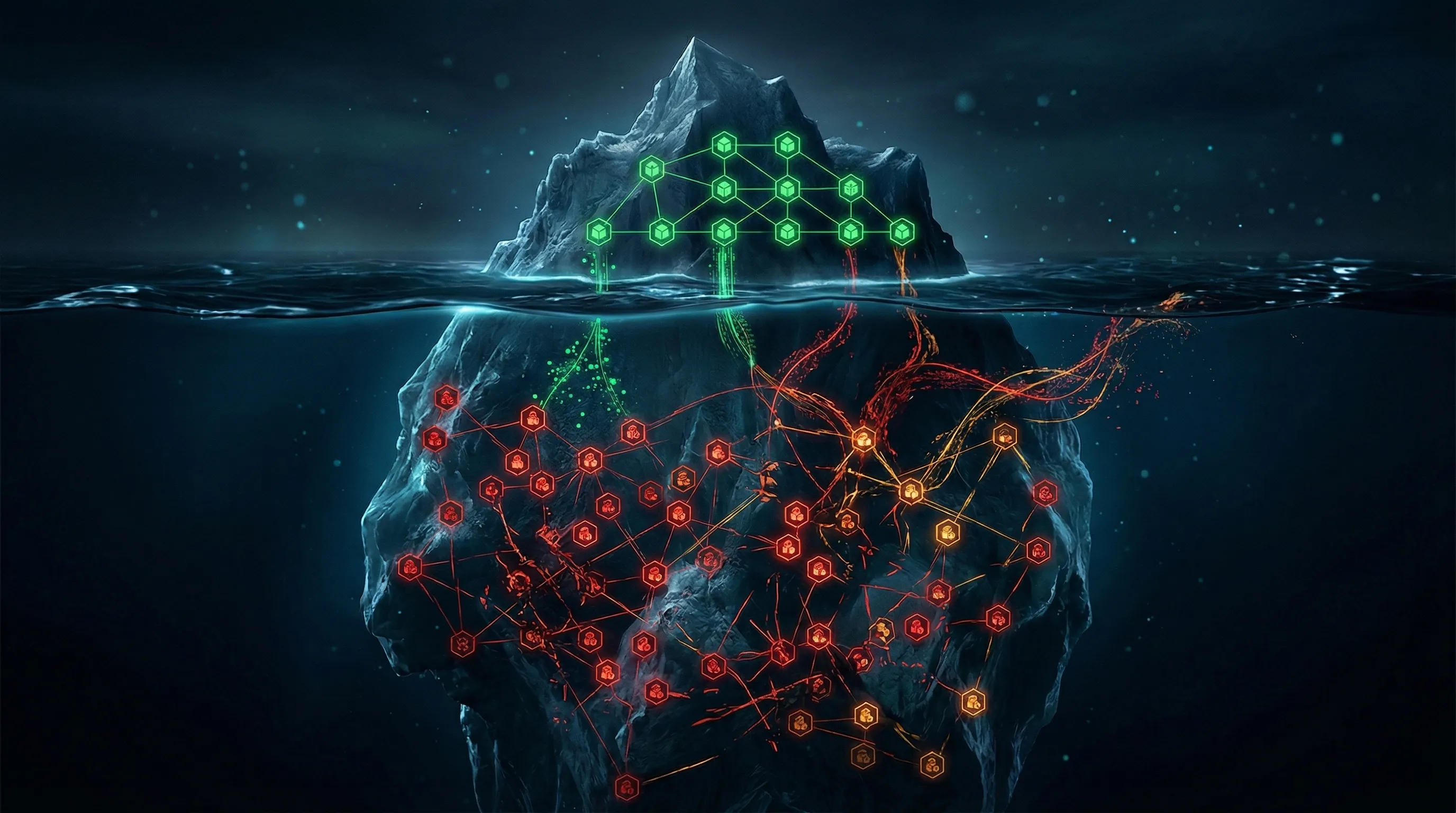

Use these when no reliable AI inventory exists or when unmanaged browser tools, plugins, agents, or vendor AI features may already be in use.

Inventory baseline

11-column Excel schema for cataloging AI systems, owners, use cases, vendors, data categories, and evidence gaps.

Shadow AI

Find unmanaged AI use across teams, browser tools, vendors, plugins, agents, and informal workflows.

OpenClaw inventory

Register known and suspected OpenClaw deployments with environment classification, data sensitivity, ownership, and status.
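As a rough illustration of the inventory baseline described above, the sketch below models one inventory row in Python using only the six categories the description names: system, owner, use case, vendor, data categories, and evidence gaps. The actual template has 11 columns; the field names, the follow-up rule, and the CSV export here are assumptions for illustration, not the template's real headers or logic.

```python
import csv
import io
from dataclasses import dataclass, field, fields


@dataclass
class InventoryRow:
    """One AI system entry. Field names are hypothetical, not the template's headers."""
    system: str
    owner: str
    use_case: str
    vendor: str
    data_categories: list[str] = field(default_factory=list)
    evidence_gaps: list[str] = field(default_factory=list)


def rows_needing_follow_up(rows):
    """Return rows with no named owner or with at least one open evidence gap.

    This rule is an assumption made for the example, not part of the template.
    """
    return [r for r in rows if not r.owner.strip() or r.evidence_gaps]


def to_csv(rows):
    """Serialize rows to CSV text, joining list-valued fields with '; '."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow([f.name for f in fields(InventoryRow)])
    for r in rows:
        writer.writerow([
            r.system,
            r.owner,
            r.use_case,
            r.vendor,
            "; ".join(r.data_categories),
            "; ".join(r.evidence_gaps),
        ])
    return buf.getvalue()
```

A team could load rows like these from a spreadsheet export and use `rows_needing_follow_up` to list the entries that still lack an owner or supporting evidence.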

Use these when employees, developers, or business teams need clear boundaries before broader control mapping begins.

Policy starter

Five-section Word template covering scope, acceptable uses, prohibited uses, data handling, reporting, and enforcement.

Skill approval

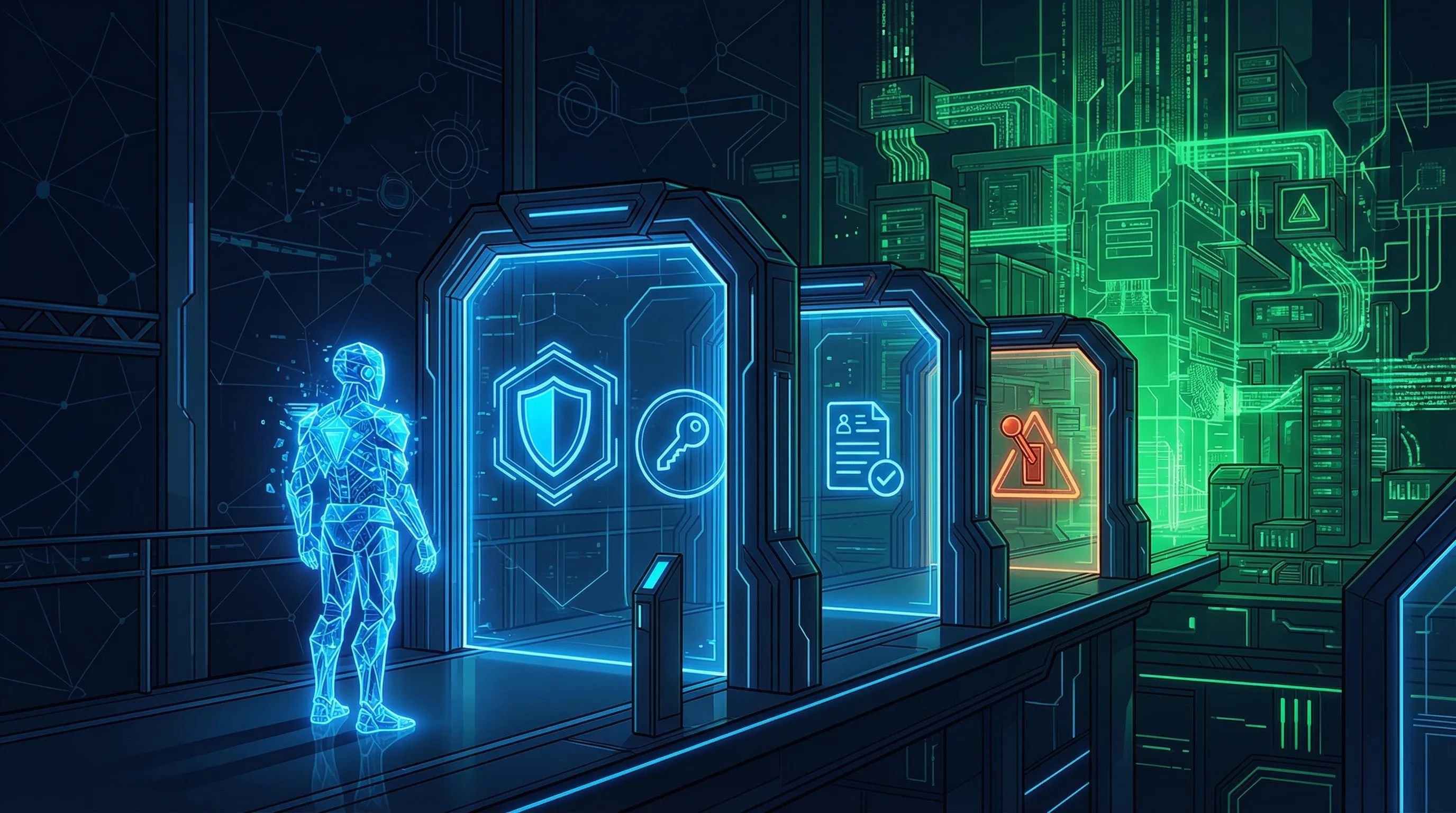

Record formal approval decisions for skills, connectors, and MCP integrations using approval, sandbox, hold, or reject classifications.

Vendor risk

Screen AI vendors, copilots, agents, and embedded AI suppliers before approval, procurement, or renewal sign-off.
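The skill approval record described above uses four classifications: approval, sandbox, hold, or reject. A minimal Python sketch of such a record might look like the following. Only the four classification values come from the description; the field names and the production-use rule are hypothetical and would need to match whatever the actual template records.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Decision(Enum):
    """The four classifications named in the skill approval description."""
    APPROVAL = "approval"
    SANDBOX = "sandbox"
    HOLD = "hold"
    REJECT = "reject"


@dataclass(frozen=True)
class SkillApprovalRecord:
    """One approval decision. Field names are illustrative assumptions."""
    item: str        # name of the skill, connector, or MCP integration
    item_type: str   # e.g. "skill", "connector", "mcp_integration"
    decision: Decision
    decided_by: str
    decided_on: date
    rationale: str = ""


def may_run_in_production(record):
    """Assumed rule: only an outright approval permits production use.

    Sandbox, hold, and reject all return False in this sketch.
    """
    return record.decision is Decision.APPROVAL
```

Parsing a stored value such as `Decision("sandbox")` raises `ValueError` for anything outside the four classifications, which keeps free-text decisions out of the record.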

Use these when the next problem is impact evidence, annual review readiness, consumer-rights handling, or deployer obligation tracking.

Impact evidence

Document AI impact, consumer-rights handling, annual-review readiness, and evidence records for Colorado-style deployer obligations.

Colorado deployer

Track high-risk determination, deployer obligations, annual-review readiness, and status records for Colorado AI Act preparation.

Security readiness

Track containment actions across control areas for OpenClaw deployments and route technical gaps into governance evidence.

Use these when autonomous or semi-autonomous agents can call tools, retain memory, trigger workflows, connect to MCP servers, or require shutdown evidence.

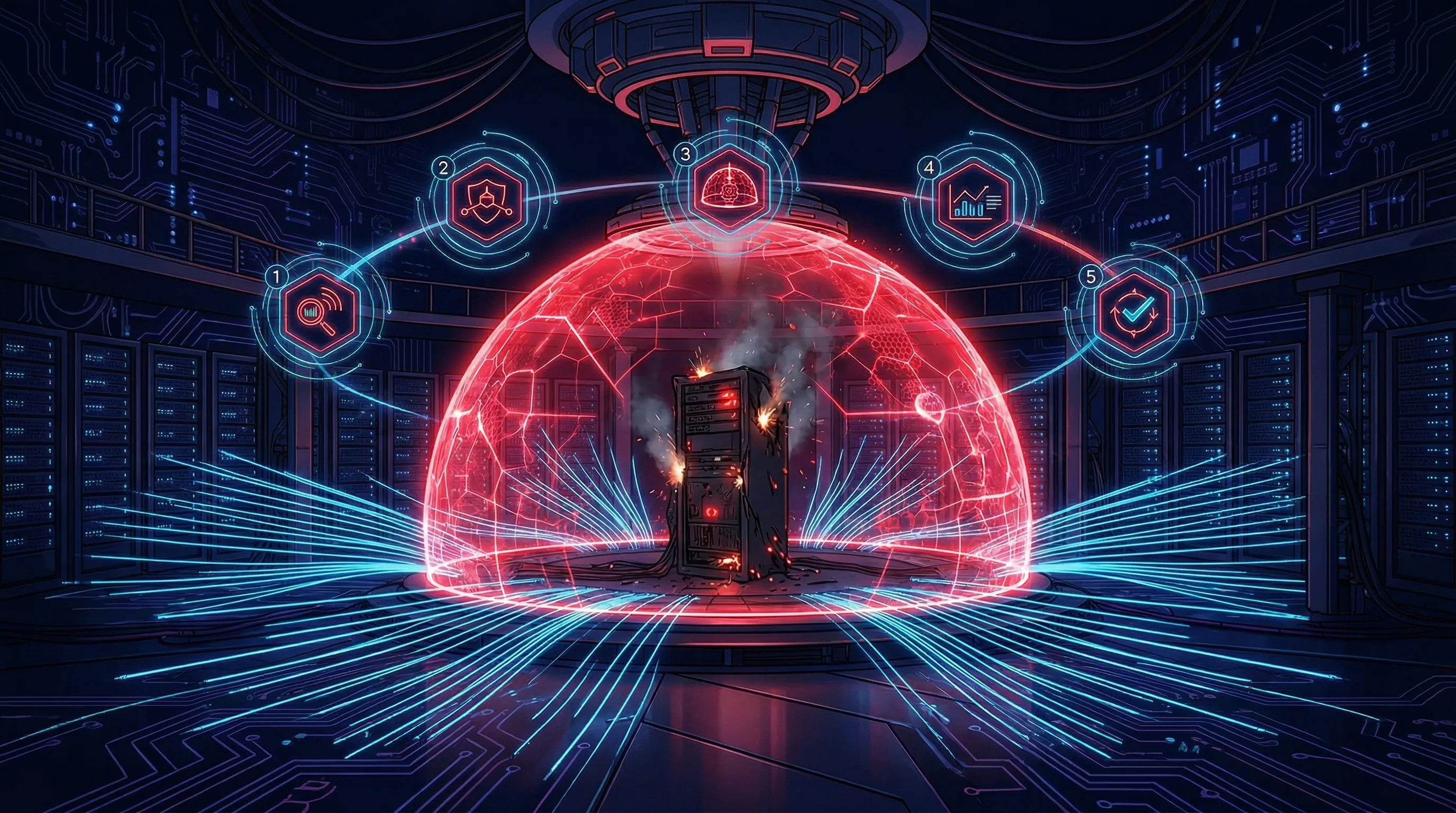

Agentic incident

Log agent incidents, classify autonomy failures, document kill-switch decisions, and retain rollback evidence.

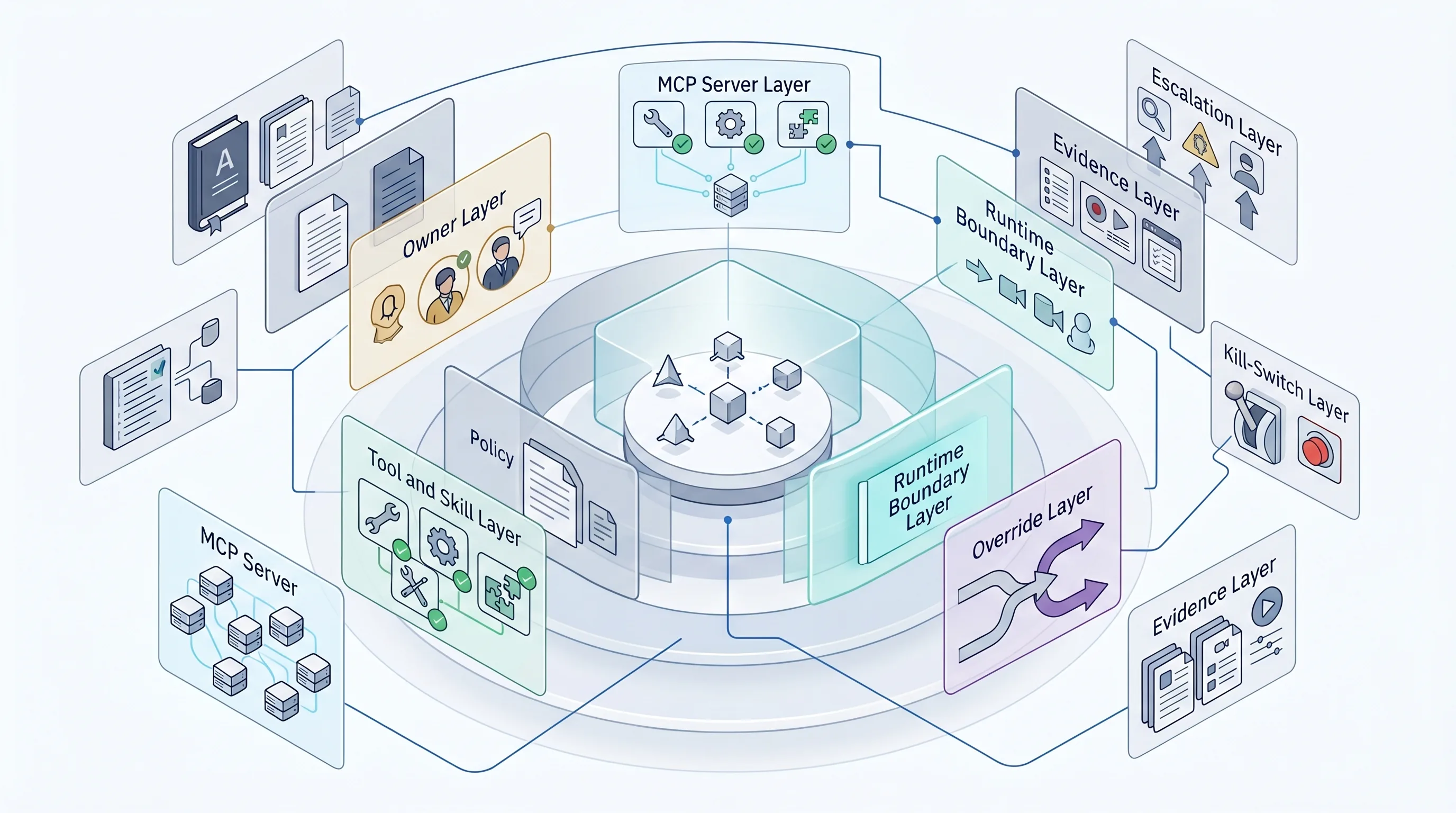

Operating model

Define governance layers for AI agents, MCP servers, tools, skills, override, kill-switches, evidence, and escalation.

OpenClaw playbook

Track OpenClaw discovery, agentic risk register entries, OWASP mapping, and AI policy addendum actions.

Use these when the audience is management, board, legal, risk, compliance, or security leadership rather than the individual system owner.

Board reporting

Brief leadership on AI inventory, risk posture, regulatory exposure, agentic AI risk, and 90-day governance decisions.

Incident reference

Use a five-step field guide for containment, evidence preservation, impact assessment, escalation, and remediation.

Next step

Use ACT-2 when free artifacts need to become a connected implementation evidence pack across governance, risk, vendor, agentic AI, and reporting workflows.

A standalone template helps you start. It does not create ownership discipline, cross-framework mapping, recurring review, vendor evidence, board cadence, or agentic AI escalation by itself.

The public downloads are intended to be available without login or email capture.

Start with the AI System Inventory Template if the organization has not catalogued AI use. Start with vendor due diligence if third-party AI tools are the immediate approval risk. Start with FRIA Lite or the Colorado toolkit when consumer-impact evidence is the current concern.

Move to ACT-1 when the team needs a structured starter control set. Move to ACT-2 when the team needs cross-framework evidence mapping, board reporting, vendor evidence, agentic AI governance, and reusable implementation workbooks.

The downloads support evidence collection and governance implementation. They do not replace legal advice, audit judgment, formal conformity assessment, certification decisions, or regulatory counsel.

The public downloads can be used as starter references. Paid ACT product licensing, reuse rights, and client-delivery scope should be reviewed on the relevant product and terms pages before commercial reuse.

Some standalone files are expected to resolve from the live Hostinger deployment. Their references are intentionally preserved in the HTML package.

Source and review note: This page was last reviewed on 6 May 2026 against the current Move78 public site baseline and relevant official or authoritative sources where laws, standards, frameworks, cybersecurity controls, product scope, pricing, support policy, or implementation guidance are discussed. It provides operational implementation guidance and product information only; it is not legal advice, tax advice, audit assurance, certification assurance, conformity-assessment advice, buyer-approval assurance, or security assurance. Validate legal, regulatory, contractual, tax, audit, and security decisions with qualified professionals.

Move78 free downloads are intentionally useful but limited. Each resource should help you inspect an implementation area, then route you to ACT-1, ACT-2, M78Armor, or the implementation sprint when the evidence gap is broader than one file.

| Free resource type | What it helps you inspect | What it does not include | Upgrade route |
|---|---|---|---|
| Starter inventory and acceptable use files | AI use cases, ownership, policy baseline, and early governance gaps. | Connected cross-framework evidence, board reporting, vendor workflows, or implementation sequencing. | ACT-1 Starter |
| Vendor diligence and board reporting previews | Buyer-facing evidence questions and executive reporting structure. | Full ACT-2 module logic, evidence map, and implementation pack depth. | ACT-2 Professional |
| Agentic AI, incident, and boundary artifacts | Agent owners, allowed actions, shutdown path, escalation, and incident records. | Runtime hardening, environment-specific configuration, or full ACT-2 governance workflow. | Boundary Register Lite → ACT-2 |
| FRIA, Colorado, and evidence-readiness files | Impact-assessment prompts, consumer-rights evidence, and compliance-adjacent records. | Legal review, regulatory determinations, or sector-specific advice. | Compare ACT tiers |

New public previews are available for buyers who want to inspect the ACT product structure before purchase.

Inspect ACT sample pack

Use these pages when a reader needs to move from general AI governance interest into buyer evidence, board-facing records, or implementation support.

Inventory, risk, vendor, oversight, agent boundary, escalation, and decision-record evidence for board or risk-committee review.

Review board evidence →

Bounded support route for owners, evidence maps, decisions, and a 30-day implementation backlog.

Review sprint route →

Source hierarchy, claim-control rules, conservative wording, and legal/security boundaries for Move78 content.

Review methodology →