RAG / Vector Trust & Data Disclosure Check

Assess in under 5 minutes whether the current RAG and vector pipeline could leak sensitive information or trust poisoned content.

This screen is for teams that run knowledge bases, retrieval-enabled copilots, or internal assistants and need a governance answer before a broader rollout or before granting access to higher-sensitivity data.

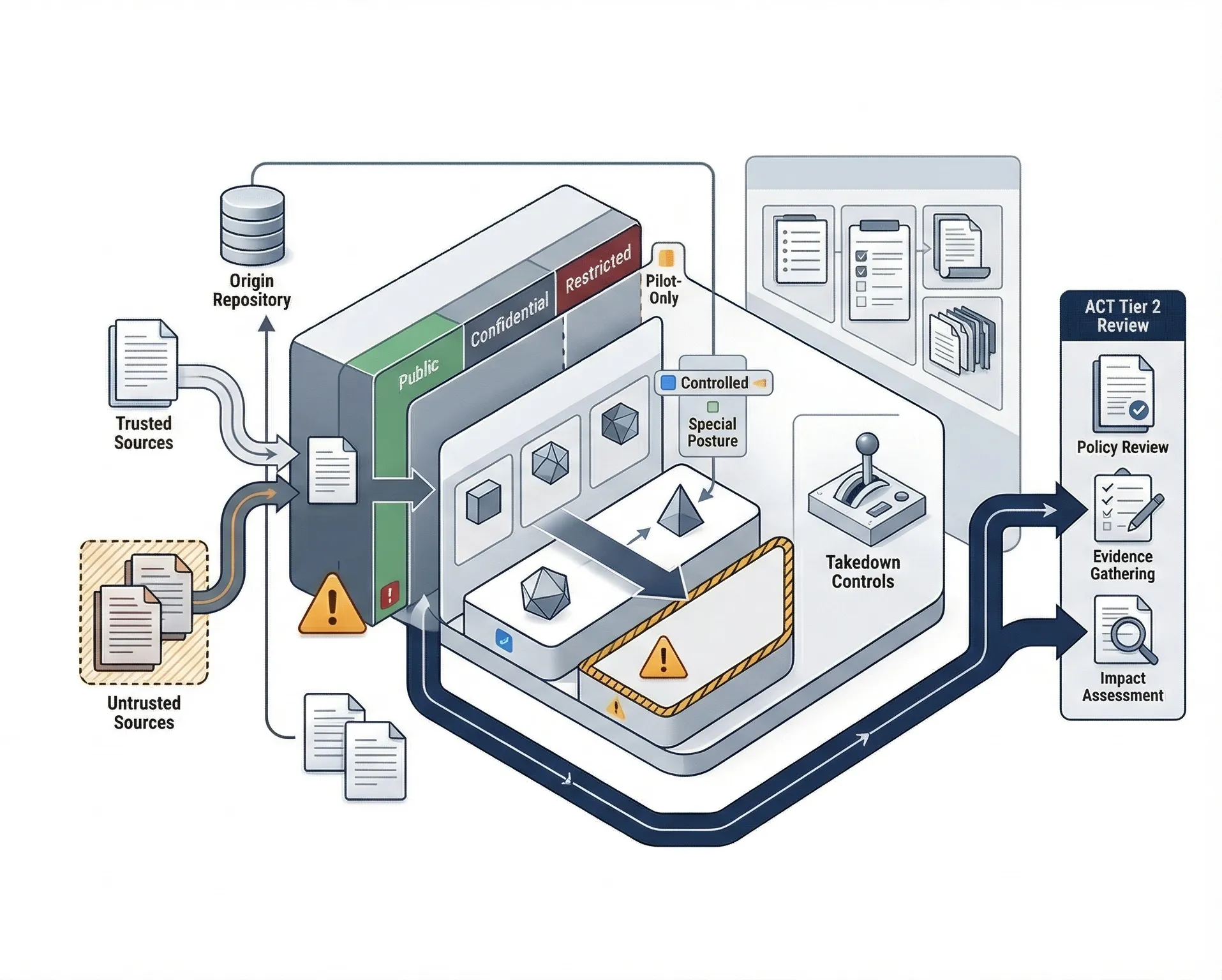

- Checks source trust, ingestion review, data-classification boundaries, leakage control, takedown readiness, and answer traceability.

- Flags whether the retrieval posture is controlled, constrained, materially risky, or not governable for enterprise use.

- Routes to AI Controls Professional when the missing layer is data-handling policy, incident readiness, evidence, or formal impact review.
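
As a rough illustration of how the six checks above could roll up into one of the four posture labels, here is a minimal Python sketch. The class name `RetrievalChecks`, the function `posture`, and the thresholds (how many missing controls map to each label) are all hypothetical assumptions made for this example; the source does not describe how the check actually scores or weights its answers.

```python
from dataclasses import dataclass, fields


@dataclass
class RetrievalChecks:
    """One yes/no answer per area named in the check (hypothetical structure)."""
    source_trust: bool
    ingestion_review: bool
    classification_boundaries: bool
    leakage_control: bool
    takedown_readiness: bool
    answer_traceability: bool


def posture(checks: RetrievalChecks) -> tuple[str, list[str]]:
    """Return a posture label and the list of missing controls.

    The cut-offs below are illustrative assumptions, not the real scoring.
    """
    missing = [f.name for f in fields(checks) if not getattr(checks, f.name)]
    count = len(missing)
    if count == 0:
        label = "controlled"
    elif count <= 2:
        label = "constrained"
    elif count <= 4:
        label = "materially risky"
    else:
        label = "not governable"
    return label, missing


if __name__ == "__main__":
    example = RetrievalChecks(
        source_trust=True,
        ingestion_review=True,
        classification_boundaries=False,
        leakage_control=True,
        takedown_readiness=False,
        answer_traceability=True,
    )
    label, missing = posture(example)
    print(label, missing)
```

Returning the missing controls alongside the label keeps the result actionable: a team can see which gaps drove the rating rather than only the rating itself.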