Why bounded autonomy matters

Here's the thing. Most agentic AI failures won't start with some cinematic rogue model event. They'll start with a team quietly giving an agent one permission too many and no one writing down where human approval still applies.

That's why I prefer the phrase bounded autonomy. It forces a more useful question than "do we have oversight?" The useful question is: which actions may proceed without a person, which actions pause for sign-off, and which actions should never be delegated at all?

In practice, this looks less like abstract ethics and more like change control. A mature enterprise already knows how to gate production changes, financial approvals, privileged access, and customer communications. Agentic AI should be governed the same way. If you wouldn't let a junior operator reset IAM roles or send legal notices without review, don't let an agent do it either.

Decision rule: treat autonomy like production access. The larger the blast radius, the tighter the approval threshold.

The five threshold categories that matter first

You do not need a perfect maturity model before deployment. You do need explicit thresholds in five categories. If even one of these is missing, the control model is too loose.

| Threshold | Allowed autonomously | Human sign-off trigger | Never delegate without redesign |
|---|---|---|---|
| Financial impact | Low-value internal tasks only | Any spend, invoice, refund, or contract amendment | High-value payments or treasury actions |
| Identity and access | Ticket triage, evidence collection | Provisioning, permission changes, token rotation | Admin role grants or emergency access |
| External communication | Drafting internal notes | Customer emails, regulator submissions, public posts | Legal notices, disciplinary communications |
| Code and infrastructure | Static analysis, sandbox tests | Production merges, pipeline changes, deployment approvals | Unreviewed runtime changes in live systems |
| People and rights impact | Scheduling and administrative support | Candidate screening, employee recommendations, case prioritization | Any consequential decision without documented review |

Notice the pattern. The threshold is not set by whether the model is "smart enough." It is set by consequence, reversibility, and evidence burden. That's a harder line. It should be.

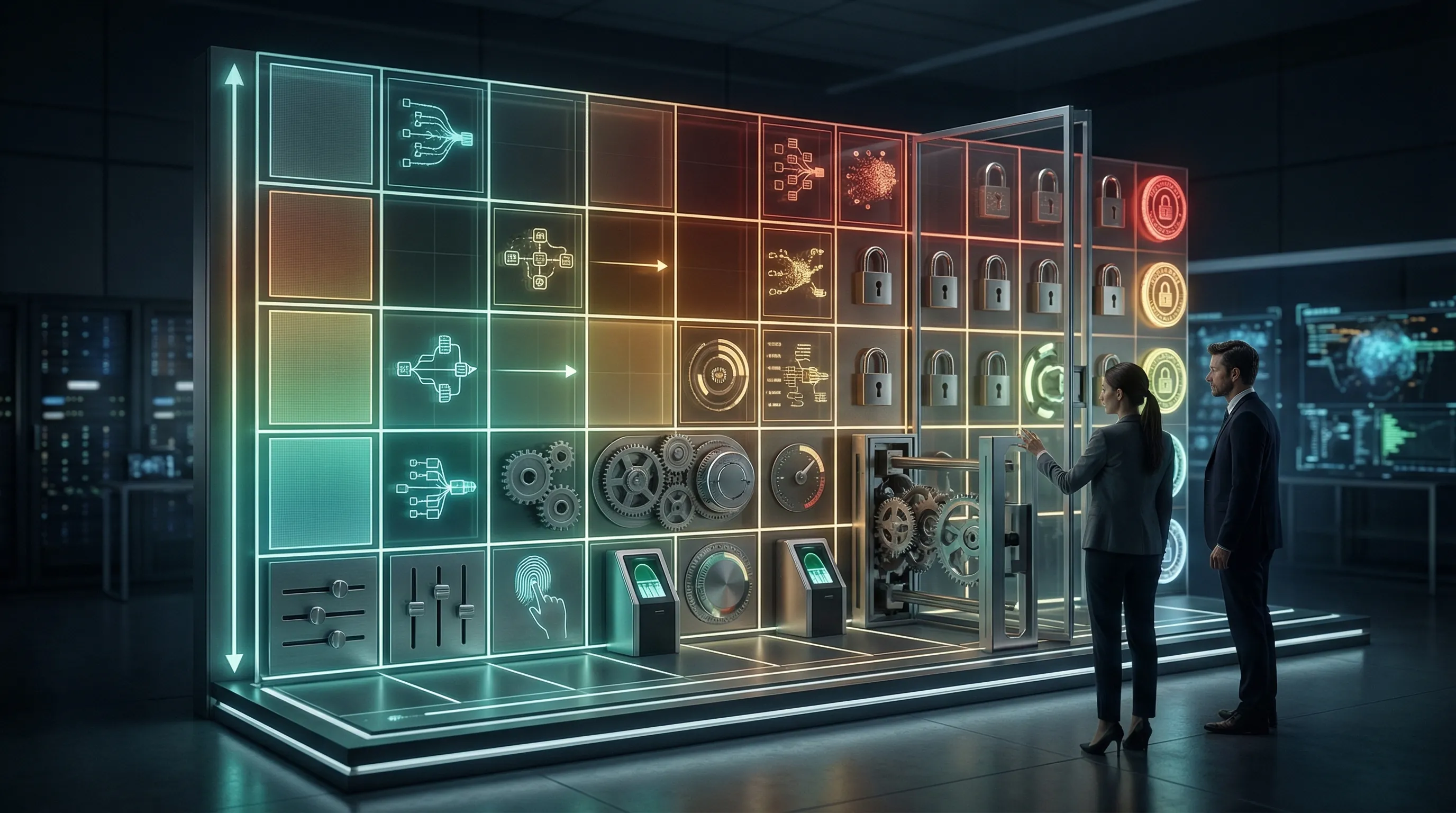

Build a three-zone approval matrix

The cleanest implementation model is a three-zone matrix. Green zone actions run automatically. Amber zone actions pause for approval. Red zone actions are prohibited unless the workflow is redesigned.

- Green zone: low-impact, reversible, logged actions inside a known environment. Think summarization, internal routing, ticket enrichment, or evidence collection.

- Amber zone: actions with moderate business impact, external visibility, or sensitive data access. These need named approvers and timeout logic.

- Red zone: privileged access changes, contractual commitments, people decisions, unrestricted code execution, or anything that can create legal or safety exposure quickly.

Where teams get sloppy is the amber zone. They either over-approve everything and kill the value case, or they quietly let amber tasks drift into green because the pilot seemed harmless. That drift is where audit trouble starts.
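To make the three zones concrete, here is a minimal Python sketch of a classifier. Everything in it is an assumption for illustration: the `Action` fields, the `classify` function, and the choice to treat any irreversible action as amber. The one design point worth copying is the order of checks. The most restrictive condition is tested first, so an action can only land in green by failing every stricter test.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    GREEN = "green"  # runs automatically
    AMBER = "amber"  # pauses for a named approver
    RED = "red"      # prohibited unless the workflow is redesigned


@dataclass(frozen=True)
class Action:
    # All fields are hypothetical attributes chosen for this sketch.
    name: str
    reversible: bool
    external: bool                 # visible outside the organization
    touches_sensitive_data: bool
    privileged: bool               # access changes, contracts, people decisions, unrestricted code execution


def classify(action: Action) -> Zone:
    # Most restrictive check first, so nothing drifts downward by accident.
    if action.privileged:
        return Zone.RED
    # Assumption: irreversible actions are at least amber.
    if action.external or action.touches_sensitive_data or not action.reversible:
        return Zone.AMBER
    return Zone.GREEN


summarize = Action("summarize_ticket", reversible=True, external=False,
                   touches_sensitive_data=False, privileged=False)
customer_email = Action("send_customer_email", reversible=False, external=True,
                        touches_sensitive_data=False, privileged=False)
grant_admin = Action("grant_admin_role", reversible=True, external=False,
                     touches_sensitive_data=True, privileged=True)

for a in (summarize, customer_email, grant_admin):
    print(a.name, classify(a).value)
```

Keeping the classification in one reviewed function also makes amber-to-green drift visible: moving a task between zones requires a code change someone has to approve.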

Set numeric triggers, not vague promises

"Human review for critical actions" is not a control. It is a slogan. Good control language uses numbers and named events. For example: any payment above USD 500, any outbound message to more than 100 recipients, any permission change touching a production tenant, any workflow with persistent memory touching personal data. Now your engineering team can encode the threshold instead of guessing it.
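As a rough sketch of what "encoding the threshold" could look like, the Python below turns the four example triggers into named checks. The limits come straight from the examples above; the `ActionRequest` fields and the trigger names are hypothetical and would need to match whatever your orchestration layer actually exposes.

```python
from dataclasses import dataclass

# Example limits from the text; treat them as placeholders, not recommendations.
PAYMENT_LIMIT_USD = 500
RECIPIENT_LIMIT = 100


@dataclass(frozen=True)
class ActionRequest:
    # Hypothetical request shape for this sketch.
    payment_usd: float = 0.0
    recipients: int = 0
    permission_change: bool = False
    target_is_production: bool = False
    persistent_memory: bool = False
    touches_personal_data: bool = False


def approval_triggers(req: ActionRequest) -> list[str]:
    """Return the named triggers this request hits; an empty list means none apply."""
    hits = []
    if req.payment_usd > PAYMENT_LIMIT_USD:
        hits.append("payment_above_limit")
    if req.recipients > RECIPIENT_LIMIT:
        hits.append("bulk_outbound_message")
    if req.permission_change and req.target_is_production:
        hits.append("production_permission_change")
    if req.persistent_memory and req.touches_personal_data:
        hits.append("persistent_memory_personal_data")
    return hits


print(approval_triggers(ActionRequest(payment_usd=750, recipients=20)))
```

Returning the trigger names, rather than a bare yes or no, means the approval request and the audit log can both say exactly which rule fired.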

What evidence proves your override model exists

If you're serious about governance, the threshold model needs evidence. Not just a slide. Evidence.

- Approval matrix: action types, autonomy zone, approver role, timeout, fallback, and log source.

- Use-case classification memo: what the agent does, who owns it, and which thresholds apply.

- Configuration artefacts: the thresholds as actually encoded, whether in code, workflow tooling, the orchestration layer, or a policy engine.

- Exception register: temporary overrides, justification, expiry date, and sign-off.

- Test cases: proof that amber actions stop and red actions fail closed.

This is where the analogy to incident response helps. You don't prove an escalation path by saying one exists. You prove it with the runbook, the pager list, the timestamped decision, and the post-incident record. Human override thresholds work the same way.
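The exception register is the evidence item that most often exists only in someone's inbox, so here is one way it could be represented. This is a sketch under assumptions: the `OverrideException` record and the `is_active` check are hypothetical names, and the fields simply mirror the list above (justification, expiry date, sign-off).

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class OverrideException:
    # Hypothetical register entry; fields mirror the evidence list.
    action_type: str
    justification: str
    approved_by: str   # a named person, not a role title
    expires_on: date


def is_active(entry: OverrideException, today: date) -> bool:
    """An override counts only if it is documented, signed off, and not expired."""
    documented = bool(entry.justification.strip()) and bool(entry.approved_by.strip())
    return documented and today <= entry.expires_on


entry = OverrideException(
    action_type="bulk_outbound_message",
    justification="One-off service notice during pilot",
    approved_by="J. Rivera",
    expires_on=date(2025, 6, 30),
)
print(is_active(entry, date(2025, 6, 15)))
print(is_active(entry, date(2025, 7, 1)))
```

Passing `today` in explicitly keeps the check easy to test, which matters when the test cases themselves are part of the evidence.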

Three design mistakes I keep seeing

- Approval by role title only. "Manager approval required" sounds fine until nobody can tell which manager owns the workflow. Use named control owners.

- One threshold for every tool. Email, code execution, finance systems, and CRM updates do not deserve the same autonomy model. Tool sensitivity changes the threshold.

- No fail-closed path. If the approval service goes down or the policy engine breaks, the safe outcome is not "just continue." It is stop, log, and alert.
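The fail-closed point is the easiest of the three to show in code. The sketch below is illustrative only: `gated_execute`, its callback parameters, and the logger name are all hypothetical. The idea is that the action runs only on an explicit `True` from the approval check, and any error in that check ends in stop, log, and alert.

```python
import logging
from typing import Callable

logger = logging.getLogger("agent.gate")  # hypothetical logger name


def gated_execute(
    action_name: str,
    check_approval: Callable[[str], bool],
    run: Callable[[], None],
    alert: Callable[[str], None],
) -> str:
    """Run the action only on explicit approval; any failure means stop."""
    try:
        approved = check_approval(action_name)
    except Exception as exc:
        # Approval service or policy engine is down: stop, log, alert.
        logger.error("approval check failed for %s: %s", action_name, exc)
        alert(f"approval check unavailable, blocked {action_name}")
        return "blocked"
    if approved is not True:
        # Anything other than an explicit True is treated as a denial.
        logger.info("approval denied for %s", action_name)
        return "denied"
    run()
    return "executed"


def approval_service_down(name: str) -> bool:
    raise ConnectionError("approval service unreachable")


status = gated_execute(
    "rotate_token",
    approval_service_down,
    run=lambda: print("rotating token"),
    alert=lambda msg: print("ALERT:", msg),
)
print(status)
```

Note the `is not True` comparison: a `None` or a malformed response from the approval service is handled as a denial, not as a pass.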

Out of scope by design: this article is not trying to solve model evaluation, fairness testing, or vendor benchmarking. Those matter, but they are separate control tracks. This article is about action thresholds, because that's where agentic systems create immediate operational exposure.