What the EU AI Act Is

The EU AI Act is Regulation (EU) 2024/1689. It entered into force on 1 August 2024, but it does not switch on all at once. It applies in stages. That matters because most operational mistakes happen when companies treat the Act as either fully future-tense or fully live in every respect. Neither view is accurate. The binding legal text is on EUR-Lex, and the Commission maintains a practical overview on its AI Act policy page.

Structurally, the Act is a single horizontal regulation that sets Union-wide rules for the development, placing on the market, putting into service, and use of AI systems in the EU. It is built around a risk-based model, operator roles, and lifecycle obligations. That gives it a very different compliance shape from country regimes built as rule stacks or sector overlays.

The easiest way to read it commercially is this: the Act is trying to create one common market framework for AI while preserving a high level of protection for health, safety, and fundamental rights. For companies, that translates into three real questions. What role are you playing? What risk category are your systems in? What documented evidence can you produce if challenged?

That last point is where most "EU AI Act explainers" fail. They summarize the law but do not tell a provider, deployer, or procurement owner what to build. The useful version of the Act is not a legal abstract. It is an operating model: classification, governance, documentation, monitoring, and review.

Official source links: Regulation (EU) 2024/1689 on EUR-Lex · European Commission AI Act overview · First rules applicable notice

Need the obligation map, not just the summary? EU AI Compass gives users a dedicated EU AI Act learning and screening environment, including timeline guidance, free tools, and access to the EU AI Act Compliance Toolkit.


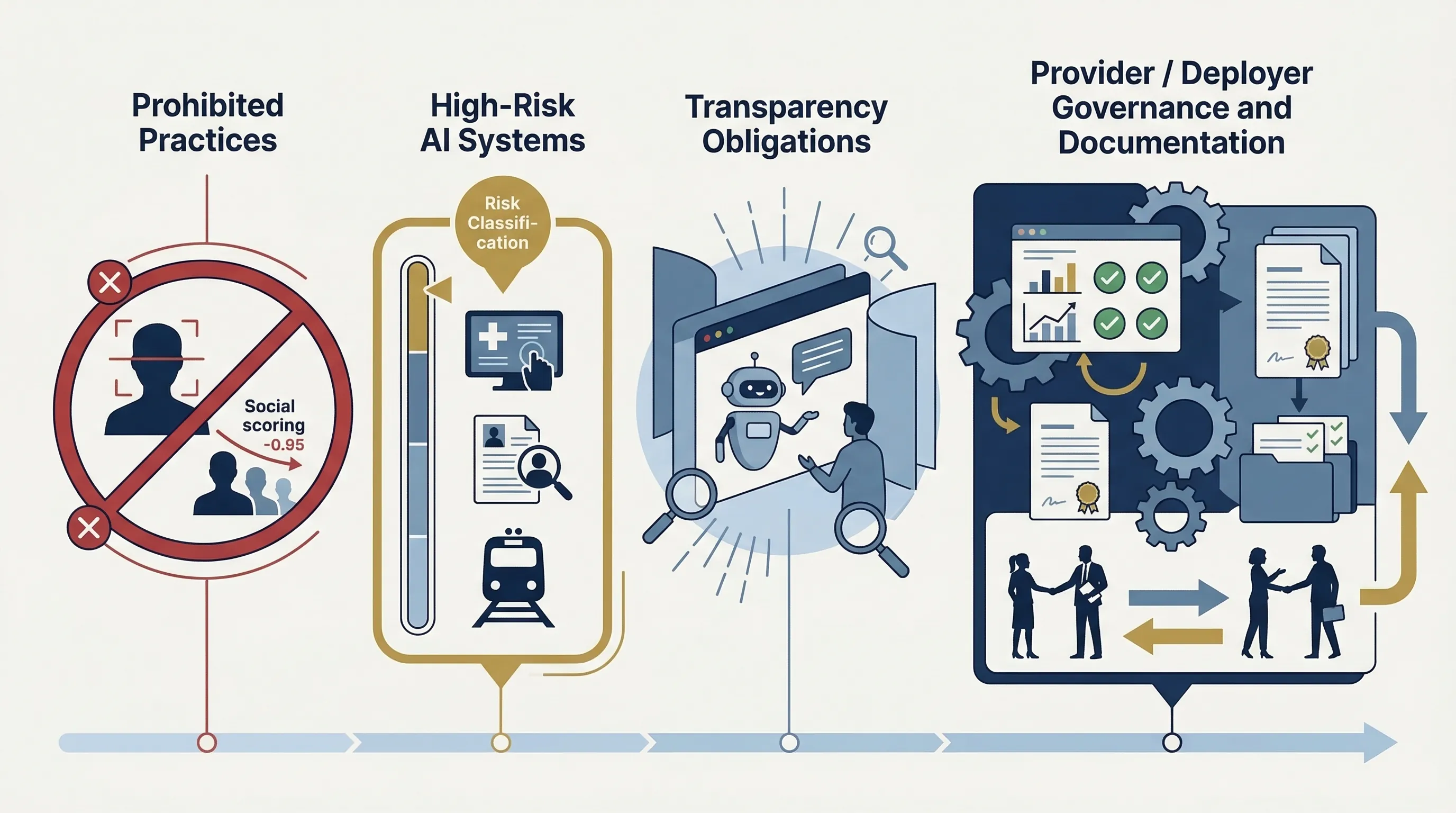

The Four Risk Layers

The EU AI Act makes more sense once you stop thinking in slogans and start thinking in buckets. The Act does not say "AI is regulated" in one flat way. It splits the landscape into four practical layers: prohibited practices, high-risk systems, certain transparency-governed systems, and the rest.

| Risk category | What it means | Typical examples | Practical implication |
|---|---|---|---|
| Prohibited | Uses considered unacceptable under the Act | Manipulative, exploitative, certain social-scoring and biometric uses | Do not design, market, deploy, or use them in the prohibited form |
| High-risk | Systems that trigger the Act's heaviest lifecycle obligations | AI in employment, education, essential services, critical infrastructure, regulated products | Requires structured governance, documentation, monitoring, and operator controls |
| Transparency-specific | Systems with defined disclosure obligations | Chat interfaces, emotion recognition, certain synthetic content contexts | Requires user-facing transparency and related controls |
| Minimal risk | Most other systems not pulled into heavier obligations | Lower-impact assistive or productivity tooling | No full high-risk regime, but governance is still commercially wise |

In practice, most organizations should not start by assuming their systems are high-risk. That creates noise. They should start with role classification and prohibited-practices screening, then test whether any system falls into the high-risk architecture or transparency-specific layer. That sequence is faster and less error-prone.
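The screening order described above can be sketched in code. This is a minimal illustration, not a legal test: `ScreeningAnswers`, `Layer`, and `triage` are hypothetical names, and the yes/no answers are assumed to come from a human reviewer applying the Act's actual criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    TRANSPARENCY = "transparency-specific"
    MINIMAL = "minimal risk"


@dataclass
class ScreeningAnswers:
    """Hypothetical reviewer answers for one AI system."""
    operator_role: str                  # e.g. "provider" or "deployer"
    matches_prohibited_practice: bool
    used_in_high_risk_context: bool
    has_disclosure_obligation: bool


def triage(answers: ScreeningAnswers) -> Layer:
    """Apply the screening order from the text; the first match wins."""
    # Role classification comes first: without it, controls cannot be assigned.
    if not answers.operator_role:
        raise ValueError("classify the operator role before screening")
    if answers.matches_prohibited_practice:
        return Layer.PROHIBITED
    if answers.used_in_high_risk_context:
        return Layer.HIGH_RISK
    if answers.has_disclosure_obligation:
        return Layer.TRANSPARENCY
    return Layer.MINIMAL


if __name__ == "__main__":
    chatbot = ScreeningAnswers("deployer", False, False, True)
    print(triage(chatbot).value)
```

The first-match rule is a simplification made for this sketch. A system can sit in a high-risk context and also carry disclosure duties, so a real register would probably record every flag rather than a single layer.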

Prohibited AI Practices

This is the first screening layer because it eliminates certain use cases before you waste time building a broader compliance program around them. The prohibited-practices layer is commercially important precisely because it is simpler than the rest of the Act. Either the use case falls into a prohibited pattern or it does not.

The Commission published guidelines on prohibited AI practices in February 2025 to support the first AI Act rules becoming applicable. That does not replace the law, and the guidelines are non-binding. But they matter because they show how the Commission expects those prohibitions to be interpreted in practice. See the Commission's first rules applicable notice and linked guidance materials.

| Prohibited practice type | Trigger | What companies must not do |
|---|---|---|
| Manipulative or deceptive use causing harm | AI designed or deployed to materially distort behavior in harmful ways | Do not engineer or deploy the system in that prohibited form |
| Exploitative use tied to vulnerability | Use that exploits age, disability, or other vulnerability categories in harmful ways | Do not operationalize the use case as a commercial or public workflow |
| Social scoring and similar public-authority misuse | Use of AI to evaluate or classify persons in prohibited social-scoring ways | Do not treat these profiles as valid governance or product features |
| Certain biometric and law-enforcement uses | Specific practices explicitly restricted or prohibited by the Act | Escalate to legal review immediately rather than assuming availability |

Bluntly: if your product team says "we'll deal with the legal layer later," this is the place that kills that logic. Prohibited-practice analysis belongs at concept stage, not launch stage.

High-Risk AI Systems

High-risk AI is where the Act gets operationally serious. This is the layer that drives structured documentation, governance, data and lifecycle controls, and post-market monitoring. The exact legal triggers sit in the Act itself, but from a working perspective the question is whether the system is used in a context where errors or misuse can materially affect rights, safety, or access to important opportunities and services.

For SMEs, the trap is usually one of two extremes. Either they assume nothing they build is high-risk because they are "just a software company," or they assume everything with a model is high-risk and freeze. Both are wrong. The better method is use-case classification backed by evidence.

| High-risk obligation | Evidence artifact | Owner |
|---|---|---|
| Risk management and controls | Risk register, treatment plan, impact review, approval record | Governance owner + product owner |
| Technical and compliance documentation | System file, design record, test record, release evidence | Engineering + compliance |
| Human oversight and escalation | Oversight procedure, decision thresholds, intervention workflow | Operations + business owner |
| Post-market monitoring and incident handling | Monitoring SOP, issue log, corrective action record | Operations + support + legal |

The practical message is not "be afraid of high-risk AI." It is "stop shipping systems in consequential contexts without being able to explain how they are governed." That is what the Act is correcting.
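One way to make "able to explain how they are governed" testable is a gap check against the evidence table above. The sketch below is illustrative only: the artifact names are copied from that table, while `REQUIRED_EVIDENCE` and `missing_evidence` are hypothetical names, and the artifact list is this article's working set rather than the Act's legal wording.

```python
# Obligation -> evidence artifacts, taken from the table above.
REQUIRED_EVIDENCE = {
    "Risk management and controls": [
        "Risk register", "Treatment plan", "Impact review", "Approval record",
    ],
    "Technical and compliance documentation": [
        "System file", "Design record", "Test record", "Release evidence",
    ],
    "Human oversight and escalation": [
        "Oversight procedure", "Decision thresholds", "Intervention workflow",
    ],
    "Post-market monitoring and incident handling": [
        "Monitoring SOP", "Issue log", "Corrective action record",
    ],
}


def missing_evidence(on_file: set[str]) -> dict[str, list[str]]:
    """Return, per obligation, the artifacts that are not yet on file."""
    gaps = {}
    for obligation, artifacts in REQUIRED_EVIDENCE.items():
        absent = [name for name in artifacts if name not in on_file]
        if absent:
            gaps[obligation] = absent
    return gaps


if __name__ == "__main__":
    on_file = {"Risk register", "System file", "Issue log"}
    for obligation, absent in missing_evidence(on_file).items():
        print(f"{obligation}: missing {', '.join(absent)}")
```

An empty result means every listed artifact exists. It says nothing about whether each artifact is adequate, which still needs human review.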

Provider vs Deployer vs Other Operator Roles

Most compliance failures begin with role confusion. A provider is not a deployer. A deployer is not just a passive user. Importers, distributors, authorized representatives, and product integrators can also matter. If you get the role wrong, the control assignment collapses.

| Role | Core responsibilities | Common SME mistake |
|---|---|---|
| Provider | Design, development, placing on the market, technical documentation, system governance | Assuming the reseller or customer carries the main burden |
| Deployer | Use of the system in operations, human oversight, instructions adherence, operational controls | Assuming "we did not build it" removes compliance duties |
| Importer / distributor | Market-side checks and distribution-related obligations | Treating channel activity as legally invisible |
| Integrator / wrapper owner | May reshape system behavior through configuration, workflow design, and deployment context | Blaming the base model vendor for obligations triggered by the wrapped service |

This matters for procurement too. Buying a model or API does not eliminate your obligations if you control the deployment context, output handling, user interface, or decision workflow. That is one of the most expensive misconceptions in the market.

Common failure pattern: teams classify themselves as "just deployers" even though they materially modify workflow logic, configure system behavior, or package the service under their own brand. That position often collapses under scrutiny.
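The failure pattern above can be turned into a simple internal review prompt. The sketch below is a hypothetical checklist, not the Act's legal test for role reclassification: `IntegrationProfile` and `deployer_claim_red_flags` are invented names, and the three flags are only the ones named in the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class IntegrationProfile:
    """Hypothetical facts about how a team uses a third-party AI system."""
    modifies_workflow_logic: bool
    configures_system_behavior: bool
    ships_under_own_brand: bool


def deployer_claim_red_flags(profile: IntegrationProfile) -> list[str]:
    """List reasons a 'just a deployer' self-classification needs legal review."""
    checks = [
        (profile.modifies_workflow_logic, "materially modifies workflow logic"),
        (profile.configures_system_behavior, "configures system behavior"),
        (profile.ships_under_own_brand, "packages the service under its own brand"),
    ]
    return [reason for triggered, reason in checks if triggered]


if __name__ == "__main__":
    wrapper = IntegrationProfile(True, False, True)
    flags = deployer_claim_red_flags(wrapper)
    if flags:
        print("Escalate role classification:", "; ".join(flags))
```

Any non-empty result is a reason to escalate the role question, not a conclusion that the team is a provider.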

EU AI Act Timeline and Phased Application

The timeline is where bad summaries create real risk. The Act entered into force on 1 August 2024, but obligations phase in. The first rules became applicable on 2 February 2025. General-purpose AI model obligations began applying on 2 August 2025. Most high-risk AI obligations and the main enforcement layer apply from 2 August 2026. Additional product-linked timelines extend further, with high-risk AI embedded in regulated products following on 2 August 2027. The cleanest official summary is the Commission's AI Act implementation notice, backed by the legal text on EUR-Lex.

| Milestone | Date | What becomes relevant |
|---|---|---|
| Entry into force | 1 Aug 2024 | The Act becomes law; phased application begins from this point |
| First rules apply | 2 Feb 2025 | Prohibited practices rules apply; AI literacy obligations also begin |
| GPAI model obligations | 2 Aug 2025 | General-purpose AI model obligations begin to matter operationally |
| Main high-risk phase | 2 Aug 2026 | Most high-risk obligations become the priority deadline for companies |

That is why the August 2026 deadline is the real commercial urgency point for SMEs. It is close enough to force planning and late enough that many SMEs still have nothing operational in place.
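The phased application can be expressed as a small date lookup. The sketch below uses only the four milestone dates from the table above; `MILESTONES`, `applicable_milestones`, and `days_until_next` are hypothetical names, and the list is deliberately not a complete statement of every date in the Act.

```python
from datetime import date
from typing import Optional

# (application date, label) pairs taken from the milestone table above.
MILESTONES = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "First rules apply"),
    (date(2025, 8, 2), "GPAI model obligations"),
    (date(2026, 8, 2), "Main high-risk phase"),
]


def applicable_milestones(on: date) -> list[str]:
    """Labels of all milestones already reached on the given date."""
    return [label for start, label in MILESTONES if start <= on]


def days_until_next(on: date) -> Optional[int]:
    """Days until the next listed milestone, or None if all have passed."""
    remaining = [(start - on).days for start, _ in MILESTONES if start > on]
    return min(remaining) if remaining else None


if __name__ == "__main__":
    today = date.today()
    print("Already applicable:", applicable_milestones(today))
    print("Days to next milestone:", days_until_next(today))
```

Keeping the dates in one list means a single edit updates every planning report that depends on them.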

Mapping the EU AI Act to ISO 42001 and NIST AI RMF

This is the practical bridge that makes a compliance program commercially defensible. The EU AI Act tells you what the law expects. ISO 42001 gives you the management-system structure. NIST AI RMF gives you the risk-management method. You do not need three separate governance programs. You need one operating system with three lenses.

| EU AI Act requirement theme | ISO 42001 | NIST AI RMF | Evidence |
|---|---|---|---|
| Leadership, policy, accountability | Clauses 5.1-5.3 | GOVERN | Policy, RACI, committee charter, management review minutes |
| Risk and impact controls | Clauses 6.1, 8.2-8.4 | MAP / MEASURE / MANAGE | Risk register, impact assessments, treatment plans, monitoring thresholds |
| Human oversight and deployment controls | Annex A + operational controls | GOVERN / MANAGE | Oversight procedure, operating instructions, escalation rules |
| Documentation and monitoring | Clauses 7.5, 9.1-9.3, 10.2 | MEASURE / MANAGE | System file, monitoring logs, incident records, corrective actions |

If you already use ISO 42001 or NIST AI RMF, the Act becomes easier because you are not building governance from zero. If you use none of them, the Act becomes expensive because every obligation has to be invented from scratch inside the company.
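The "one operating system with three lenses" idea can be modeled as a single crosswalk that is queried from any framework's side. The sketch below encodes the mapping table above as data; `CrosswalkRow`, `CROSSWALK`, and `evidence_for_nist_function` are hypothetical names, and the mapping reflects this article's table rather than an official crosswalk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CrosswalkRow:
    theme: str                       # EU AI Act requirement theme
    iso_42001: str                   # ISO 42001 reference as given in the table
    nist_functions: tuple[str, ...]  # NIST AI RMF functions
    evidence: tuple[str, ...]        # evidence items serving all three lenses


CROSSWALK = (
    CrosswalkRow("Leadership, policy, accountability", "Clauses 5.1-5.3",
                 ("GOVERN",),
                 ("Policy", "RACI", "Committee charter", "Management review minutes")),
    CrosswalkRow("Risk and impact controls", "Clauses 6.1, 8.2-8.4",
                 ("MAP", "MEASURE", "MANAGE"),
                 ("Risk register", "Impact assessments", "Treatment plans",
                  "Monitoring thresholds")),
    CrosswalkRow("Human oversight and deployment controls",
                 "Annex A + operational controls",
                 ("GOVERN", "MANAGE"),
                 ("Oversight procedure", "Operating instructions", "Escalation rules")),
    CrosswalkRow("Documentation and monitoring", "Clauses 7.5, 9.1-9.3, 10.2",
                 ("MEASURE", "MANAGE"),
                 ("System file", "Monitoring logs", "Incident records",
                  "Corrective actions")),
)


def evidence_for_nist_function(function: str) -> list[str]:
    """Evidence items for every theme mapped to one NIST AI RMF function."""
    items: list[str] = []
    for row in CROSSWALK:
        if function.upper() in row.nist_functions:
            # Preserve table order and avoid listing an item twice.
            items.extend(e for e in row.evidence if e not in items)
    return items


if __name__ == "__main__":
    print(evidence_for_nist_function("govern"))
```

Because each evidence item is stored once and tagged with all three lenses, an audit request framed in any of the three vocabularies resolves to the same records.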

EU AI Act vs China vs Colorado

The comparison is useful only if it stays structural. The EU AI Act is a single horizontal regulation. China uses a layered rule stack around service behavior and content governance. Colorado is a state law with a narrower but still meaningful high-risk and algorithmic-discrimination focus. One checklist cannot handle all three cleanly.

| Dimension | EU AI Act | China AI stack | Colorado AI Act |
|---|---|---|---|
| Legal architecture | Single horizontal regulation | Multiple binding instruments | State statute |
| Primary entry point | Role and risk classification | Service classification and content controls | High-risk decision systems and algorithmic discrimination |
| Operational emphasis | Risk-based lifecycle obligations | Provider responsibility, transparency, labeling, service governance | Impact assessment, notice, policy, governance, safe-harbor logic |
| Suggested first implementation step | Operator-role analysis | Rule-stack applicability analysis | High-risk system scoping |

The commercial takeaway is the same as with every multi-jurisdiction AI program: use one evidence system, not one entry logic. The evidence can overlap. The initial classification path cannot.

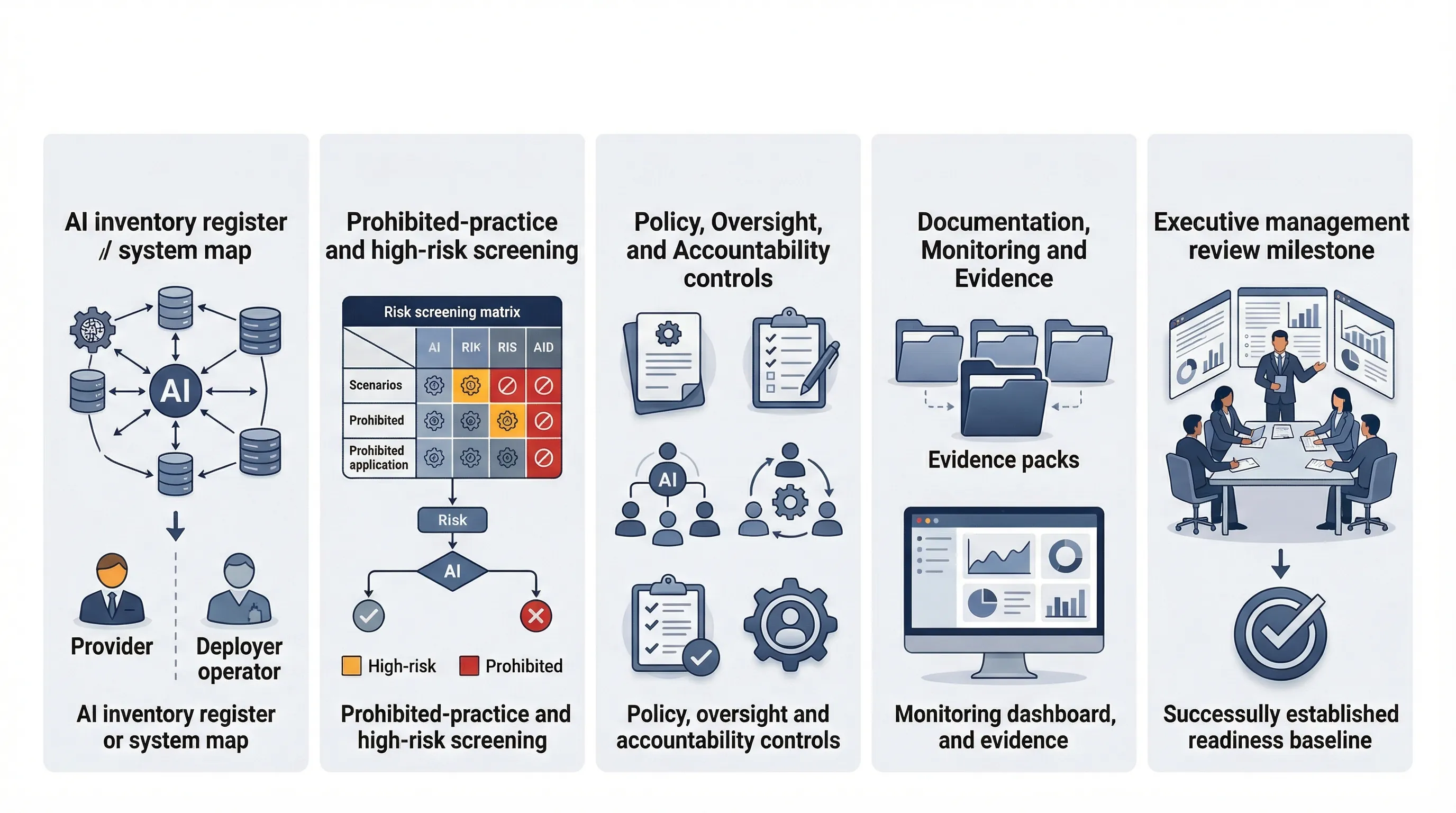

90-Day Readiness Sprint for SMEs

You do not need a theory project. You need a sprint that produces classification decisions, ownership, controls, and evidence before the August 2026 high-risk deadline arrives.

- Weeks 1-2: inventory all AI systems, classify operator roles, and run a prohibited-practices screen.

- Weeks 3-4: identify transparency-governed and potentially high-risk systems, and document why they fall in or out.

- Weeks 5-6: assign accountability, publish core policy language, and define oversight, monitoring, and escalation workflows.

- Weeks 7-9: build the evidence set: system files, risk records, impact assessments, operating instructions, and vendor governance controls.

- Weeks 10-12: run management review, test incident handling, validate evidence completeness, and approve the operating baseline.

If you miss that window, the problem is usually not legal complexity. It is ownership failure. No one owns classification. No one owns the evidence stack. No one owns vendor change control. Fix the operating model first.
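To put calendar dates on the five phases listed above, the week ranges can be converted from a chosen start date. This is a rough planning sketch: `PHASES`, `sprint_schedule`, and `latest_start` are hypothetical names, the phase labels are shortened from the list, and the plan as written covers 12 weeks (84 days), slightly under the 90-day label.

```python
from datetime import date, timedelta

# (first week, last week, short label) for each phase in the list above.
PHASES = [
    (1, 2, "Inventory, operator roles, prohibited-practices screen"),
    (3, 4, "Transparency and high-risk scoping"),
    (5, 6, "Accountability, policy, oversight workflows"),
    (7, 9, "Evidence set"),
    (10, 12, "Management review and baseline approval"),
]


def sprint_schedule(start: date) -> list[tuple[date, date, str]]:
    """Return (first day, last day, label) for each phase, inclusive."""
    schedule = []
    for first_week, last_week, label in PHASES:
        phase_start = start + timedelta(weeks=first_week - 1)
        phase_end = start + timedelta(weeks=last_week) - timedelta(days=1)
        schedule.append((phase_start, phase_end, label))
    return schedule


def latest_start(deadline: date) -> date:
    """Latest start date that finishes all 12 weeks the day before the deadline."""
    return deadline - timedelta(weeks=12)


if __name__ == "__main__":
    start = latest_start(date(2026, 8, 2))
    for phase_start, phase_end, label in sprint_schedule(start):
        print(f"{phase_start} to {phase_end}: {label}")
```

The latest-start calculation leaves no slack, so in practice a team would likely start earlier to absorb review cycles and vendor delays.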

Need the templates, not just the article? EU AI Act readiness becomes real when you can show policy, ownership, evidence, and role-based screening. EU AI Compass is the dedicated front door, and the toolkit store is where users can access the full solutions stack.
