What MITRE ATLAS Is

MITRE ATLAS is the Adversarial Threat Landscape for Artificial-Intelligence Systems. MITRE describes it as a globally accessible, living knowledge base of adversary tactics and techniques against AI-enabled systems, based on real-world attack observations and realistic demonstrations from AI red teams and security groups. That wording matters because it places ATLAS squarely in the threat-informed defense category, not in regulation or management-system governance. See the official MITRE ATLAS site.

That makes ATLAS commercially useful for teams deploying LLMs, copilots, RAG systems, autonomous agents, or AI-assisted workflows. It provides a structured way to ask: how would an attacker actually target this kind of system, what techniques are likely, and what mitigations or detections should exist before the system goes live?

The main positioning risk is obvious. If you treat ATLAS like a compliance checklist, you will misuse it. ATLAS is about adversary behavior and defensive use. It belongs next to AI security architecture, red teaming, incident response, and control selection - not next to checkbox legal summaries.

Official source links: MITRE ATLAS · Center for Threat-Informed Defense Secure AI update · MITRE ATLAS OpenClaw Investigation

MITRE ATLAS vs MITRE ATT&CK

Security teams often ask whether ATLAS is just ATT&CK with AI branding. That is the wrong frame. ATT&CK is the broader cyber adversary behavior framework. ATLAS is AI-specific. It is concerned with tactics and techniques against AI-enabled systems and the malicious use of AI in cyber contexts.

| Dimension | MITRE ATLAS | MITRE ATT&CK |
|---|---|---|
| Primary scope | Adversary tactics and techniques against AI-enabled systems | Broader cyber adversary behavior knowledge base |
| Primary use | AI threat-informed defense, AI security review, adversary emulation for AI | Enterprise cyber defense, intrusion analysis, broader ATT&CK mapping |
| Commercial value | Hardens AI-enabled systems and agentic workflows | Hardens broader enterprise environments |

The practical takeaway is simple: ATT&CK remains useful, but AI-enabled systems create attack paths and behaviors that need more specific treatment. That is the niche ATLAS fills.

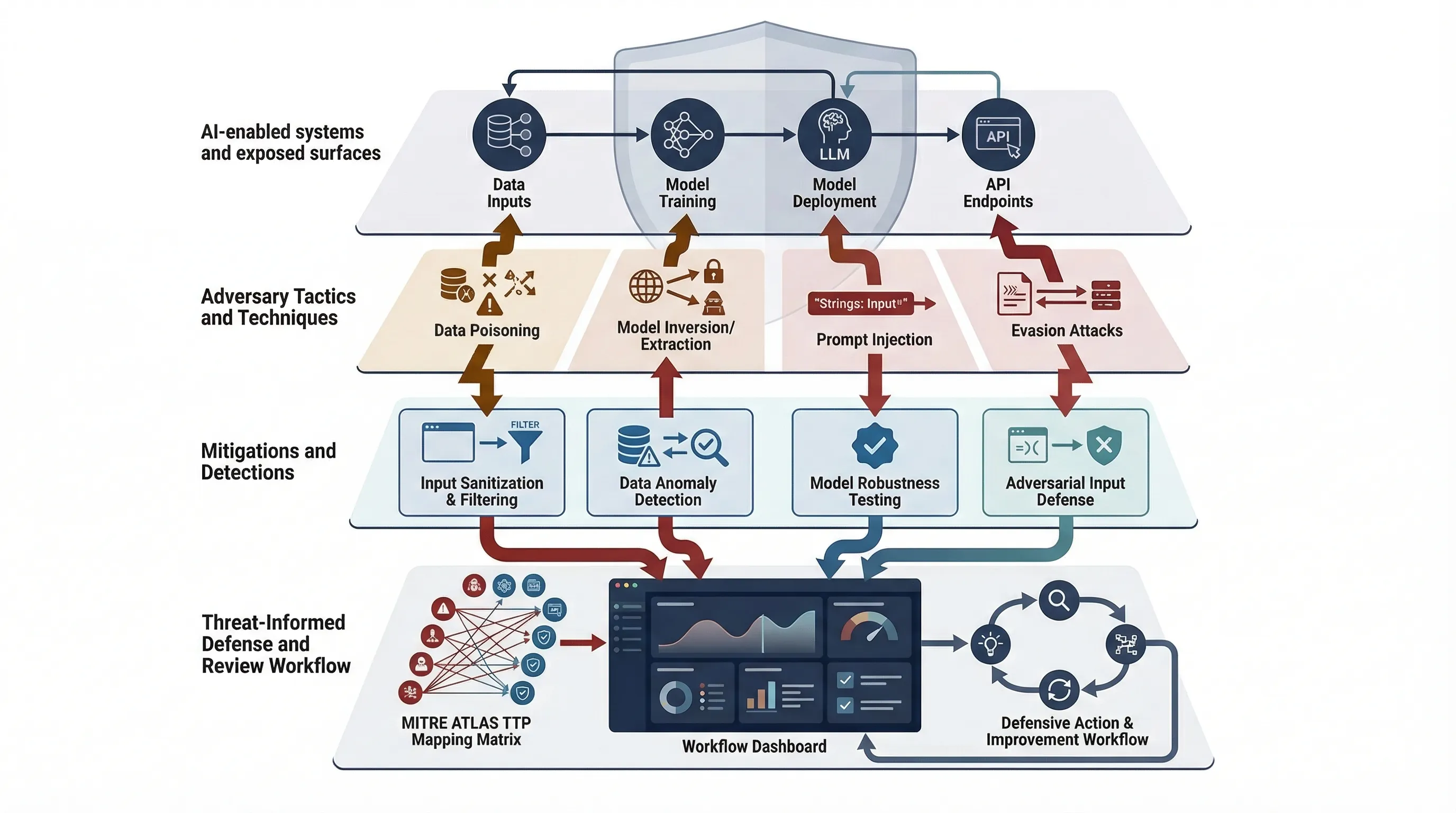

Tactics, Techniques, and Mitigations

ATLAS is useful because it gives defenders a language for attacker behavior rather than just a list of abstract risks. The structure is familiar to anyone who has worked with threat-informed defense: tactics are the attacker objectives, techniques are how those objectives are pursued, and mitigations are the defender responses that can reduce likelihood or impact.

| Layer | What it means | Defender use |
|---|---|---|
| Tactics | What the adversary is trying to achieve against an AI-enabled system | Helps scope defensive objectives and incident categories |
| Techniques | How the adversary tries to achieve that objective | Supports review, emulation, control design, and detections |
| Mitigations | Defensive measures that can reduce or interrupt attack paths | Supports hardening, monitoring, incident response, and assurance |

This is exactly why ATLAS is different from a general AI risk catalog. It is anchored in hostile behavior and defensive consequence, not only in governance language.

Common failure pattern: teams discuss AI risks in general terms but never translate them into attacker actions, system choke points, or mitigation coverage. That is how security programs stay theoretical.
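One way to avoid that failure pattern is to record the three layers as data and check coverage mechanically. The following is a minimal sketch, not an ATLAS tool or API: the class names, the `EX-T*` identifiers, and the technique-to-tactic pairings are all hypothetical placeholders and are not taken from the ATLAS matrix.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Technique:
    """How an adversary pursues a tactic (IDs here are placeholders, not ATLAS IDs)."""
    technique_id: str
    name: str
    tactic: str  # the attacker objective this technique serves


@dataclass
class Mitigation:
    """A defensive measure and the technique IDs it is meant to address."""
    name: str
    addresses: set[str] = field(default_factory=set)


def uncovered_techniques(techniques, mitigations):
    """Return the techniques that no recorded mitigation claims to address."""
    covered = set()
    for mitigation in mitigations:
        covered |= mitigation.addresses
    return [t for t in techniques if t.technique_id not in covered]


# Illustrative entries only; replace with techniques mapped from the real matrix.
techniques = [
    Technique("EX-T1", "Prompt injection via user input", "Initial access"),
    Technique("EX-T2", "Poisoned retrieval source", "Persistence"),
    Technique("EX-T3", "Tool invocation abuse", "Impact"),
]
mitigations = [
    Mitigation("Input filtering and prompt hardening", {"EX-T1"}),
    Mitigation("Allow-listed tool actions with approval", {"EX-T3"}),
]

gaps = uncovered_techniques(techniques, mitigations)
for t in gaps:
    print(f"No mitigation recorded for {t.technique_id} ({t.name}), tactic: {t.tactic}")
```

With the sample data, the only gap reported is the hypothetical `EX-T2` entry. The check only shows that a mitigation has been recorded against a technique, not that the mitigation is effective.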

Why Threat-Informed Defense Matters for AI-Enabled Systems

The Center for Threat-Informed Defense makes the current direction explicit. In its May 2025 Secure AI update, the Center says it applies a threat-informed approach to AI security that enables rapid exchange of new threat information, develops approaches to emulating those threats, and provides comprehensive and effective mitigation strategies. It also says the 2024 Secure AI program significantly expanded MITRE ATLAS and launched AI Incident Sharing, and that the 2025 program is focused on capturing evolving threats to AI-enabled systems and malicious use of AI in cyber. See the official Secure AI update.

| Security need | ATLAS contribution | Example output |
|---|---|---|
| Incident characterization | Provides a structured attack-language reference | Incident mapping and threat narrative |
| Mitigation prioritization | Connects likely techniques to defensive actions | AI security hardening backlog |
| Adversary emulation | Supports realistic exercise design | Red-team or purple-team scenarios |
| Detection engineering | Improves logging and signal design around likely AI abuse patterns | Detection requirements and alert logic |
| Security architecture review | Highlights where AI system surfaces may be abused | Architecture risk review and control map |

The key commercial point is that AI-enabled systems are not just "more software." They introduce new interactions among prompts, tools, models, retrieval sources, agents, and external actions. Threat-informed defense is the right mindset for that environment.

MITRE ATLAS vs NIST IR 8596 vs OWASP Agentic AI

These are complementary, not substitutes. ATLAS focuses on attacker tactics and techniques. NIST IR 8596 provides a cybersecurity profile structured around CSF 2.0 outcomes. OWASP's agentic AI work focuses on application and agent-specific risk patterns. Used together, they give you stronger AI security coverage than any one of them alone.

| Dimension | MITRE ATLAS | NIST IR 8596 | OWASP Agentic AI |
|---|---|---|---|
| Primary role | Attacker TTPs and mitigations | Cybersecurity profile for AI via CSF 2.0 | Application and agent-specific risk lens |
| Primary use | Threat-informed defense, emulation, incident analysis | Cyber governance and control structure | Agent-specific application security review |
| Commercial output | Attack-path awareness and mitigation prioritization | Structured AI security baseline | App-layer design and review discipline |

Real Incident and Investigation Value

The February 2026 MITRE ATLAS OpenClaw Investigation is the most useful current proof point because it shows ATLAS being applied to actual AI-first incident patterns rather than living only as taxonomy. MITRE says it analyzed OpenClaw incidents, mapped associated security threats to ATLAS Tactics, Techniques, and Procedures, identified corresponding mitigations, and highlighted high-risk attack chains. The Center for Threat-Informed Defense blog says the analysis also identified chokepoint techniques that adversaries rely on. See the investigation PDF and the supporting CTID article.

That matters because it shows how ATLAS can be used in a real review loop: incident signals come in, behaviors are mapped to tactics and techniques, defender choke points are identified, and mitigations are prioritized. That loop is the workflow a security team should aim to reproduce for its own AI-enabled systems.
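The review loop can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the method used in the MITRE investigation: it treats a "chokepoint" as a technique that appears in a large share of observed attack chains, and the incident names, `EX-T*` labels, mitigation names, and the `min_share` threshold are all hypothetical.

```python
from collections import Counter

# Each attack chain lists the technique labels observed in one incident.
# Labels are placeholders, not real ATLAS technique IDs.
attack_chains = {
    "incident-1": ["EX-T1", "EX-T3", "EX-T4"],
    "incident-2": ["EX-T2", "EX-T3"],
    "incident-3": ["EX-T1", "EX-T3", "EX-T5"],
}

# Hypothetical mapping from technique label to candidate mitigations.
mitigations_for = {
    "EX-T1": ["Input filtering"],
    "EX-T3": ["Tool allow-listing", "Human approval for external actions"],
}


def chokepoints(chains, min_share=0.6):
    """Return (technique, share) pairs for techniques seen in at least min_share of chains."""
    counts = Counter()
    for steps in chains.values():
        counts.update(set(steps))  # count each technique once per chain
    total = len(chains)
    ranked = [(t, n / total) for t, n in counts.items() if n / total >= min_share]
    # Highest share first; ties broken alphabetically for stable output.
    return sorted(ranked, key=lambda pair: (-pair[1], pair[0]))


def prioritized_mitigations(chains, mitigation_map, min_share=0.6):
    """List mitigations in chokepoint order, without duplicates."""
    ordered = []
    for technique, _share in chokepoints(chains, min_share):
        for mitigation in mitigation_map.get(technique, []):
            if mitigation not in ordered:
                ordered.append(mitigation)
    return ordered


for technique, share in chokepoints(attack_chains):
    print(f"{technique}: present in {share:.0%} of chains")
print(prioritized_mitigations(attack_chains, mitigations_for))
```

Frequency across chains is only one possible ranking signal. A real review would likely also weigh impact and how hard each technique is for an adversary to replace.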

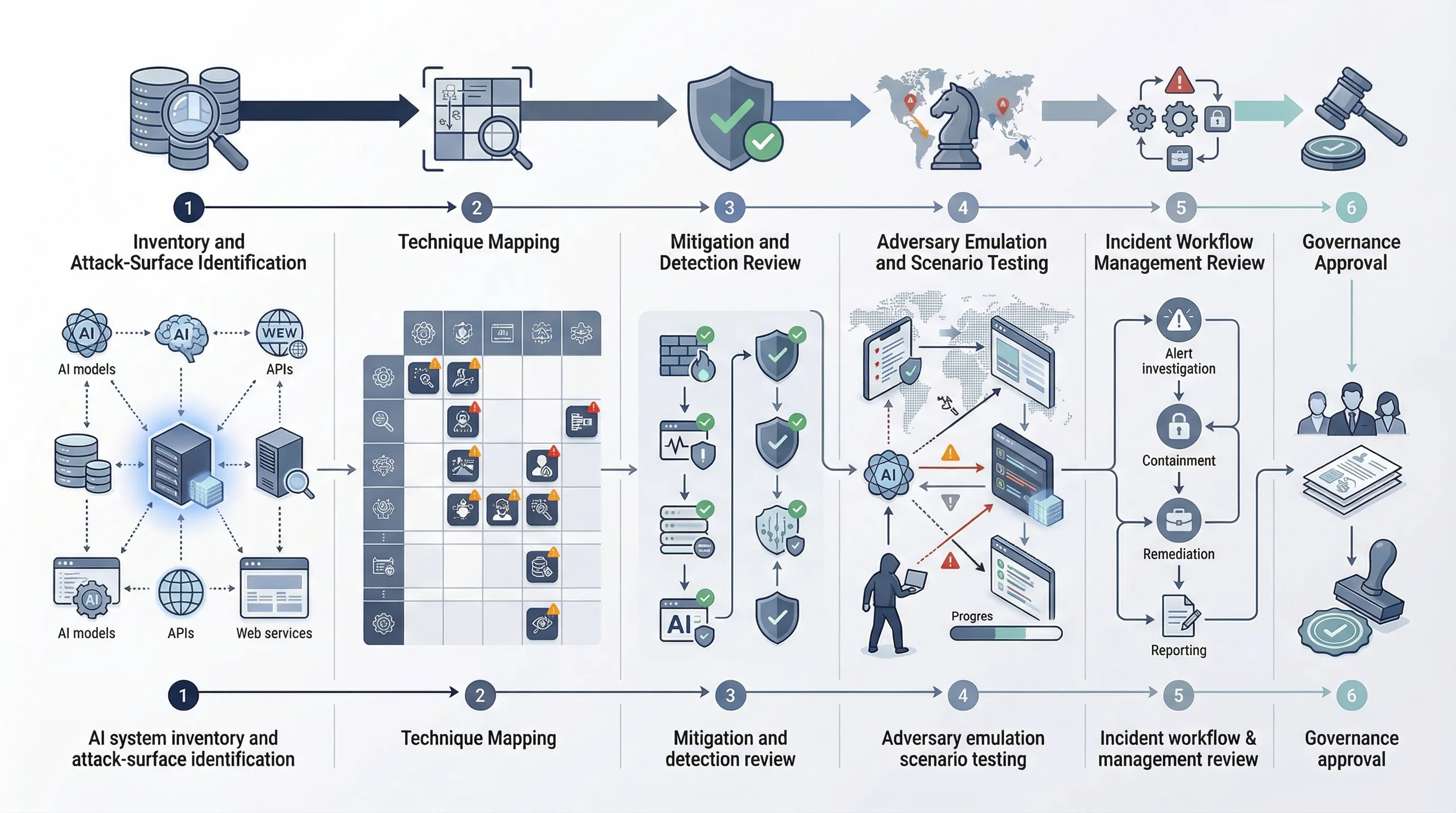

Minimum ATLAS-Based Review Workflow for SMEs

Most SMEs do not need a giant AI red-team program. They do need a threat-informed review baseline. That means taking AI-enabled systems, identifying exposed interfaces and tool use, mapping likely attacker techniques, reviewing mitigations and logging, and then validating the most important scenarios.

| Step | Action | Output |
|---|---|---|
| 1 | Inventory AI-enabled systems and exposed surfaces | Scoped review list |
| 2 | Classify autonomy, tools, external providers, and reachable actions | Attack-surface summary |
| 3 | Map likely ATLAS techniques to the system design | Technique exposure map |
| 4 | Review mitigations, gaps, and logging coverage | Mitigation gap register |
| 5 | Define incident workflow and test priority scenarios | Playbook and validation record |

This is enough to move an SME from vague AI security concern to a defensible threat-informed process.
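Steps 1 through 4 of the workflow above can be kept in a spreadsheet, but a small script makes the outputs reproducible. The sketch below assumes a hand-maintained mapping from exposed surfaces to technique labels. The surface names, the `EX-T*` labels, and the `AISystem` fields are hypothetical and would need to be replaced with entries chosen from the actual ATLAS matrix.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """One inventoried AI-enabled system (steps 1 and 2 of the workflow)."""
    name: str
    surfaces: set[str]   # exposed surfaces, e.g. chat input, retrieval, tool calls
    mitigated: set[str]  # technique labels with a mitigation in place
    logged: set[str]     # technique labels with logging coverage


# Hypothetical surface-to-technique mapping; labels are not real ATLAS IDs.
TECHNIQUES_BY_SURFACE = {
    "chat_input": {"EX-T1"},
    "retrieval": {"EX-T2"},
    "tool_calls": {"EX-T3"},
}


def exposure_map(system):
    """Step 3: technique labels reachable through the system's exposed surfaces."""
    exposed = set()
    for surface in system.surfaces:
        exposed |= TECHNIQUES_BY_SURFACE.get(surface, set())
    return sorted(exposed)


def gap_register(system):
    """Step 4: exposed techniques that lack a mitigation, logging, or both."""
    rows = []
    for technique in exposure_map(system):
        row = {
            "system": system.name,
            "technique": technique,
            "mitigated": technique in system.mitigated,
            "logged": technique in system.logged,
        }
        if not (row["mitigated"] and row["logged"]):
            rows.append(row)
    return rows


copilot = AISystem(
    name="support-copilot",
    surfaces={"chat_input", "tool_calls"},
    mitigated={"EX-T1"},
    logged={"EX-T1"},
)

register = gap_register(copilot)
for row in register:
    print(row)
```

In this example the register contains a single row for the hypothetical `EX-T3` label, because tool calls are exposed with neither a mitigation nor logging recorded. Step 5 (playbooks and scenario tests) is deliberately left out, since it depends on the organization's incident process.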

60-Day AI Threat-Informed Defense Sprint

You do not need a sprawling research project. You need a focused sprint that converts threat language into system review and mitigations.

- Weeks 1-2: identify AI-enabled systems, classify autonomy and tool use, and document exposed attack surfaces.

- Weeks 3-4: map likely ATLAS techniques, review mitigations, and define logging and monitoring priorities.

- Weeks 5-6: run emulation or scenario tests, refine incident playbooks, and approve the AI security baseline.

The most common reason these sprints fail is predictable: teams treat AI security as ordinary application security and ignore the distinct prompt, tool, provider, and autonomy attack paths that ATLAS is designed to surface.

Need the controls, not just the threat taxonomy? MITRE ATLAS is strongest when mapped to real AI security controls, ownership, and evidence. That is where the AI Controls Toolkit (ACT) can support structured implementation.
