Why Generative AI Needs a Separate Profile

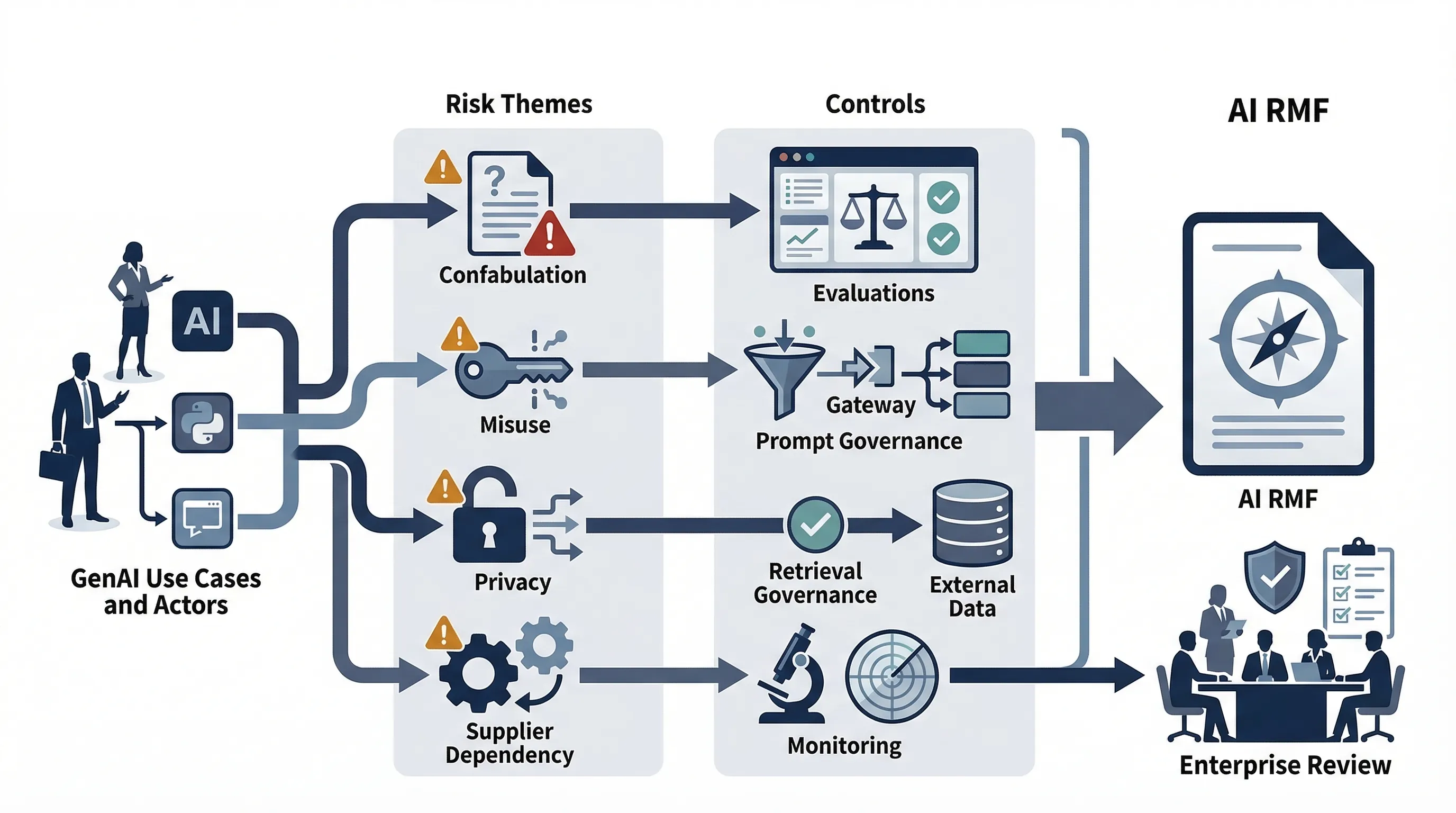

Generative AI changes the control environment because the system does not merely classify or rank. It generates content, responds probabilistically, scales to broad use, and often depends on opaque upstream models and fast-changing providers. That produces a different governance problem from traditional ML or analytics deployments.

NIST titles AI 600-1 "Artificial Intelligence Risk Management Framework: Generative Artificial Intelligence Profile" and describes it as a cross-sectoral profile of and companion resource for AI RMF 1.0 for Generative AI. That wording matters. The profile does not replace AI RMF. It extends AI RMF where generative AI creates new or sharper risk patterns. See the official publication page.

NIST published AI 600-1 on July 26, 2024. The same NIST publication page also notes the report number, the DOI, and the fact that it is a NIST Trustworthy and Responsible AI publication. This is guidance, not a certifiable standard, but it is currently the strongest official NIST companion document for organizations deploying LLMs, copilots, image generators, RAG systems, and similar GenAI use cases.

Official source links: NIST AI 600-1 publication page · NIST AI RMF hub · official PDF · NIST AI 100-4 publication page

Commercially useful framing: treat AI 600-1 as the GenAI control overlay on top of AI RMF. Use it to shape policy, risk registers, vendor governance, testing, and monitoring before customers or internal audit start asking what makes your GenAI controls different from your general AI controls.

How NIST 600-1 Extends AI RMF

The AI RMF hub says the Generative AI Profile is designed to help organizations identify the unique risks associated with GenAI and proposes actions to manage those risks in ways aligned with organizational goals and priorities. That is the cleanest official summary of its purpose. See the NIST AI RMF hub.

The important point is structural. AI RMF still gives you the main frame: GOVERN, MAP, MEASURE, MANAGE. AI 600-1 extends those functions into a GenAI-specific control environment. It tells you where generative systems create additional concerns that a generic AI governance baseline will miss or underweight.

| AI RMF function | GenAI-specific extension | Example artifact |
|---|---|---|
| Govern | Define GenAI-specific policy, ownership, acceptable use, vendor governance, and escalation | GenAI policy, RACI, model approval workflow, usage guardrails |
| Map | Map use cases, actors, prompt flows, retrieval layers, and external dependencies | System inventory, architecture map, dependency register, actor matrix |
| Measure | Evaluate confabulation, harmful outputs, privacy leakage, prompt misuse, and other GenAI failure modes | Evaluation results, red-team findings, testing records, monitoring thresholds |
| Manage | Implement controls, human oversight, release gates, monitoring, and corrective action for GenAI systems | Approval criteria, incident playbook, rollback plan, corrective action log |

This matters because organizations often say they "use AI RMF" while doing nothing GenAI-specific beyond a generic policy note. AI 600-1 closes that gap.
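
One way to make that gap visible is to tag each governance artifact with the AI RMF function it supports and flag functions that have nothing GenAI-specific behind them. The sketch below is illustrative only: the four function names come from AI RMF, while `GenAIArtifact`, `functions_without_genai_evidence`, and the sample artifacts are hypothetical names invented for this example.

```python
from dataclasses import dataclass

# The four function names come from AI RMF; everything else is hypothetical.
RMF_FUNCTIONS = ("GOVERN", "MAP", "MEASURE", "MANAGE")


@dataclass
class GenAIArtifact:
    name: str
    rmf_function: str
    genai_specific: bool  # False for a generic AI policy note


def functions_without_genai_evidence(artifacts):
    """Return the AI RMF functions that have no GenAI-specific artifact."""
    covered = {a.rmf_function for a in artifacts if a.genai_specific}
    return [f for f in RMF_FUNCTIONS if f not in covered]


# Made-up inventory: a generic policy note does not count as GenAI coverage.
artifacts = [
    GenAIArtifact("Generic AI policy note", "GOVERN", genai_specific=False),
    GenAIArtifact("Architecture map with retrieval layers", "MAP", genai_specific=True),
    GenAIArtifact("Red-team findings", "MEASURE", genai_specific=True),
]

gaps = functions_without_genai_evidence(artifacts)
print(gaps)
```

With this sample inventory, GOVERN and MANAGE are reported as gaps, which mirrors the situation of an organization that "uses AI RMF" on paper but has no GenAI-specific policy or release controls.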

The Core Generative AI Risk Themes

GenAI changes the risk surface. In practice, the risk themes most organizations need to govern are confabulation, harmful bias, privacy and data exposure, intellectual property and copyright issues, prompt or system misuse, supply-chain dependency, human overreliance, and misconfiguration. Those themes are consistent with the role the profile plays in extending AI RMF for GenAI.

| Risk theme | Business impact | Control response |
|---|---|---|
| Confabulation | False outputs, bad decisions, customer harm, reputational damage | Evaluation, source-grounding rules, human review thresholds, user transparency |
| Harmful bias | Discrimination risk, unfair treatment, trust loss | Bias testing, use-case screening, escalation and remediation workflow |
| Privacy and data exposure | Leakage of confidential or personal information | Data-flow restrictions, prompt handling rules, vendor review, access controls |
| IP and copyright risk | Content disputes, legal exposure, blocked distribution | Use-case rules, rights review, output governance, supplier diligence |
| Prompt / system misuse | Unsafe outputs, jailbreaks, policy circumvention | Prompt governance, guardrails, testing, monitoring, response plan |
| Supplier and value-chain dependency | Upstream changes can alter risk without warning | Vendor governance, change control, fallback plan, regional/legal review |
| Human overreliance | Uncritical use of low-quality outputs | Training, review thresholds, restricted use cases, oversight rules |

The mistake is focusing only on hallucination. That is one risk theme, not the whole profile.

Common failure pattern: teams write "hallucination mitigation" into the GenAI policy and ignore supplier governance, prompt ownership, change management, and retrieval-layer risk. That is not a GenAI governance program. It is a partial memo.
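
The "partial memo" pattern is easy to detect if the risk register is kept as data. The following is a minimal sketch, assuming a register that maps each risk theme to its assigned control responses. The theme keys follow the seven rows of the risk table; `RISK_THEMES`, `uncovered_themes`, and the sample register are hypothetical and not part of AI 600-1.

```python
# Theme keys follow the risk table in this section; the register format is
# an assumption made for illustration.
RISK_THEMES = [
    "confabulation",
    "harmful_bias",
    "privacy_and_data_exposure",
    "ip_and_copyright",
    "prompt_or_system_misuse",
    "supplier_dependency",
    "human_overreliance",
]


def uncovered_themes(register):
    """Return risk themes that have no control response in the register."""
    return [theme for theme in RISK_THEMES if not register.get(theme)]


# A register that only addresses hallucination, as in the failure pattern.
partial_memo = {"confabulation": ["evaluation", "human review thresholds"]}

missing = uncovered_themes(partial_memo)
print(f"{len(missing)} of {len(RISK_THEMES)} themes have no control response")
```

A check like this does not say whether the listed controls are any good. It only shows which themes nobody has written a control for.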

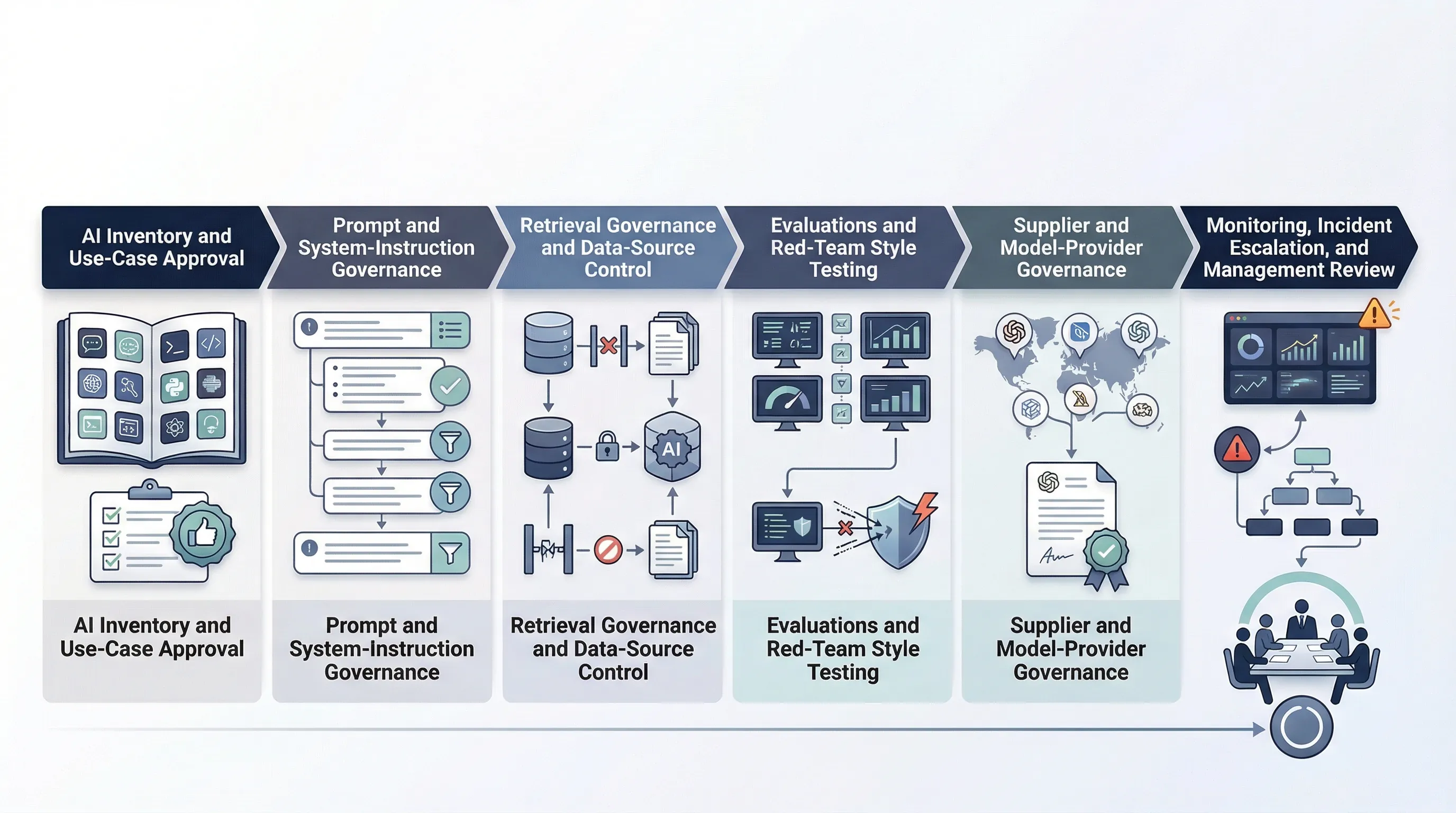

Minimum Controls for LLM, RAG, and Copilot Deployments

Most organizations do not need a research-grade assurance stack on day one. They do need a minimum GenAI governance baseline. That means use-case approval, prompt and system-instruction governance, retrieval governance for RAG, testing and evaluations, user transparency, monitoring, incident escalation, supplier governance, and human-review thresholds.

| Minimum control | Why it matters | Evidence |
|---|---|---|
| Use-case approval | Stops uncontrolled spread of GenAI into sensitive workflows | Use-case register, approval note, owner list |
| Prompt and system governance | Defines who can change core behavior and instructions | Prompt library controls, approval workflow, version history |
| Retrieval governance | RAG outputs depend on the quality and permissions of the retrieval layer | Data-source inventory, access review, retrieval policy |
| Testing and evaluations | Without evaluations, risk language is mostly guesswork | Test reports, red-team results, release checklist |
| User transparency | Reduces hidden reliance and supports trust | Disclosure language, UI screenshots, policy references |
| Monitoring and escalation | Captures failures and misuse after deployment | Monitoring dashboard, incident playbook, issue log |
| Supplier and model governance | Third-party model changes can change risk without warning | Vendor assessment, contract notes, change-control log |

That baseline is enough to make a GenAI program real instead of aspirational.
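
The difference between "real" and "aspirational" can be tested by asking whether each baseline control has at least one evidence artifact on file. This is a rough sketch under that assumption. The control keys mirror the baseline table; `MINIMUM_CONTROLS`, `controls_missing_evidence`, and the sample evidence entries are hypothetical.

```python
# Control keys mirror the baseline table; the evidence entries are made up.
MINIMUM_CONTROLS = (
    "use_case_approval",
    "prompt_and_system_governance",
    "retrieval_governance",
    "testing_and_evaluations",
    "user_transparency",
    "monitoring_and_escalation",
    "supplier_and_model_governance",
)


def controls_missing_evidence(evidence_by_control):
    """Return baseline controls with no evidence artifact on file."""
    return [c for c in MINIMUM_CONTROLS if not evidence_by_control.get(c)]


evidence = {
    "use_case_approval": ["use-case register", "approval note"],
    "testing_and_evaluations": ["release checklist"],
    "user_transparency": [],  # named in policy, but nothing to show for it
}

missing_evidence = controls_missing_evidence(evidence)
baseline_met = not missing_evidence
print(baseline_met, missing_evidence)
```

An empty evidence list counts as missing on purpose: a control that exists only as a sentence in a policy document is treated the same as a control that was never defined.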

NIST AI 600-1 vs ISO 42001 vs EU AI Act

These are complementary layers, not substitutes. AI 600-1 is a GenAI-specific guidance profile. ISO 42001 is an auditable management-system standard. The EU AI Act is binding law. If you force one to do the work of the others, the program becomes distorted.

| Dimension | NIST AI 600-1 | ISO 42001 | EU AI Act |
|---|---|---|---|
| Nature | Voluntary GenAI guidance profile | Certifiable management-system standard | Binding regulation |
| Primary use | GenAI-specific risk and control overlay | Institutionalize governance, ownership, review, continual improvement | Meet legal obligations and market-access requirements |
| Main audience | GenAI governance, product, security, risk teams | Organization-wide governance owners | Providers, deployers, importers, distributors |

GenAI Vendor and API Governance

This is where many real failures live. Most organizations do not build frontier models. They buy APIs, hosted models, copilots, and wrappers. That means upstream changes, region handling, data retention, logging, fallback logic, and contract language matter more than teams want to admit.

A workable vendor-governance model answers six questions. Which provider is in use? What data goes where? What retention or training rights exist? What logging is available? What jurisdictional constraints apply? What happens when the provider changes the model, safety behavior, or terms? If you cannot answer those questions, your GenAI program is dependent on upstream risk you do not control and do not even monitor.
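
The six questions translate naturally into a per-provider record with one field per question. The sketch below assumes that shape. `VendorRecord`, its field names, `unanswered`, and the provider "ExampleLLM Inc." are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class VendorRecord:
    """One field per vendor-governance question (field names are illustrative)."""
    provider: Optional[str] = None
    data_flows: Optional[str] = None
    retention_and_training_rights: Optional[str] = None
    logging_available: Optional[str] = None
    jurisdictional_constraints: Optional[str] = None
    change_handling: Optional[str] = None


def unanswered(record):
    """Return the names of questions that have no recorded answer."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Hypothetical provider with only the first two questions answered.
record = VendorRecord(
    provider="ExampleLLM Inc.",
    data_flows="Prompts and retrieved snippets are sent to a hosted API",
)

open_questions = unanswered(record)
print(open_questions)
```

Any record that returns a non-empty list marks a dependency the organization relies on but cannot yet explain, which is exactly the unmonitored upstream risk described above.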

This is the part many founders underweight because the product "works." That is irrelevant. The governance issue is whether your organization can explain and evidence the dependency chain when challenged.

Synthetic Content, Provenance, and Related Controls

AI 600-1 is not enough on its own when your GenAI deployment generates or distributes synthetic media at scale. That is where NIST AI 100-4 becomes the relevant companion. AI 600-1 helps you govern the GenAI system. AI 100-4 helps you govern the provenance, labeling, detection, and review implications of the synthetic content those systems produce.

NIST AI 100-4's publication page says it covers authenticating content and tracking provenance, labeling synthetic content such as watermarking, detecting synthetic content, and auditing and maintaining synthetic-content controls. That is the right companion layer when GenAI output itself becomes a governance object. See the NIST AI 100-4 publication page.

90-Day GenAI Governance Sprint

You do not need a sprawling GenAI transformation program to get started. You need a disciplined sprint that produces ownership, controls, and evidence.

- Weeks 1-2: inventory every LLM, copilot, RAG system, agent, image generator, and third-party provider in use.

- Weeks 3-4: define use-case classes, assign policy owners, and lock prompt/system instruction governance.

- Weeks 5-7: implement evaluations, retrieval governance, user transparency, and supplier review controls.

- Weeks 8-10: test monitoring, incident escalation, fallback plans, and change-management procedures.

- Weeks 11-12: run management review, close control gaps, and approve the GenAI baseline.

If you cannot complete that in 90 days, the blocker is usually not the model. It is the absence of accountable ownership.
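
Because the blocker is usually ownership, it can help to hold the sprint plan as data with a named owner per phase and to check it for gaps. This is a minimal sketch: the week ranges and goals paraphrase the list above, while the `owner` field, the `SPRINT` structure, and both helper functions are assumptions added for illustration.

```python
# (start_week, end_week, goal, owner); owner is None until someone is assigned.
SPRINT = [
    (1, 2, "Inventory GenAI systems and third-party providers", "CISO office"),
    (3, 4, "Define use-case classes, policy owners, prompt governance", None),
    (5, 7, "Implement evaluations, retrieval governance, transparency, supplier review", None),
    (8, 10, "Test monitoring, escalation, fallback, change management", None),
    (11, 12, "Management review, close gaps, approve baseline", None),
]


def covers_all_weeks(plan, total_weeks=12):
    """True if every week from 1 to total_weeks falls in exactly one phase."""
    covered = sorted(w for start, end, _, _ in plan for w in range(start, end + 1))
    return covered == list(range(1, total_weeks + 1))


def phases_without_owner(plan):
    """Return the goals of phases that have no accountable owner."""
    return [goal for _, _, goal, owner in plan if owner is None]


plan_is_complete = covers_all_weeks(SPRINT)
unowned = phases_without_owner(SPRINT)
print(plan_is_complete, len(unowned))
```

In this made-up plan the calendar is fully covered but four of the five phases have no owner, which is the situation the paragraph above warns about.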

Need the templates, not just the concepts? NIST AI 600-1 becomes useful when it is translated into policy language, decision trees, supplier controls, evaluations, and evidence. That is what AI Controls Professional is built to support.
