Why NIST Created a Cyber Profile for AI

Traditional cybersecurity frameworks were built for networks, identities, assets, applications, and data flows. AI systems complicate all of those. They introduce model components, training and inference pipelines, third-party model providers, prompt and retrieval surfaces, and failure modes that do not fit neatly into standard application-security language. NIST IR 8596 exists because teams needed a cleaner way to express AI system security through a CSF 2.0 structure.

NIST describes IR 8596 as the Cybersecurity Framework Profile for Artificial Intelligence (Cyber AI Profile). The draft says it will provide guidelines for managing cybersecurity risk related to AI systems and for identifying opportunities to use AI to enhance cybersecurity capabilities. It is organized around CSF 2.0 outcomes: functions, categories, and subcategories. That makes it immediately useful for security teams that already use the NIST Cybersecurity Framework but do not yet have a practical AI overlay.

The key limitation is maturity. NIST labels IR 8596 an Initial Preliminary Draft, published on December 16, 2025, with the note that comments received on this preliminary draft will inform a later initial public draft. That means you should not present it as settled doctrine. But draft does not mean useless. In fact, for a fast-moving topic like AI security, an official draft can be more valuable than polished generic commentary because it shows the direction NIST is taking. NIST states that status directly on the draft landing page.

Official source links: NIST IR 8596 draft page · draft PDF

Commercially useful framing: treat IR 8596 as a strong design signal for AI security governance, not as a certifiable standard. Use it to organize controls, evidence, and security reviews now, then refine when NIST publishes the next version.

How the Profile Uses CSF 2.0

IR 8596 is useful because it does not invent an entirely new structure. It rides on the CSF 2.0 model that many security teams already know. That lowers adoption friction. The profile takes AI-related cybersecurity concerns and organizes them through the familiar CSF functions, categories, and subcategories rather than forcing teams into a separate "AI-only" security universe. NIST's draft page and abstract are explicit on this point; see the official draft abstract.

NIST also highlights three focus areas in the draft: Securing AI System Components, Conducting AI-Enabled Cyber Defense, and Thwarting AI-Enabled Cyber Attacks. That matters because it makes clear the profile is doing more than one job at once. It addresses AI as something you must secure, AI as something you may use for defense, and AI as something adversaries may use against you. Those are related but not identical control problems. Those focus areas are listed on the NIST publication page.

| CSF function | AI-relevant cyber focus | Example evidence |
|---|---|---|
| Govern | AI security policy, roles, supplier governance, decision rights | AI security policy, RACI, vendor assessment, review cadence |
| Identify | AI asset inventory, model dependencies, system boundaries, exposure mapping | System inventory, architecture diagrams, dependency register |
| Protect | Access control, environment isolation, data protections, secure configuration | IAM rules, segmentation design, key management, hardening baseline |
| Detect | Monitoring model misuse, anomalous behavior, abuse patterns, tampering signals | Alert rules, logs, abuse dashboards, SOC runbooks |
| Respond / Recover | AI-specific incident handling, containment, rollback, restoration | Incident playbooks, rollback procedures, post-incident review |

This structure is the main reason IR 8596 is commercially useful even as a draft. It gives security teams a language they can already operationalize.
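To make the function-to-evidence mapping concrete, here is a minimal sketch that turns it into a gap check. The function names follow the table above; the evidence labels and the `evidence_gaps` helper are illustrative assumptions, not identifiers defined by NIST or by the draft.

```python
# Expected evidence per CSF function. Labels are illustrative placeholders
# taken loosely from the table above, not NIST-defined identifiers.
CSF_EVIDENCE = {
    "Govern": ["AI security policy", "RACI", "vendor assessment"],
    "Identify": ["system inventory", "architecture diagram", "dependency register"],
    "Protect": ["IAM rules", "segmentation design", "hardening baseline"],
    "Detect": ["alert rules", "logs", "SOC runbooks"],
    "Respond / Recover": ["incident playbook", "rollback procedure"],
}


def evidence_gaps(collected: set[str]) -> dict[str, list[str]]:
    """Return, per CSF function, the expected evidence items not yet collected."""
    gaps = {}
    for function, expected in CSF_EVIDENCE.items():
        missing = [item for item in expected if item not in collected]
        if missing:
            gaps[function] = missing
    return gaps


# Hypothetical state of an evidence folder partway through a review.
collected = {"AI security policy", "RACI", "system inventory", "logs", "alert rules"}
for function, missing in evidence_gaps(collected).items():
    print(f"{function}: missing {', '.join(missing)}")
```

A flat mapping like this is deliberately crude. Its only job is to show which functions have no evidence behind them yet, which is usually the first thing a reviewer asks.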

AI as a System to Protect

The first control problem is obvious but still widely mishandled: AI systems are assets that need protection. That means protecting not only the application wrapper but also models, inference services, retrieval layers, prompts, connectors, pipelines, and third-party dependencies. If your threat model stops at the web front end, it is incomplete.

For most SMEs, the main blind spots are not exotic model attacks. They are ordinary governance failures in a new context: unclear system boundaries, weak access control around model endpoints, shared credentials, untracked vendor changes, and no logging strong enough to reconstruct what happened when outputs go wrong. AI system security usually fails through control ambiguity before it fails through cutting-edge attack research.

This is where IR 8596 aligns cleanly with the practical side of AI security. Securing AI system components means you need a real asset model. Which providers are in the stack? Which model is running? What retrieval source is attached? Who can change prompts, policies, or system instructions? Which environment is production? Can you roll back if a model update breaks security or trust assumptions? If you cannot answer those questions, you do not yet have a defensible AI security posture.
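One way to keep those questions from staying rhetorical is to encode them in the inventory record itself. The sketch below is a hypothetical record shape, not a schema from IR 8596; every field name, and the `open_questions` helper, is an assumption made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AIAssetRecord:
    """One inventory entry; all field names are illustrative assumptions."""
    name: str
    provider: Optional[str] = None             # who supplies the model
    model_version: Optional[str] = None        # which model is running
    retrieval_sources: Optional[list[str]] = None  # None = unknown, [] = none attached
    prompt_editors: list[str] = field(default_factory=list)  # who can change prompts
    production_environment: Optional[str] = None
    rollback_tested: bool = False

    def open_questions(self) -> list[str]:
        """List the asset-model questions this record cannot yet answer."""
        checks = [
            (self.provider, "Which provider is in the stack?"),
            (self.model_version, "Which model is running?"),
            (self.retrieval_sources is not None, "What retrieval source is attached?"),
            (self.prompt_editors, "Who can change prompts or system instructions?"),
            (self.production_environment, "Which environment is production?"),
            (self.rollback_tested, "Can you roll back a bad model update?"),
        ]
        return [question for answered, question in checks if not answered]


# Hypothetical entry: provider and model are known, ownership and rollback are not.
record = AIAssetRecord(name="support-chatbot", provider="example-provider",
                       model_version="2025-01", retrieval_sources=[])
for question in record.open_questions():
    print("UNANSWERED:", question)
```

The distinction between `None` (unknown) and an empty list (known to have no retrieval source) is a deliberate choice here: an inventory that cannot tell "nothing attached" from "nobody checked" hides exactly the ambiguity described above.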

Common failure pattern: teams secure the app perimeter and ignore the model supply chain, inference surface, and upstream provider changes. That is ordinary cyber debt with AI branding.

AI as a Tool for Cybersecurity

The second control problem is different: organizations increasingly want to use AI inside cybersecurity functions. NIST explicitly says the Cyber AI Profile addresses opportunities to use AI to enhance cybersecurity capabilities. That is useful, but it creates a separate governance challenge. Defensive AI can improve speed and coverage while also introducing false confidence, weak explainability, brittle automation, or overreliance by analysts.

| Cyber use case | Benefit | Risk | Governance control |
|---|---|---|---|
| SOC triage assistance | Faster alert prioritization | False confidence, missed edge cases, analyst overreliance | Human review thresholds, sampling, override rights, quality monitoring |
| Threat analysis support | Faster synthesis of large evidence sets | Fabricated analysis, weak provenance, hidden model bias | Source validation rules, analyst sign-off, prompt governance |
| Automated response recommendation | Speed in containment decisions | Unsafe action suggestions, context loss | Approval gates, bounded actions, rollback controls |
| Detection engineering support | Faster drafting of rules and playbooks | Poor-quality controls, hidden assumptions | Peer review, testing pipeline, version control, post-deployment monitoring |

The rule is simple: treat AI-enabled defense as a governed control layer, not as a magic shortcut. Human oversight, bounded autonomy, and evidence of testing remain necessary.
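As a rough illustration of "approval gates" and "bounded actions", the sketch below routes an AI-generated response recommendation through a simple gate. The action allow-list, the confidence threshold, and the `Recommendation` shape are all hypothetical; a real deployment would set them from its own risk appetite and tooling.

```python
from dataclasses import dataclass

# Hypothetical allow-list: the only actions the AI layer may ever propose.
BOUNDED_ACTIONS = {"isolate_host", "disable_account", "block_ip"}
# Assumed threshold above which a reversible action may skip human approval.
AUTO_APPROVE_CONFIDENCE = 0.9


@dataclass(frozen=True)
class Recommendation:
    action: str
    target: str
    confidence: float   # model-reported score in [0, 1]; an assumed input
    reversible: bool    # whether a rollback path exists for this action


def gate(rec: Recommendation) -> str:
    """Decide how a recommendation is handled: reject, auto-approve, or escalate."""
    if rec.action not in BOUNDED_ACTIONS:
        return "reject"                      # outside bounded autonomy
    if rec.reversible and rec.confidence >= AUTO_APPROVE_CONFIDENCE:
        return "auto_approve"                # low-regret action, still logged
    return "needs_human_approval"            # default path: analyst decides


print(gate(Recommendation("block_ip", "203.0.113.7", 0.95, reversible=True)))
print(gate(Recommendation("disable_account", "j.doe", 0.95, reversible=False)))
print(gate(Recommendation("wipe_disk", "srv-01", 0.99, reversible=False)))
```

The design choice worth noting is that human approval is the default and automation is the exception. Whether any action should be auto-approved at all is a policy decision, not something the code can settle.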

Cyber AI Profile vs NIST AI RMF vs ISO 42001

Do not confuse these frameworks. IR 8596 is a cybersecurity profile. NIST AI RMF is broader and more sociotechnical. ISO 42001 is a management-system standard for organizational AI governance. They overlap, but they do different jobs. Used properly, they complement each other.

| Dimension | IR 8596 | NIST AI RMF | ISO 42001 |
|---|---|---|---|
| Primary purpose | AI cybersecurity profile using CSF 2.0 | Broad AI risk governance framework | Auditable AI management system |
| Main audience | Security teams, architects, cyber governance owners | Cross-functional AI governance teams | Management-system and compliance owners |
| Primary use | Operationalize AI system security and AI-enabled cyber controls | Structure AI risk identification and treatment | Institutionalize policy, ownership, review, and continual improvement |
| Current maturity | Preliminary draft | Mature guidance framework | Published certifiable standard |

The sane implementation model is to use ISO 42001 for the management system, NIST AI RMF for broad risk framing, and IR 8596 as the cyber overlay for AI system security. That is materially stronger than trying to force one framework to do all three jobs.

Minimum Security Baseline for SMEs Using AI

Most SMEs do not need an advanced research-grade AI red-team program on day one. They do need a minimum security baseline that closes the obvious holes. If that baseline does not exist, every discussion about advanced AI cyber risk is mostly theater.

| Minimum control | Why it matters | Evidence |
|---|---|---|
| AI asset inventory | You cannot secure components you have not identified | Inventory register, architecture diagram, dependency map |
| Environment isolation | Limits blast radius and accidental cross-environment exposure | Segmentation design, environment access matrix |
| Model/provider governance | Third-party changes can alter risk without warning | Vendor assessment, change log, contract controls |
| Credential and access controls | Shared or weak access is still the fastest route to compromise | IAM configuration, privileged access review, secret management policy |
| Logging and monitoring | Without logs, incident response is guesswork | Audit trails, alert rules, monitoring dashboard, retention settings |
| Incident response and rollback | AI failures can spread quickly through integrated workflows | Playbook, escalation criteria, rollback procedure, post-incident review |

That baseline is not glamorous, but it is what makes every higher-order AI security promise believable.
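For the logging control in particular, a small sketch can show what "enough to reconstruct what happened" might look like for a single inference call. The field names and the `audit_record` helper are assumptions for illustration; hashing the prompt and output rather than storing them verbatim is one possible trade-off, not a requirement from the draft.

```python
import hashlib
import json
from datetime import datetime, timezone


def _sha256(text: str) -> str:
    """Hex digest used to fingerprint content without storing it in the log."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(caller: str, model_id: str, prompt: str, output: str,
                 retrieval_sources: list[str]) -> str:
    """Build one JSON audit line for an inference call (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "caller": caller,                        # who invoked the model
        "model_id": model_id,                    # which model and version answered
        "retrieval_sources": sorted(retrieval_sources),
        "prompt_sha256": _sha256(prompt),        # fingerprint, not the raw prompt
        "output_sha256": _sha256(output),
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical call; in practice the line would go to an append-only log store.
print(audit_record("svc-helpdesk", "example-model-2025-01",
                   "Summarise ticket 123", "Ticket 123 concerns ...", ["kb-articles"]))
```

Hashes let you prove later that a given prompt or output matches the logged call, but they do not let you read it back. Teams that need full reconstruction would store the content itself under appropriate access and retention controls.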

60-Day AI Security Uplift Sprint

You do not need a long AI security transformation project to get started. You need a short sprint that creates structure and evidence.

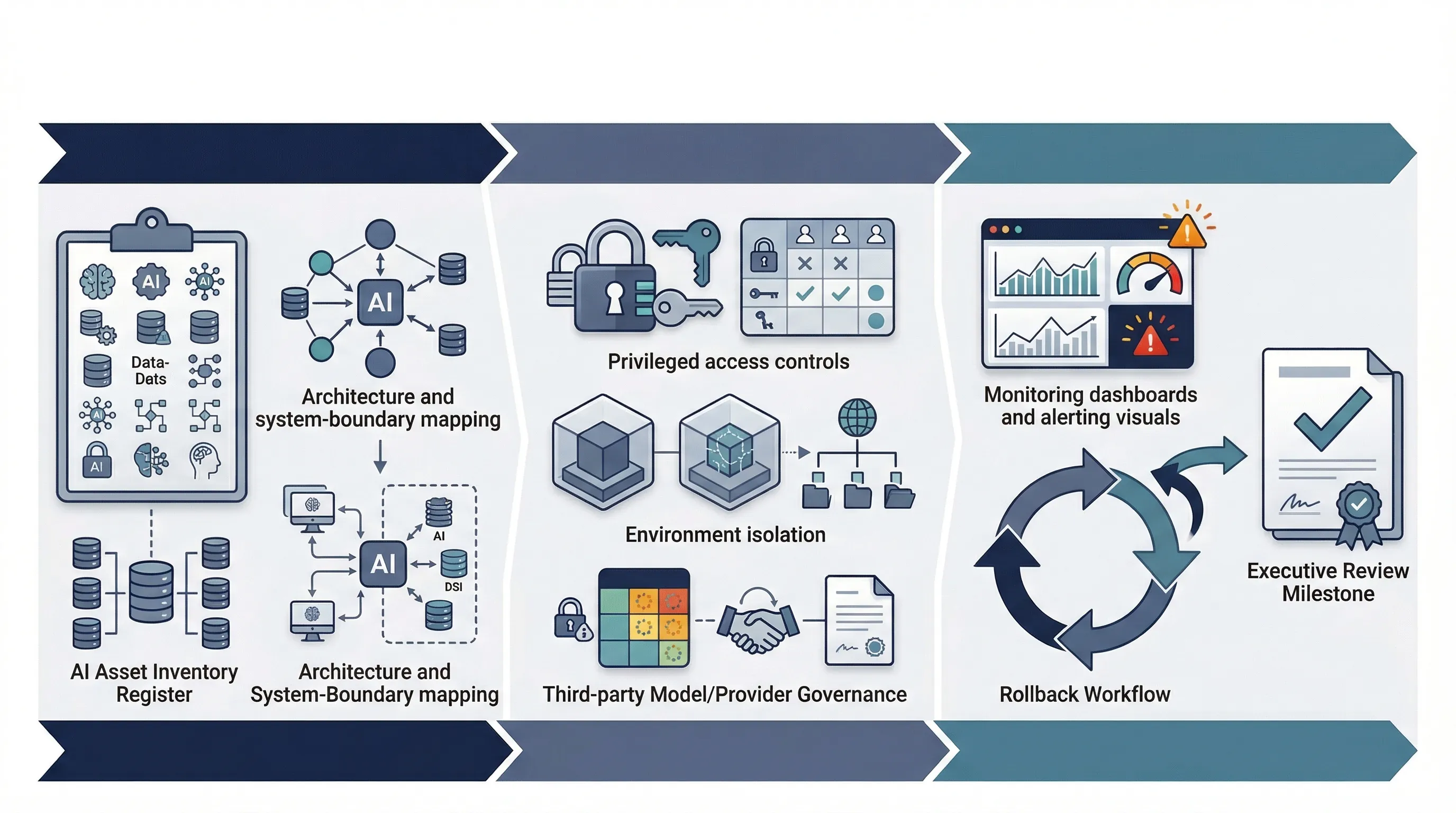

- Weeks 1-2: inventory AI systems, map architecture, identify provider dependencies, and define system boundaries.

- Weeks 3-4: tighten access controls, isolate environments, formalize supplier governance, and define monitoring requirements.

- Weeks 5-6: test incident response, define rollback plans, validate logging quality, and run a management review on the baseline.

If you cannot complete that in 60 days, the blocker is usually not technical sophistication. It is ownership ambiguity. No one owns the inventory. No one owns vendor changes. No one owns rollback. Solve that first.
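A quick way to surface ownership ambiguity is to list the sprint tasks and check which ones have no named owner. The sketch below copies the task names from the sprint plan above; the `owners` mapping and the role names in it are hypothetical.

```python
# Sprint tasks as listed in the plan above, grouped by phase.
SPRINT_TASKS = {
    "Weeks 1-2": ["inventory AI systems", "map architecture",
                  "identify provider dependencies", "define system boundaries"],
    "Weeks 3-4": ["tighten access controls", "isolate environments",
                  "formalize supplier governance", "define monitoring requirements"],
    "Weeks 5-6": ["test incident response", "define rollback plans",
                  "validate logging quality", "run management review"],
}


def unowned_tasks(owners: dict[str, str]) -> list[tuple[str, str]]:
    """Return (phase, task) pairs that have no named owner."""
    return [
        (phase, task)
        for phase, tasks in SPRINT_TASKS.items()
        for task in tasks
        if not owners.get(task, "").strip()
    ]


# Hypothetical assignments; a blank string counts as unowned.
owners = {
    "inventory AI systems": "IT lead",
    "map architecture": "IT lead",
    "tighten access controls": "security lead",
    "define rollback plans": "",
}
for phase, task in unowned_tasks(owners):
    print(f"{phase}: no owner for '{task}'")
```

Running this check before the sprint starts, rather than at the end, tends to be more useful: any task that prints here is a task that will likely stall.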

Need the controls and templates? IR 8596 becomes useful when you connect it to inventory, evidence, and operating procedures. That is what AI Controls Professional is designed to support.
