What the OECD AI Principles Are

The OECD AI Principles are one of the few global AI governance references that still matter despite the rapid expansion of harder law and sectoral rules. The OECD says they are the first intergovernmental standard on AI. It also says they promote innovative and trustworthy AI that respects human rights and democratic values. The Principles were adopted in 2019 and updated in 2024. See the OECD AI Principles page.

That matters because the Principles sit at a different level from the EU AI Act or ISO 42001. They are not binding law. They are not a certification scheme. They are a values-based and recommendations-based governance framework designed to support international cooperation and interoperability. In commercial terms, that means they are useful for positioning, policy architecture, board communication, and cross-jurisdiction governance design. They are not enough on their own to prove legal compliance.

The common mistake is to dismiss them as "too high level" and move on. That is lazy. High-level principles become valuable when you need a durable governance baseline that can survive changes in specific laws and technical patterns. The OECD AI Principles do that job better than most generic responsible-AI manifestos because they are internationally recognized and explicitly designed to be practical and flexible. The OECD uses that language directly on the principles page. Official source.

Official source links: OECD AI Principles · OECD Due Diligence Guidance for Responsible AI

Commercially useful framing: use the OECD Principles as a governance baseline and narrative layer, then connect them to ISO 42001, NIST AI RMF, and jurisdiction-specific laws for actual control design.

The Core OECD Logic of Trustworthy AI

The OECD's logic is straightforward: AI should be innovative and useful, but it must also be trustworthy and aligned with human rights and democratic values. On the official page, the OECD explains that the Principles are composed of five values-based principles and five recommendations that provide practical and flexible guidance for policymakers and AI actors. That is the right way to read them. They are not abstract ethics poetry. They are a governance scaffold. Official source.

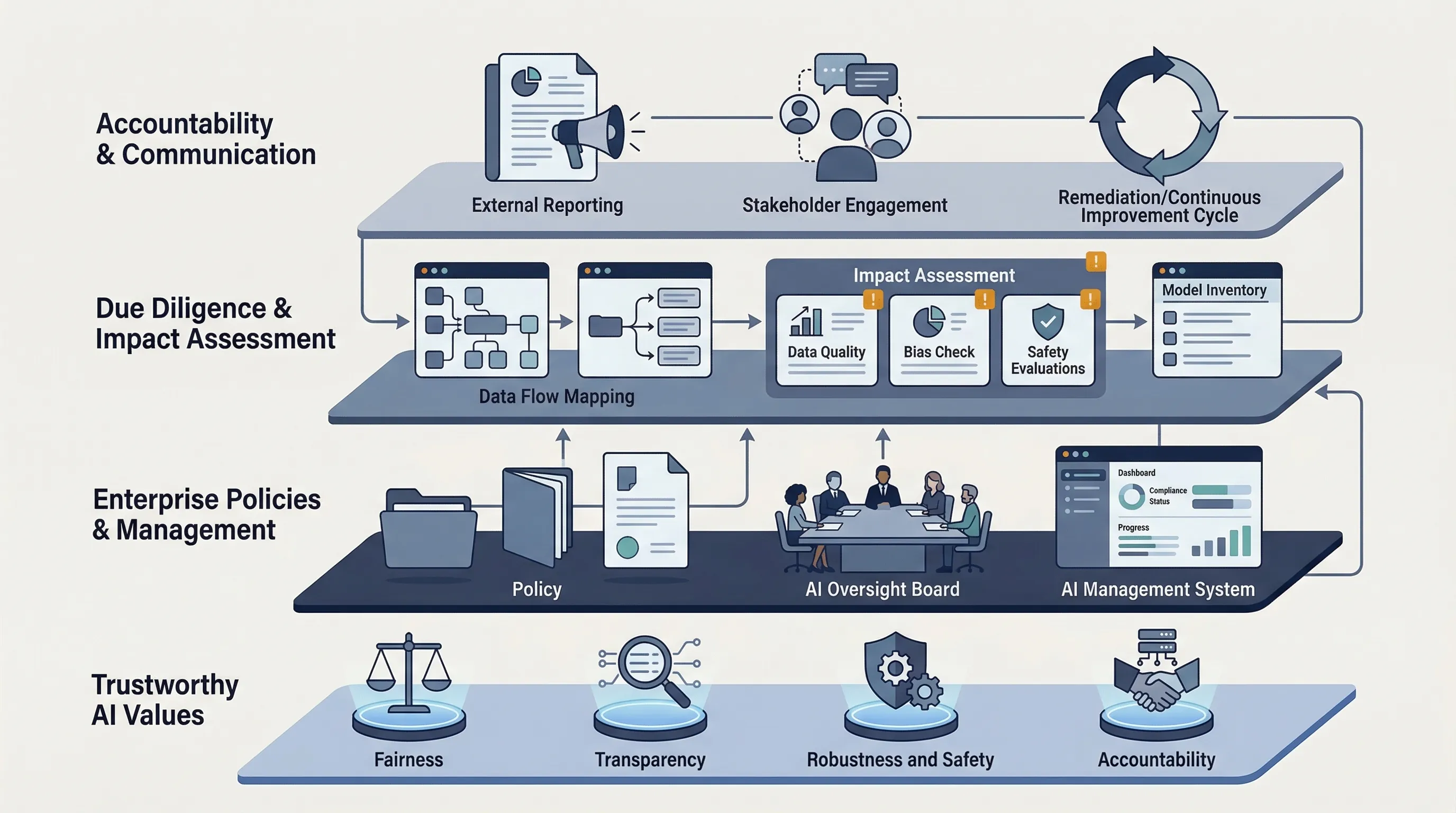

For enterprise use, the core themes break down into familiar governance concerns: beneficial outcomes, human rights and fairness, transparency and explainability, robustness and safety, and accountability. None of that is novel in 2026. The value is that the OECD packages these themes into an internationally credible baseline that can anchor policy language and executive communication across markets.

Bluntly: if you are pitching AI governance to a board, an investor, or a multinational customer, "we align to OECD AI Principles" is materially stronger than "we believe in ethical AI." One is an actual governance reference point. The other is usually filler.

OECD AI Principles to Enterprise Controls

The Principles only become useful when translated into controls. That is where most organizations stop too early. They copy the OECD language into a policy intro and never map it to owners, procedures, or evidence. That turns a credible governance source into decoration.

| Principle area | What it means operationally | Typical governance artifact |
|---|---|---|
| Beneficial and inclusive outcomes | Define intended benefits, affected stakeholders, and unacceptable harms | Use-case approval criteria, stakeholder-impact note, benefit-risk summary |
| Human rights, fairness, privacy | Screen systems for discrimination, privacy risk, and misuse of personal data | Impact assessment, privacy review, fairness testing plan |
| Transparency and explainability | Decide what users, customers, and internal teams need to know about system behavior | Disclosure language, model card, decision log, communication policy |
| Robustness, security, and safety | Implement monitoring, validation, incident handling, and secure operation | Testing report, incident workflow, monitoring dashboard, change-control log |
| Accountability | Assign responsibility for approval, deployment, oversight, and remediation | RACI, governance charter, management review minutes |

Once you do that mapping, the OECD Principles stop being "soft" and start becoming a practical governance baseline.
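The mapping step can be sketched as a small register that flags any principle still missing an owner, a procedure, or evidence. This is a minimal illustration, not an OECD-defined schema: the `ControlMapping` class, its field names, and the role title are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ControlMapping:
    """One row of a principle-to-control map (field names are illustrative)."""
    principle: str
    owner: str = ""  # accountable role; an empty string means unassigned
    procedures: list[str] = field(default_factory=list)
    evidence: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """Return which of owner, procedures, or evidence are still missing."""
        missing = []
        if not self.owner.strip():
            missing.append("owner")
        if not self.procedures:
            missing.append("procedures")
        if not self.evidence:
            missing.append("evidence")
        return missing


def unmapped(mappings: list[ControlMapping]) -> dict[str, list[str]]:
    """Map each principle that still has gaps to its list of missing items."""
    return {m.principle: m.gaps() for m in mappings if m.gaps()}


register = [
    ControlMapping(
        principle="Accountability",
        owner="Head of AI Governance",  # hypothetical role title
        procedures=["Use-case approval"],
        evidence=["RACI", "Governance charter"],
    ),
    ControlMapping(
        principle="Transparency and explainability",
        evidence=["Model card"],  # evidence exists, but no owner or procedure yet
    ),
]

print(unmapped(register))
```

A principle that appears in the output is one that has been quoted but not yet operationalized, which is exactly the "decoration" failure described earlier in this section.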

OECD Due Diligence Guidance for Responsible AI

This is the part most teams have not caught up with. On 19 February 2026, the OECD published the OECD Due Diligence Guidance for Responsible AI. In the abstract, the OECD says the report provides practical guidance to enterprises for implementing OECD standards on responsible business conduct and the OECD AI Principles when developing and using AI. That makes this publication the bridge between high-level principles and enterprise implementation. See the official publication page.

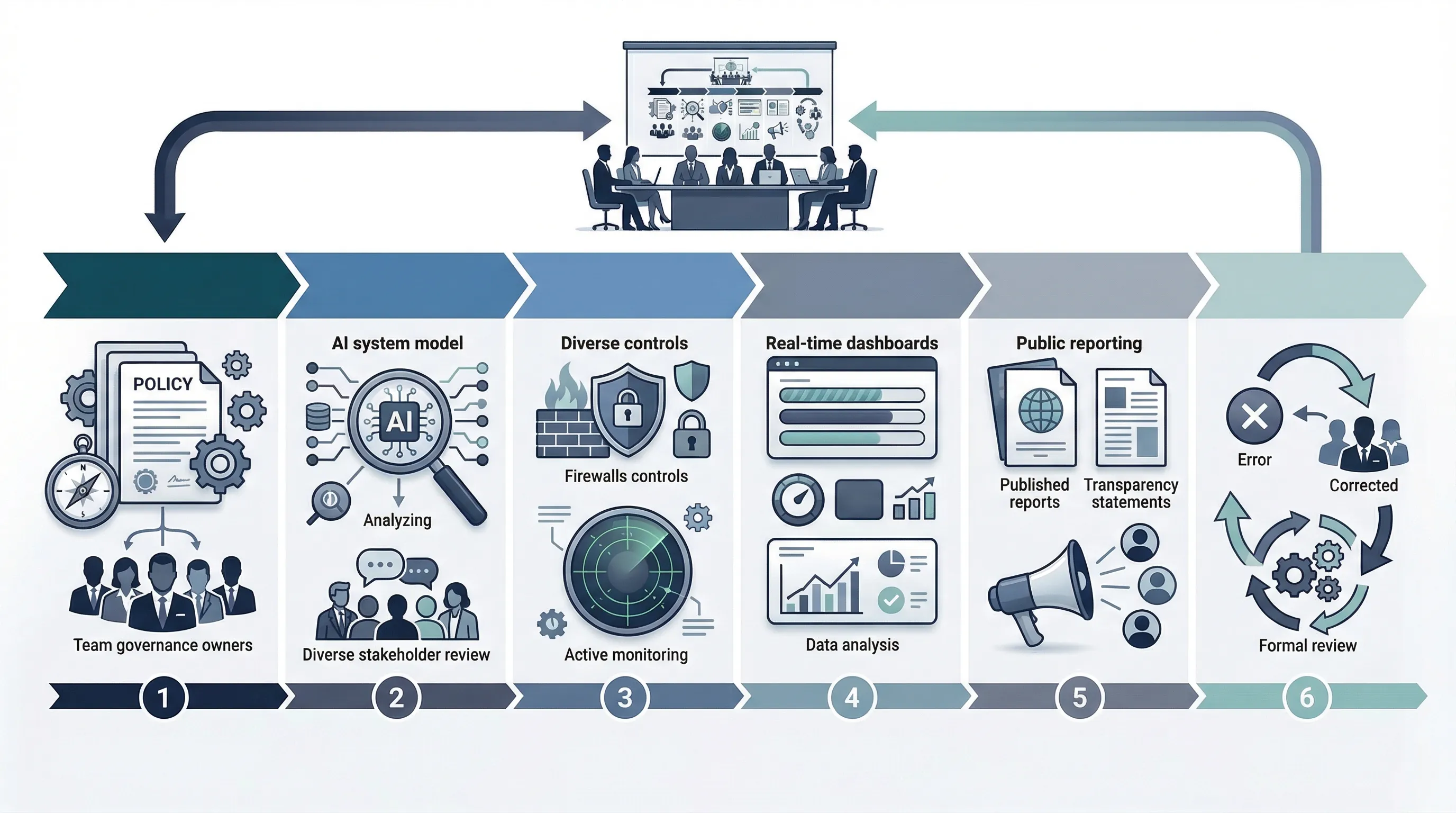

The guidance is especially useful because it frames responsible AI through a due diligence model that many governance and responsible-business teams already understand: embed into policies and management systems, identify and assess impacts, prevent or mitigate, track implementation, communicate actions, and provide for remediation where appropriate. The OECD lists those due diligence steps in the executive summary of the publication. Official source.

| Due diligence step | AI governance action | Evidence |
|---|---|---|
| Embed into policies and systems | Adopt AI policy, assign roles, define governance cadence | Policy, RACI, management review schedule |
| Identify and assess impacts | Run impact and risk assessments on use cases and vendors | Impact assessment, risk register, supplier review |
| Prevent and mitigate | Implement controls, escalation paths, and monitoring | Control set, mitigation plan, incident workflow |
| Track implementation | Monitor outcomes, incidents, and control performance | Dashboards, logs, KPI reviews, audit trails |
| Communicate and remediate | Publish disclosures, respond to issues, and manage remediation | Communication templates, complaints process, remediation records |

This guidance is the main reason the OECD layer is more commercially relevant in 2026 than it was even a year ago. The Principles now have a clearer bridge to enterprise operations.
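One way to make the due diligence steps checkable is to record evidence per step for each use case and list the steps that have none. The sketch below assumes the five-row grouping used in the table above. The `Step` enum, the `missing_steps` helper, and the sample log are hypothetical and are not taken from the OECD guidance.

```python
from enum import Enum


class Step(Enum):
    """Due diligence steps, grouped as in the table above."""
    EMBED = "Embed into policies and systems"
    ASSESS = "Identify and assess impacts"
    MITIGATE = "Prevent and mitigate"
    TRACK = "Track implementation"
    COMMUNICATE_REMEDIATE = "Communicate and remediate"


def missing_steps(evidence_log: dict[Step, list[str]]) -> list[Step]:
    """Return the steps with no recorded evidence, in table order."""
    return [step for step in Step if not evidence_log.get(step)]


# Hypothetical evidence log for a single use case.
chatbot_log = {
    Step.EMBED: ["AI policy v1", "RACI"],
    Step.ASSESS: ["Impact assessment"],
    Step.TRACK: [],  # step acknowledged, but nothing recorded yet
}

for step in missing_steps(chatbot_log):
    print(f"No evidence yet: {step.value}")
```

Treating an empty list the same as a missing entry is a deliberate choice here: a step with a placeholder but no artifact is still a gap.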

Common failure pattern: teams cite the OECD AI Principles in slide decks but never connect them to due diligence, stakeholder engagement, or remediation. That strips out the most operationally useful part of the OECD model.

OECD vs EU AI Act vs ISO 42001 vs NIST AI RMF

These instruments do different jobs. The OECD Principles are normative and intergovernmental. The EU AI Act is binding law. ISO 42001 is an auditable management-system standard. NIST AI RMF is a broad risk-management framework. Trying to use one as a substitute for the others is a category error.

| Dimension | OECD | EU AI Act | ISO 42001 | NIST AI RMF |
|---|---|---|---|---|
| Nature | Intergovernmental principles and due diligence guidance | Binding regulation | Certifiable management-system standard | Voluntary risk-management framework |
| Primary use | Governance baseline and board narrative | Legal compliance and operator obligations | Management system and evidence discipline | Risk identification, mapping, and treatment |
| Main audience | Policymakers and AI actors broadly | Providers, deployers, importers, distributors | Organizations building formal AI governance | Cross-functional AI risk teams |
| Commercial role | Credibility and interoperability layer | Market-access and regulatory layer | Operational governance layer | Risk-method layer |

Board-Level Use of the OECD Principles

The board-level value of the OECD Principles is simple: they let leadership discuss AI governance in a language that is durable across jurisdictions. The Principles are useful for setting tone, defining expectations, and explaining how the organization intends to use AI responsibly without locking the board into a single technical framework or one country's law.

That makes them particularly useful for procurement statements, supplier expectations, investor communication, and executive briefing packs. They help answer the question, "what is our governing philosophy for AI?" That is a different question from "what controls do we need for this product?" Both matter. They should not be collapsed into one slide.

45-Day Governance Baseline Sprint

You do not need a long principles workshop. You need a short sprint that turns OECD language into governance infrastructure.

- Days 1-10: brief leadership, define why the OECD Principles are being used, and map the principles to internal governance themes.

- Days 11-20: map principles to policies, roles, and required evidence artifacts.

- Days 21-30: run impact and due diligence mapping for priority use cases and suppliers.

- Days 31-45: approve the governance baseline, define monitoring and remediation ownership, and issue the board-focused narrative.

If you cannot do that in 45 days, the blocker is usually not conceptual complexity. It is the absence of a governance owner willing to translate principles into operating rules.
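To put the sprint on a calendar, the day ranges can be converted to dates from a chosen start. This sketch assumes calendar days rather than working days and treats day 1 as the start date itself. The `sprint_calendar` function and the start date are illustrative only.

```python
from datetime import date, timedelta

# Phases as (first day, last day, goal), using the day ranges from the sprint list.
PHASES = [
    (1, 10, "Brief leadership and map principles to governance themes"),
    (11, 20, "Map principles to policies, roles, and evidence artifacts"),
    (21, 30, "Run impact and due diligence mapping for priority use cases and suppliers"),
    (31, 45, "Approve baseline, assign monitoring and remediation ownership, issue board narrative"),
]


def sprint_calendar(start: date) -> list[tuple[date, date, str]]:
    """Convert day offsets to calendar dates; day 1 is the start date itself."""
    return [
        (start + timedelta(days=first - 1), start + timedelta(days=last - 1), goal)
        for first, last, goal in PHASES
    ]


# The start date below is arbitrary and only for illustration.
for begin, end, goal in sprint_calendar(date(2026, 3, 2)):
    print(f"{begin.isoformat()} to {end.isoformat()}: {goal}")
```

If the organization plans in working days, the offsets would need a business-day calculation instead of plain `timedelta` arithmetic.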
