Why OpenClaw is a different governance problem

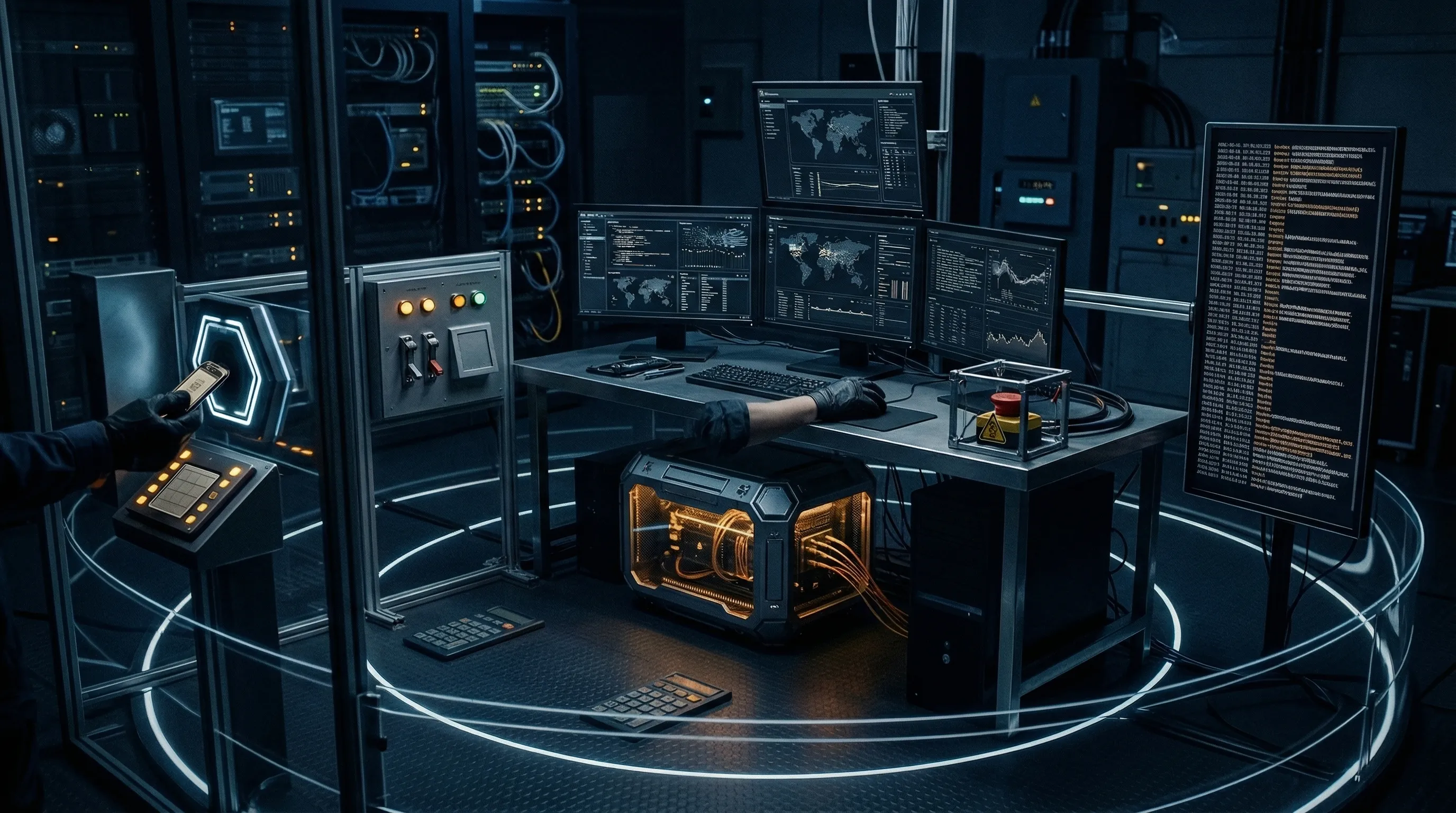

OpenClaw isn't just another chatbot wrapper. NVIDIA is pitching NemoClaw as a way to deploy always-on assistants with policy-based privacy and security guardrails, including a sandboxed runtime and local execution options. That matters because the commercial story is no longer "ask a model a question." It is "give an agent durable access to tools, memory, files, and long-running tasks."

That's a different governance problem. A normal LLM can leak data or produce bad output. An always-on agent can also open files, call tools, hold credentials, persist state across sessions, and keep acting after the original user prompt is long gone. In practice, the risk owner is no longer just the model team. It is endpoint security, IAM, IT operations, procurement, legal, and whoever approved the workflow in the first place.

Blunt version: if your review process still treats agents as "just another GenAI tool," you're under-scoping the risk. The control boundary has moved from prompt safety to system action.

Two boundaries are worth defining before any review:

- Runtime boundary: the technical environment where the agent executes actions, including local processes, containers, shells, and attached tools.

- Autonomy boundary: the explicit limit on what the agent may do without human approval, including financial, HR, identity, and external communications actions.
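
To make the autonomy boundary concrete, here is a minimal Python sketch of a deny-by-default decision function. The category names, the `ProposedAction` type, and the `decide` function are all hypothetical, chosen only to mirror the definition above; they are not part of OpenClaw, NemoClaw, or any vendor API.

```python
from dataclasses import dataclass

# Assumption: category labels invented for illustration, mirroring the
# action types named in the autonomy-boundary definition.
APPROVAL_REQUIRED = {"financial", "hr", "identity", "external_communications"}


@dataclass(frozen=True)
class ProposedAction:
    name: str
    category: str  # e.g. "read_only" or "financial"


def decide(action: ProposedAction, autonomous_categories: set[str]) -> str:
    """Return 'needs_approval', 'autonomous', or 'deny' for a proposed action."""
    if action.category in APPROVAL_REQUIRED:
        return "needs_approval"
    if action.category in autonomous_categories:
        return "autonomous"
    # Anything unclassified is denied rather than silently allowed.
    return "deny"
```

The design choice that matters is the last branch: an action nobody classified falls outside the boundary by default instead of inheriting autonomy.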

The 15 controls that matter first

You don't need a 90-control framework on day one. You do need a hard stop on the controls below. They're the minimum set I'd want in place before approving an agent that can touch enterprise systems.

| # | Control | What good looks like | Owner |
|---|---|---|---|
| 1 | Named business sponsor | One executive signs off on purpose, scope, and acceptable failure modes. | Business |
| 2 | System inventory entry | The agent, model, tools, data stores, and environments are registered before pilot go-live. | GRC / IT |
| 3 | Use-case classification | Consequential decisions, external communications, code execution, and customer data triggers are flagged. | Risk |
| 4 | Least-privilege credentials | Separate service accounts, scoped tokens, no shared admin credentials. | IAM |
| 5 | Tool allowlist | Only approved tools are callable; dangerous functions are disabled by default. | Security Engineering |
| 6 | Sandboxed runtime | Execution is isolated from unmanaged terminals, unrestricted shells, and broad file system access. | Platform |
| 7 | Data classification gate | PII, customer data, source code, and secrets are blocked unless explicitly approved. | Privacy / Security |
| 8 | Memory retention rules | Persistent context is limited, reviewable, and subject to retention and deletion rules. | Data Governance |
| 9 | Human approval thresholds | Payments, hiring actions, access changes, policy changes, and external sends require sign-off. | Business / Compliance |
| 10 | Logging and replay | Prompts, tool calls, outputs, decisions, and overrides are retained in an audit trail. | Security Operations |
| 11 | Kill switch | A clear mechanism exists to suspend the agent quickly at workflow, credential, and runtime level. | Ops |
| 12 | Red-team scenarios | Prompt injection, tool misuse, memory poisoning, credential abuse, and runaway loop tests are documented. | Security |
| 13 | Vendor due diligence | Runtime, model, plug-in, and hosting suppliers are reviewed as third parties, not treated as invisible infrastructure. | Procurement |
| 14 | Incident playbook | Detection, containment, stakeholder escalation, and evidence preservation steps are pre-written. | IR Team |
| 15 | Periodic review | Permissions, prompts, tools, performance drift, and risk classification are re-approved on a fixed cadence. | Internal Audit / Risk |
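
As a rough illustration of how controls 5 and 11 can sit in the same code path, the sketch below routes every tool call through one gate that checks a kill switch and then an allowlist. `ToolGate` and the `search_docs` tool are hypothetical names for this example only. A real deployment would also enforce suspension at the credential and runtime levels, which this in-process sketch does not cover.

```python
class ToolGate:
    """Single choke point for tool calls: kill switch first, allowlist second."""

    def __init__(self, allowlist):
        # Mapping of approved tool name -> callable; anything else is refused.
        self._tools = dict(allowlist)
        self._suspended = False

    def suspend(self):
        # Workflow-level kill switch: blocks every later call through this gate.
        self._suspended = True

    def call(self, name, *args, **kwargs):
        if self._suspended:
            raise PermissionError("agent suspended by kill switch")
        if name not in self._tools:
            raise PermissionError(f"tool not on allowlist: {name}")
        return self._tools[name](*args, **kwargs)


# Hypothetical read-only tool used purely for the example.
gate = ToolGate({"search_docs": lambda query: f"results for {query}"})
```

Because the agent never holds a direct reference to a tool, suspending the gate stops new actions even if the agent loop itself keeps running.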

Notice what's missing: model benchmark theatre. That isn't because testing quality doesn't matter. It does. But the first enterprise failure mode for agentic systems is usually governance drift around access, approval, and hidden persistence.

Where teams usually get this wrong

The common mistake is assuming the runtime vendor solved governance for you. NemoClaw's positioning around safer deployment and sandboxed environments is useful, but it does not remove the need to classify the use case, scope credentials, define approval thresholds, or retain evidence. Vendor controls reduce technical exposure. They do not transfer accountability.

Related reading: if you need the security taxonomy behind those controls, start with OWASP Top 10 for Agentic AI and then map the gaps into your broader agentic governance program.

Minimum evidence package for review

If an internal auditor or regulator asked next week, "Why did you approve this agent?", you need a tighter answer than "engineering tested it." The minimum evidence pack should include:

- Use-case memo: objective, system boundary, prohibited actions, owner, and affected business process.

- Architecture view: model, runtime, tools, memory, data stores, and external connections.

- Permission matrix: each credential, token, tool, scope, and expiry.

- Approval matrix: which actions are autonomous, which are human-approved, and who signs off.

- Logging specification: what gets logged, where, for how long, and who can review it.

- Incident response procedure: detection sources, shutdown options, escalation contacts, and evidence preservation steps.
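
The permission matrix is the easiest item in that pack to keep machine-checkable. The sketch below assumes a hypothetical `Grant` record with the fields listed above (credential, tool, scope, expiry) and flags entries a reviewer would likely challenge. The "too broad" scope values are placeholders, not a standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Assumption: scope strings treated as over-broad for illustration only.
BROAD_SCOPES = {"*", "admin"}


@dataclass(frozen=True)
class Grant:
    credential: str
    tool: str
    scope: str
    expires: Optional[date]  # None means no expiry was recorded


def review_findings(grants, today):
    """Return human-readable findings for grants that need attention."""
    findings = []
    for g in grants:
        if g.expires is None:
            findings.append(f"{g.credential}: no expiry set")
        elif g.expires < today:
            findings.append(f"{g.credential}: expired {g.expires.isoformat()}")
        if g.scope in BROAD_SCOPES:
            findings.append(f"{g.credential}: scope '{g.scope}' is too broad")
    return findings
```

Running a check like this on a schedule gives the periodic review something concrete to re-approve instead of a static spreadsheet.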

That evidence set aligns cleanly with the documentation, risk treatment, lifecycle, third-party, and audit expectations already sitting inside ISO 42001 and NIST AI RMF. This is exactly where a cross-framework toolkit saves time: you aren't inventing a review pack from scratch every time a new agent arrives.

A sane deployment path for SMEs

For most SMEs, the right sequence is simple. Start with one internal workflow that has a bounded blast radius, no customer-facing autonomy, no financial authority, and reversible actions. Keep the first pilot read-heavy and write-light. Don't let the first project be inbox triage plus send authority, credential resets, or anything tied to employment decisions. Those look efficient on paper. They also create ugly incident narratives.

Run the pilot through your AI system inventory, classify the risk, force a named sign-off, and make the kill switch real. Then test whether your logs would actually let you reconstruct a bad decision. If not, you are not ready for broader rollout.
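
One way to run that log test is to pick a single agent run and check whether every event type needed to reconstruct it is actually present. The sketch below assumes a hypothetical JSON Lines audit log in which each record carries a `run_id` and a `type`. Both field names and the list of required event types are illustrative, not a format defined by any particular runtime.

```python
import json

# Assumption: event types a reviewer needs to replay one decision.
REQUIRED_EVENTS = ("prompt", "tool_call", "output", "decision")


def missing_events(log_lines, run_id):
    """Return required event types that are absent from the log for one run."""
    seen = set()
    for line in log_lines:
        event = json.loads(line)
        if event.get("run_id") == run_id:
            seen.add(event.get("type"))
    return [name for name in REQUIRED_EVENTS if name not in seen]


sample_log = [
    '{"run_id": "r1", "type": "prompt"}',
    '{"run_id": "r1", "type": "tool_call"}',
    '{"run_id": "r2", "type": "output"}',
]
```

For run `r1`, this sample log is missing the output and the decision, so you could see what the agent was asked and which tool it called but not what it concluded.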