Why Conventional AI Governance Breaks for Agents

ISO 42001 and NIST AI RMF were written for a world where an AI system receives a request, processes it, and returns a response. One input. One output. One decision point. That model worked for chatbots, classifiers, and recommendation engines. It doesn't work for agents.

Agentic AI systems plan, act, and adapt across multiple steps. They call external APIs, write to databases, send emails, trigger purchases, and spin up sub-agents to handle delegated tasks. A procurement agent that can place orders and adjust inventory isn't just a model producing text—it's an autonomous actor with real-world consequences.

Three failure modes make conventional governance inadequate for agents. First, irreversible real-world actions: once an agent executes a wire transfer or deletes a record, there's no rollback button. Second, sub-agent delegation creates accountability gaps: if an orchestrator agent delegates a task to a sub-agent that makes a harmful decision, who's responsible? Third, persistent memory and tool chaining compound risk over time—an agent that remembers previous sessions and chains together six API calls builds up exposure that no single-interaction risk model can capture.

These aren't theoretical concerns. They're architectural realities of any production agent deployment.

- Agentic AI: AI systems that autonomously plan, execute multi-step actions, invoke tools, and adapt behavior to achieve goals without continuous human direction.

- Bounded Autonomy: Designing agents to operate within explicitly defined operational boundaries (constrained tool sets, scoped data access, capped authorization), with mandatory escalation for anything outside those bounds.

- Human-in-the-Loop (HITL): Human approval is required before an agent executes a specific action. Real-time intervention, not post-hoc review.

- Human-on-the-Loop (HOTL): A human monitors agent behavior and can intervene, but the agent proceeds without pre-approval. Post-hoc review with override capability.

| Characteristic | Traditional AI | Agentic AI |
|---|---|---|
| Interaction model | Request → response (single step) | Multi-step planning, execution, and adaptation |
| External access | None or sandboxed | Tool calls, API invocation, database writes, external communications |
| Delegation | Not applicable | Orchestrator → sub-agent chains with task delegation |
| Memory | Stateless or session-scoped | Persistent memory across sessions, context accumulation |
| Risk surface | Output quality, bias, hallucination | All of the above plus: unauthorized actions, scope creep, cascading failures, goal hijack |
| Oversight model | Output review sufficient | Requires pre-execution checkpoints, mid-execution monitoring, audit trail across all steps |
| Incident response | Standard IR playbook | Agent-specific IR: isolation procedures, kill switches, forensic session replay |

Quick governance check: If your organization has deployed AI agents but hasn't extended its AI governance framework beyond what ISO 42001 or NIST AI RMF require, you have gaps.


The Four Active Governance Frameworks

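Four frameworks currently shape agentic AI governance. Two were published between January and March 2026 specifically for agents (IMDA's framework and UC Berkeley's profile), OWASP's agentic threat catalog is the 2026 list, and ISO/IEC 42001:2023 predates agents but is the AI management-system standard organizations certify against. Each covers different dimensions of the problem. None of them covers everything. Understanding what each does, and what it doesn't, is the foundation for building something comprehensive.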

Singapore IMDA Model AI Governance Framework for Agentic AI

Released: January 22, 2026 · Status: Voluntary, non-binding guidance

IMDA's Model AI Governance Framework for Agentic AI is a voluntary government-issued reference for agentic AI governance. IMDA defines four dimensions: (1) assessing and bounding risks upfront, (2) making humans meaningfully accountable, (3) implementing technical controls throughout the agent lifecycle, and (4) enabling end-user responsibility. The emphasis on "meaningful human accountability" goes beyond traditional human-in-the-loop: someone with authority and understanding needs to be assigned and available to intervene, not just named on an org chart.

UC Berkeley CLTC Agentic AI Risk Management Standards Profile

Released: February 2026 · Status: Academic/research framework extending NIST AI RMF

Berkeley's profile introduces a crucial insight: agency is a spectrum, not a binary. An agent that suggests actions but doesn't execute them needs different governance than one that autonomously places financial trades. The two core concepts are bounded autonomy (explicit operational boundaries per agent) and defense-in-depth containment (layered controls, so that if one boundary fails, the next catches it). The profile is stronger on principles than on operational procedures, but its conceptual framework is sound. It maps directly onto NIST AI RMF functions, which makes it useful for organizations that already use the RMF.
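To make defense-in-depth containment concrete, here is a minimal Python sketch under stated assumptions: each boundary is modeled as an independent check function, and an action runs only if no layer objects. The `Action` type, the layer functions, and the limits are hypothetical illustrations, not something the Berkeley profile specifies.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

@dataclass
class Action:
    tool: str            # tool or API the agent wants to invoke
    amount: float = 0.0  # financial value of the action, if any

# A containment layer returns None to allow the action, or a reason string to block it.
Layer = Callable[[Action], Optional[str]]

def tool_whitelist(allowed: set) -> Layer:
    def check(action: Action) -> Optional[str]:
        return None if action.tool in allowed else f"tool '{action.tool}' is not whitelisted"
    return check

def spend_cap(limit: float) -> Layer:
    def check(action: Action) -> Optional[str]:
        return None if action.amount <= limit else f"amount {action.amount} exceeds cap {limit}"
    return check

def contain(action: Action, layers: List[Layer]) -> List[str]:
    """Run every layer independently and collect all objections.

    An empty list means the action may proceed. Evaluating all layers (rather
    than stopping at the first) records every boundary the action crossed."""
    reasons = []
    for layer in layers:
        reason = layer(action)
        if reason is not None:
            reasons.append(reason)
    return reasons

# Hypothetical policy for a procurement agent.
layers = [tool_whitelist({"search_catalog", "create_po"}), spend_cap(5_000.0)]
print(contain(Action("create_po", 1_200.0), layers))      # []
print(contain(Action("wire_transfer", 9_000.0), layers))  # two reasons: tool and amount
```

Because the layers don't depend on each other, a misconfigured whitelist still leaves the spend cap in force, which is the point of layering.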

OWASP Top 10 for Agentic Applications for 2026

Current reference: 2026 list · Status: Peer-reviewed, globally recognized security risk catalog

OWASP doesn't tell you how to govern agents. It tells you what can go wrong. The ten risks are: ASI01 Agent Goal Hijack, ASI02 Tool Misuse and Exploitation, ASI03 Identity and Privilege Abuse, ASI04 Agentic Supply Chain Vulnerabilities, ASI05 Unexpected Code Execution, ASI06 Memory and Context Poisoning, ASI07 Insecure Inter-Agent Communication, ASI08 Cascading Failures, ASI09 Human-Agent Trust Exploitation, and ASI10 Rogue Agents. It's a threat model input, not a governance framework. But every governance control you build should address at least one of these risks.
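One way to apply the "every control addresses at least one risk" rule is to keep a control catalog keyed to the ASI identifiers and check it mechanically. The sketch below assumes a simple dictionary catalog; the control IDs and their risk mappings are hypothetical, and the reverse check (risks no control covers) is an addition beyond OWASP's list itself.

```python
# ASI identifiers from the OWASP Top 10 for Agentic Applications (2026), as listed above.
ASI_RISKS = {
    "ASI01": "Agent Goal Hijack",
    "ASI02": "Tool Misuse and Exploitation",
    "ASI03": "Identity and Privilege Abuse",
    "ASI04": "Agentic Supply Chain Vulnerabilities",
    "ASI05": "Unexpected Code Execution",
    "ASI06": "Memory and Context Poisoning",
    "ASI07": "Insecure Inter-Agent Communication",
    "ASI08": "Cascading Failures",
    "ASI09": "Human-Agent Trust Exploitation",
    "ASI10": "Rogue Agents",
}

# Hypothetical control catalog: control ID -> ASI risks the control mitigates.
controls = {
    "CTL-TOOL-WHITELIST": {"ASI02", "ASI03"},
    "CTL-INTERAGENT-AUTH": {"ASI07"},
    "CTL-MEMORY-REVIEW": {"ASI06"},
    "CTL-KILL-SWITCH": {"ASI08", "ASI10"},
    "CTL-LEGACY-REVIEW": set(),  # addresses no ASI risk
}

def unmapped_controls(catalog: dict) -> list:
    """Controls that don't address any known ASI risk."""
    known = set(ASI_RISKS)
    return sorted(cid for cid, risks in catalog.items() if not (risks & known))

def uncovered_risks(catalog: dict) -> list:
    """ASI risks that no control in the catalog addresses."""
    covered = set().union(*catalog.values())
    return sorted(set(ASI_RISKS) - covered)

print(unmapped_controls(controls))  # ['CTL-LEGACY-REVIEW']
print(uncovered_risks(controls))    # ['ASI01', 'ASI04', 'ASI05', 'ASI09']
```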

ISO/IEC 42001:2023 — Partial Coverage

Status: International standard for AI management systems

ISO 42001 wasn't designed for agents, and it shows. Annex A controls A.9.2 (human oversight), A.6 (AI system lifecycle), A.10 (third-party AI), and A.4.4 (tooling resources) provide a skeleton. Clause 8.1 (operational planning and control) and Clause 6.1 (risk assessment) are the insertion points where you'd bolt on agentic-specific risk treatments. But there's no coverage of multi-agent orchestration, bounded autonomy specification, inter-agent trust protocols, or agent-specific incident response. If you're pursuing ISO 42001 certification and you've deployed agents, you'll need supplementary controls. The standard alone won't protect you.

Framework Coverage Comparison

This table shows where each framework provides coverage, where it's partial, and where the gaps are. The "Gap" column identifies what your organization needs to build in-house or source from a governance toolkit.

| Risk Category | IMDA | UC Berkeley | OWASP | ISO 42001 | Gap |
|---|---|---|---|---|---|
| Bounded autonomy design | ✓ Dim. 1 | ✓ Core concept | ✕ | ● A.6.1 (scope) | No mandatory boundary specification standard |
| Human accountability checkpoints | ✓ Dim. 2 | ✓ Escalation paths | ✕ | ● A.9.2 (oversight) | No trigger criteria defined for agent-specific escalation |
| Tool/API access controls | ✓ Dim. 3 | ✓ Containment | ● ASI02 | ● A.4.4 (tooling) | No whitelisting or permissioning standard |
| Inter-agent communication | ✕ | ● Mentioned | ✓ ASI07 | ✕ | Critical gap: no trust protocol standard exists |
| Memory/context integrity | ✕ | ● Harm paths | ✓ ASI06 | ✕ | Critical gap: no memory governance controls |
| Agent identity/authentication | ✕ | ● Partial | ✓ ASI03 | ● A.3.2 (roles) | No identity standards for agents |
| Supply chain (agent/plugin) | ● Dim. 3 | ● Partial | ✓ ASI04 | ✓ A.10 | Audit trail requirements weak for agent ecosystems |
| Incident response (agent-specific) | ● Dim. 3 | ✕ | ✕ | ● A.8.4 (incident communication) | Agent-specific IR procedures not defined anywhere |

✓ = full coverage ● = partial coverage ✕ = not addressed

Bottom line: No single framework covers all eight risk categories. OWASP is strongest on security threats but doesn't provide governance controls. IMDA and Berkeley are strongest on governance principles but leave security implementation to you. ISO 42001 provides the management system skeleton but has no agent-specific muscle. You need to layer all four, not choose one.

What a Complete Agentic AI Governance Framework Looks Like

After mapping the four frameworks against each other, what emerges is a six-domain governance model that addresses the gaps none of them individually covers. This isn't theoretical—these are the domains any organization deploying agents needs to implement.

1. Agent Inventory and Classification

Every agent must be documented: its purpose, autonomy level (L1 assistive through L5 fully autonomous), which tools and APIs it's authorized to call, what data it can access, and who's responsible for it. Classification triggers governance requirements: a higher autonomy level means more oversight, more logging, and faster escalation paths. If you don't know what agents you've deployed, you can't govern them. This maps to ISO 42001 Clause 8.1 (operational planning), Annex A.3.2 (roles), and NIST AI RMF GOVERN 1.6 (AI system inventory).
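A registry entry can carry the classification directly, so required controls follow from the autonomy level. This is a minimal sketch assuming an in-memory registry; the field names, owner value, and control labels are hypothetical and only loosely based on the minimum/enhanced split in the governance-domain table of this article (enhanced controls at L3 and above). The article names only L1 (assistive) and L5 (fully autonomous), so L2 to L4 are left unlabeled.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Optional

class Autonomy(IntEnum):
    L1 = 1  # assistive
    L2 = 2
    L3 = 3
    L4 = 4
    L5 = 5  # fully autonomous

@dataclass
class AgentRecord:
    name: str
    purpose: str
    autonomy: Autonomy
    tools: frozenset        # tools/APIs the agent is authorized to call
    data_scopes: frozenset  # data the agent may access
    owner: Optional[str]    # accountable human; None if nobody has claimed it

# Hypothetical control labels, loosely following the article's minimum vs enhanced controls.
MINIMUM = {"registry entry", "documented scope", "tool whitelist", "tool-call and override logs"}
ENHANCED = {"classification-triggered review", "hard technical enforcement", "full session replay"}

def required_controls(agent: AgentRecord) -> set:
    """Classification drives requirements: L3 and above get the enhanced set."""
    return (MINIMUM | ENHANCED) if agent.autonomy >= Autonomy.L3 else set(MINIMUM)

def inventory_findings(registry: List[AgentRecord]) -> List[str]:
    """Flag agents with no accountable owner (candidates for shutdown)."""
    return [f"{a.name}: no owner, suspend until one is assigned" for a in registry if not a.owner]

registry = [
    AgentRecord("po-agent", "Place purchase orders", Autonomy.L4,
                frozenset({"create_po"}), frozenset({"inventory"}), "j.doe"),
    AgentRecord("faq-bot", "Answer FAQs", Autonomy.L1,
                frozenset(), frozenset({"kb"}), None),
]
print(inventory_findings(registry))  # ['faq-bot: no owner, suspend until one is assigned']
```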

2. Bounded Autonomy Design

Policy must specify: maximum financial authorization per transaction, maximum data records accessible per session, an approved API and tool whitelist, and maximum task duration before mandatory human review. Design boundary violations as hard stops, not soft warnings. If the agent can override its own boundaries, they aren't boundaries. This is the core principle from Berkeley's profile, operationalized into auditable controls.
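The four policy limits translate naturally into a check that raises instead of warning. The sketch below is illustrative: the class and field names are hypothetical, and the injectable clock exists only to make the duration limit testable. Python's `frozen=True` stops casual reassignment but is not a security boundary, so in practice this check should run outside the agent's own process (for example in a gateway the agent calls through), where the agent cannot touch it.

```python
import time
from dataclasses import dataclass
from typing import Callable

class BoundaryViolation(Exception):
    """Hard stop: raised instead of a warning; the agent has no override path."""

@dataclass(frozen=True)  # attributes can't be reassigned after construction
class Boundaries:
    max_amount_per_txn: float
    max_records_per_session: int
    allowed_tools: frozenset
    max_task_seconds: float

class BoundedSession:
    def __init__(self, bounds: Boundaries, clock: Callable[[], float] = time.monotonic):
        self.bounds = bounds
        self.clock = clock
        self.started = clock()
        self.records_read = 0

    def authorize(self, tool: str, amount: float = 0.0, records: int = 0) -> None:
        """Raise BoundaryViolation if the requested action falls outside any boundary."""
        b = self.bounds
        if tool not in b.allowed_tools:
            raise BoundaryViolation(f"tool '{tool}' is not on the approved list")
        if amount > b.max_amount_per_txn:
            raise BoundaryViolation(f"amount {amount} exceeds per-transaction limit")
        if self.records_read + records > b.max_records_per_session:
            raise BoundaryViolation("session record limit reached")
        if self.clock() - self.started > b.max_task_seconds:
            raise BoundaryViolation("task duration exceeded; mandatory human review")
        self.records_read += records  # count records only for authorized actions

bounds = Boundaries(1_000.0, 500, frozenset({"read_crm", "create_po"}), 900.0)
session = BoundedSession(bounds)
session.authorize("read_crm", records=200)  # within bounds
try:
    session.authorize("create_po", amount=5_000.0)
except BoundaryViolation as exc:
    print("blocked:", exc)
```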

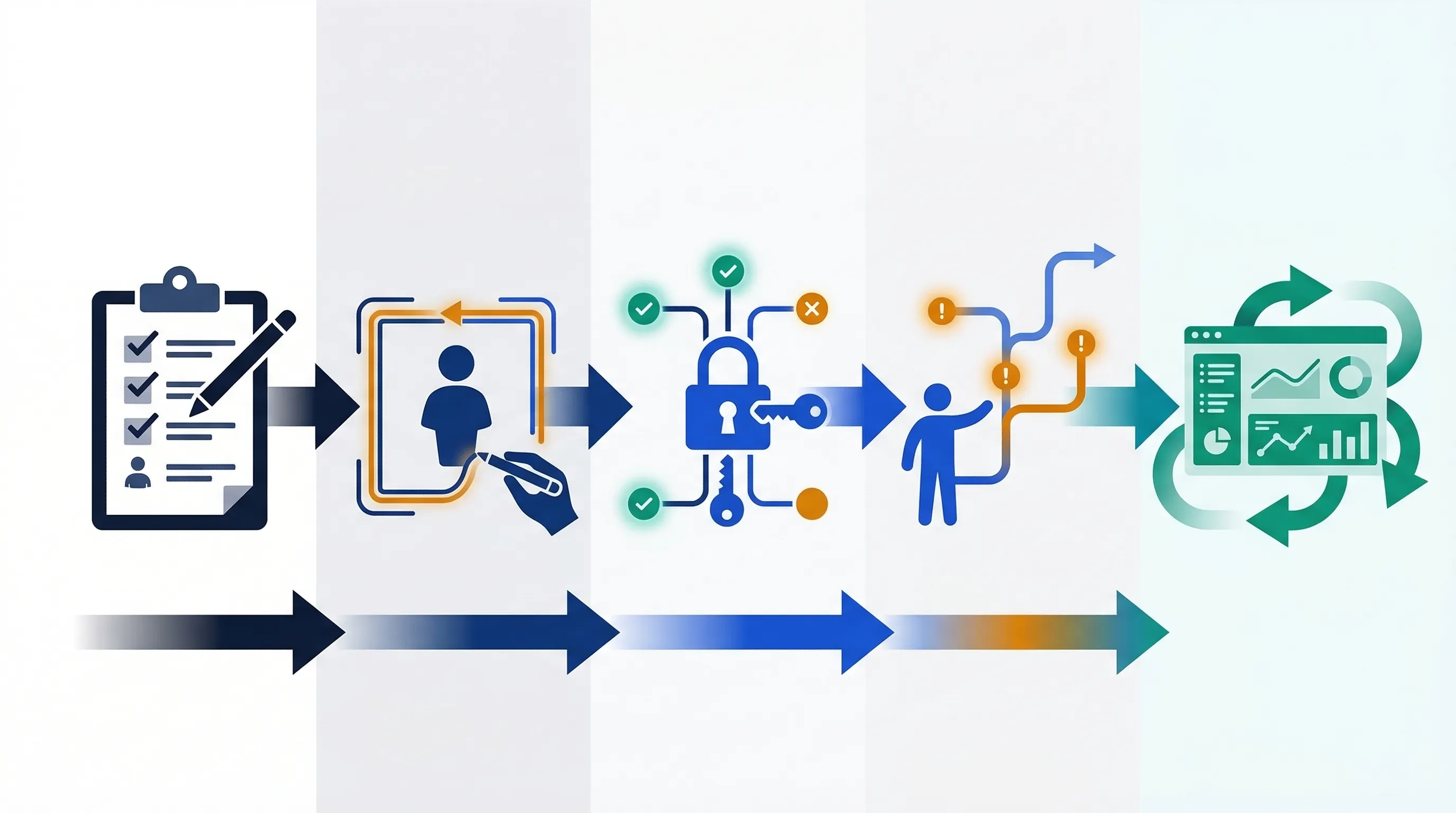

3. Human-in-the-Loop Escalation Controls

Three checkpoint types are needed:

- Pre-execution checkpoints for irreversible actions: financial transactions, external communications, data deletion.

- Mid-execution pause triggers for unexpected behavior: anomalous API call volumes, tool calls outside the whitelist, scope creep indicators.

- Post-execution review requirements: audit log review cadence, anomaly reporting thresholds, override documentation.

The IMDA framework's emphasis on "meaningful" accountability applies here: the human reviewer must have the technical understanding and organizational authority to actually override the agent.
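The checkpoint types can be expressed as a routing decision made before each agent step. This minimal sketch assumes each step is described by an action type, a tool name, and a recent call count; those inputs and the rate threshold are hypothetical. Steps that proceed still go to the audit log for post-execution review.

```python
from enum import Enum

class Checkpoint(Enum):
    PROCEED = "proceed"                    # logged for post-execution review
    REQUIRE_APPROVAL = "require_approval"  # pre-execution checkpoint
    PAUSE = "pause"                        # mid-execution pause trigger

# Irreversible action types named in the text; the labels themselves are illustrative.
IRREVERSIBLE = {"financial_transaction", "external_communication", "data_deletion"}

def route(action_type: str, tool: str, whitelist: set,
          calls_last_minute: int, max_calls_per_minute: int) -> Checkpoint:
    """Decide which checkpoint applies before an agent step runs.

    Anomalies are checked first: an unexpected tool or call burst pauses the
    run even if the action would otherwise only need approval."""
    if tool not in whitelist or calls_last_minute > max_calls_per_minute:
        return Checkpoint.PAUSE
    if action_type in IRREVERSIBLE:
        return Checkpoint.REQUIRE_APPROVAL
    return Checkpoint.PROCEED

print(route("data_deletion", "crm_api", {"crm_api"}, 3, 30))  # Checkpoint.REQUIRE_APPROVAL
```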

4. Tool and API Access Controls

Deny-by-default. Every tool and API the agent can access must be explicitly whitelisted, with role-based permissions tied to the agent's identity—not a shared service account. Quarterly access reviews. This addresses OWASP ASI02 (tool misuse) and ASI03 (privilege abuse). In my assessment, this is where most organizations fail first: they give agents broad production credentials because it's faster than configuring granular permissions.
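A deny-by-default permission table keyed by each agent's own identity might look like the sketch below. The class, the agent and tool names, and the 92-day "quarterly" window are assumptions for illustration; a real deployment would enforce this in the credential or API gateway layer rather than in application code.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, List

class ToolACL:
    """Deny-by-default tool permissions keyed by each agent's own identity."""

    def __init__(self, shared_accounts=frozenset()):
        self._grants: Dict[str, set] = defaultdict(set)  # agent_id -> {(tool, permission)}
        self._shared = set(shared_accounts)

    def grant(self, agent_id: str, tool: str, permission: str) -> None:
        if agent_id in self._shared:
            raise ValueError(f"'{agent_id}' is a shared service account; grant to the agent's own identity")
        self._grants[agent_id].add((tool, permission))

    def revoke(self, agent_id: str, tool: str, permission: str) -> None:
        self._grants.get(agent_id, set()).discard((tool, permission))

    def is_allowed(self, agent_id: str, tool: str, permission: str) -> bool:
        # No explicit grant means no access.
        return (tool, permission) in self._grants.get(agent_id, set())

def overdue_reviews(last_review: Dict[str, date], today: date, max_age_days: int = 92) -> List[str]:
    """Agents whose access hasn't been reviewed within roughly a quarter (92 days is an assumption)."""
    return sorted(a for a, reviewed in last_review.items() if (today - reviewed).days > max_age_days)

acl = ToolACL(shared_accounts={"svc-prod"})
acl.grant("po-agent", "erp.purchase_orders", "create")
print(acl.is_allowed("po-agent", "erp.purchase_orders", "create"))  # True
print(acl.is_allowed("po-agent", "erp.purchase_orders", "delete"))  # False
```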

5. Inter-Agent Communication Governance

If you're running multi-agent pipelines, agents shouldn't blindly accept task delegation from other agents. Require agent authentication before accepting instructions. Log all inter-agent messages with timestamps, source agent ID, target agent ID, and the action requested. This is the gap that no current framework addresses adequately—OWASP flags it as ASI07, but nobody's published implementation guidance yet.
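As a rough sketch of authenticated, logged delegation, the example below signs each instruction with HMAC-SHA256 over canonical JSON and logs every receipt with the fields listed above. A pre-shared key per source agent is an assumption made for brevity; production systems might prefer asymmetric signatures or mutual TLS. The freshness window limits replay but does not replace nonce tracking, and each hop is signed individually rather than as a full chain.

```python
import hashlib
import hmac
import json
import time
from typing import Dict, List, Optional

def _sign(message: dict, key: bytes) -> str:
    # Canonical JSON so signer and verifier hash identical bytes.
    payload = json.dumps(message, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()

def make_instruction(source_id: str, target_id: str, action: str, key: bytes,
                     now: Optional[float] = None) -> dict:
    message = {
        "timestamp": time.time() if now is None else now,
        "source_agent_id": source_id,
        "target_agent_id": target_id,
        "action": action,
    }
    return {"message": message, "signature": _sign(message, key)}

def accept_instruction(envelope: dict, my_id: str, keys: Dict[str, bytes],
                       audit_log: List[dict], max_age_s: float = 30.0,
                       now: Optional[float] = None) -> bool:
    """Verify sender, target, freshness, and signature; log every attempt."""
    now = time.time() if now is None else now
    msg = envelope["message"]
    key = keys.get(msg["source_agent_id"])  # unknown senders have no key
    accepted = (
        key is not None
        and msg["target_agent_id"] == my_id
        and 0 <= now - msg["timestamp"] <= max_age_s
        and hmac.compare_digest(_sign(msg, key), envelope["signature"])
    )
    audit_log.append({**msg, "accepted": accepted})  # timestamp, source, target, action
    return accepted

keys = {"orchestrator": b"demo-shared-secret"}  # hypothetical key store
log: List[dict] = []
env = make_instruction("orchestrator", "buyer-agent", "create_po:SKU-123", keys["orchestrator"], now=1000.0)
print(accept_instruction(env, "buyer-agent", keys, log, now=1001.0))  # True
```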

6. Audit Logging and Incident Response

At minimum: log every tool call with parameters and responses, every human approval or override event, every external data read or write, and every output artifact. Agent-specific incident categories you'll need: goal hijack, unauthorized tool invocation, scope boundary breach, rogue behavior, and cascading failure across agent chains. Your incident response playbook must include an agent isolation procedure—a kill switch or circuit breaker that can halt the agent mid-execution without corrupting the systems it's connected to.
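The logging and isolation requirements can be combined in the agent's execution loop. This sketch assumes the agent runs as a sequence of discrete tool calls and that the kill switch is an in-process flag; the names are hypothetical. It halts only at step boundaries so no call is abandoned half-way, while each call's own atomicity remains the connected system's responsibility. A real isolation procedure would typically also revoke the agent's credentials, which is outside this sketch.

```python
import threading
from typing import Any, Callable, Dict, List, Tuple

Step = Tuple[str, Dict[str, Any], Callable[..., Any]]  # (tool name, parameters, callable)

class KillSwitch:
    """Thread-safe flag an operator or monitor can trip from outside the agent loop."""

    def __init__(self) -> None:
        self._tripped = threading.Event()

    def trip(self) -> None:
        self._tripped.set()

    def is_tripped(self) -> bool:
        return self._tripped.is_set()

def run_agent(steps: List[Step], kill_switch: KillSwitch, audit_log: List[dict]) -> str:
    """Execute steps in order, checking the kill switch before each tool call
    and logging every call with its parameters and response."""
    for i, (tool, params, call) in enumerate(steps):
        if kill_switch.is_tripped():
            audit_log.append({"event": "halted", "before_step": i})
            return "halted"
        response = call(**params)
        audit_log.append({"event": "tool_call", "tool": tool, "params": params, "response": response})
    return "completed"

ks = KillSwitch()
log: List[dict] = []
steps: List[Step] = [
    ("lookup_stock", {"sku": "SKU-1"}, lambda sku: {"sku": sku, "qty": 4}),
    ("monitor_alert", {}, lambda: ks.trip()),  # simulates an operator tripping the switch
    ("create_po", {"sku": "SKU-1", "qty": 100}, lambda sku, qty: "PO-created"),
]
print(run_agent(steps, ks, log))  # halted
```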

| Governance Domain | Minimum Controls (Any Agent) | Enhanced Controls (Autonomy L3+) | ISO 42001 Anchor | NIST AI RMF Anchor |
|---|---|---|---|---|
| Agent inventory | Registry with autonomy classification | Classification-triggered review cycle | A.3.2, A.6.1 | GOVERN 1.6 |
| Bounded autonomy | Documented scope per agent | Hard technical enforcement + policy | A.6.1, A.9.2 | MAP 2.2 |
| Human checkpoints | Defined for irreversible actions | Risk-scored checkpoint matrix | A.9.2 | GOVERN 3.2, MAP 3.5 |
| Tool/API access | Whitelist documented | Dynamic access review quarterly | A.4.4, A.10 | MANAGE 2.2 |
| Inter-agent comms | Authentication + logging | Signed instruction chains | None | None |
| Audit/IR | Tool call + override logs | Full session replay + automated alerts | A.8.4 | MANAGE 2.4, MANAGE 4.1 |

Need this as a working implementation tool? The AI Controls Professional includes an Agentic AI Governance Module with policy templates, control checklists, and a board reporting pack covering all six domains.

Where Existing Frameworks Fall Short

Three critical gaps remain unaddressed by any published framework as of March 2026.

- Inter-agent trust protocols. No standard defines how agents should authenticate each other, validate delegation authority, or refuse instructions from compromised orchestrator agents. OWASP flagged it (ASI07). Nobody has published the solution.

- Dynamic tool-chaining governance. Agents that discover and chain tools at runtime—rather than using a static whitelist—introduce risk that static governance documents can't address. The governance framework needs to handle cases where the agent's tool set changes during execution.

- Continuous-learning agents. Agents that update their behavior based on production feedback create a moving governance target. The agent you validated last Tuesday isn't the same agent running today. ISO 42001 Clause 10.1 (continual improvement) and NIST AI RMF MEASURE functions provide anchors, but neither specifies how to track behavioral drift in autonomous systems.
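Since no standard yet specifies how to track drift, the sketch below shows one possible proxy, assumed here rather than drawn from any framework: compare the agent's tool-call mix against the mix observed during validation using total variation distance, and separately flag tools that never appeared during validation (the runtime tool-chaining gap). The 0.2 threshold is an arbitrary placeholder that each organization would need to calibrate.

```python
from collections import Counter
from typing import Dict, Iterable, Set

def tool_distribution(calls: Iterable[str]) -> Dict[str, float]:
    """Share of tool calls going to each tool."""
    counts = Counter(calls)
    total = sum(counts.values())
    return {tool: n / total for tool, n in counts.items()} if total else {}

def total_variation(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Total variation distance: 0 = identical usage, 1 = completely disjoint."""
    return 0.5 * sum(abs(p.get(t, 0.0) - q.get(t, 0.0)) for t in set(p) | set(q))

def new_tools(baseline_calls: Iterable[str], current_calls: Iterable[str]) -> Set[str]:
    """Tools used now that never appeared during validation (runtime tool chaining)."""
    return set(current_calls) - set(baseline_calls)

def needs_revalidation(baseline_calls, current_calls, threshold: float = 0.2) -> bool:
    baseline_calls, current_calls = list(baseline_calls), list(current_calls)
    drift = total_variation(tool_distribution(baseline_calls), tool_distribution(current_calls))
    return drift > threshold or bool(new_tools(baseline_calls, current_calls))

validated = ["search"] * 8 + ["create_po"] * 2
today = ["search"] * 2 + ["create_po"] * 8
print(round(total_variation(tool_distribution(validated), tool_distribution(today)), 2))  # 0.6
print(needs_revalidation(validated, today))  # True
```

Tool-call mix is a coarse signal: it catches an agent that starts doing different things, not one that does the same things with different parameters.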

Filling these gaps requires overlaying the IMDA and Berkeley governance concepts onto your existing management system, implementing OWASP mitigations as technical controls, and building agent-specific procedures that go beyond what the standards currently require.

The Digital Contractors Metaphor

One mental model that's gaining traction—originally proposed by EWSolutions—treats AI agents as "digital contractors." Like a human contractor, each agent has a defined scope of work, must request specific permissions, operates under oversight, and has accountability assigned to a human "contract manager."

This metaphor works for non-technical executives who need to understand agent governance without reading Annex A controls. A sales agent that can only read CRM data and must request approval before creating a discount over 10% is easy to explain through this lens.

But the metaphor has real limits. Agents can't be sued. They don't understand contractual intent. They can misgeneralize their goals in ways that no human contractor would. And they require much tighter technical constraints than human contractors because they don't have professional judgment or ethical reasoning to fall back on when the rules don't cover a situation. Use the metaphor for board communication. Don't use it as an architecture guide.

Board-Level Reporting on Agentic AI Risk

If your organization deploys agents and the board hasn't seen a risk report, that's a governance failure—not an oversight. Here are the metrics that matter.

- Inventory metrics: total agents in production, distribution by autonomy level (L1 through L5), agents added and retired in the reporting period.

- Operational metrics: human escalation events over the past 30 days, bounded autonomy violations (agents that attempted actions outside approved scope), tool/API calls blocked versus approved.

- Risk metrics: open agent-related incidents by category (goal hijack, unauthorized tool invocation, scope breach, rogue behavior), agents operating without defined human checkpoints, agents without audit logging.

- Compliance alignment: percentage of agents mapped to ISO 42001 Annex A controls, NIST AI RMF GOVERN/MANAGE coverage, and progress against IMDA four-dimension baseline.
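Most of these metrics fall out of the agent registry and the audit log. The sketch below computes the inventory, operational, and risk figures over a 30-day window; it assumes agents and events are plain dictionaries, and the field names and event type strings are hypothetical.

```python
from collections import Counter
from datetime import datetime, timedelta
from typing import Dict, List

def board_metrics(agents: List[dict], events: List[dict], now: datetime) -> Dict[str, object]:
    """Summarize inventory, operational, and risk metrics for a board report.

    agents: dicts with 'autonomy' (1-5), 'has_checkpoints', 'has_audit_logging'.
    events: dicts with 'type' and 'at' (datetime); event type names are illustrative.
    """
    recent = [e for e in events if now - timedelta(days=30) <= e["at"] <= now]
    kinds = Counter(e["type"] for e in recent)  # missing types count as 0
    return {
        "agents_in_production": len(agents),
        "agents_by_autonomy": dict(sorted(Counter(f"L{a['autonomy']}" for a in agents).items())),
        "escalations_30d": kinds["escalation"],
        "boundary_violations_30d": kinds["boundary_violation"],
        "tool_calls_blocked_30d": kinds["tool_call_blocked"],
        "tool_calls_approved_30d": kinds["tool_call_approved"],
        "agents_without_checkpoints": sum(not a["has_checkpoints"] for a in agents),
        "agents_without_audit_logging": sum(not a["has_audit_logging"] for a in agents),
    }

now = datetime(2026, 3, 31)
agents = [
    {"autonomy": 1, "has_checkpoints": True, "has_audit_logging": True},
    {"autonomy": 4, "has_checkpoints": False, "has_audit_logging": True},
]
events = [
    {"type": "escalation", "at": datetime(2026, 3, 20)},
    {"type": "tool_call_blocked", "at": datetime(2026, 3, 25)},
    {"type": "escalation", "at": datetime(2026, 1, 5)},  # outside the 30-day window
]
report = board_metrics(agents, events, now)
print(report["escalations_30d"], report["agents_by_autonomy"])  # 1 {'L1': 1, 'L4': 1}
```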

The AI Controls Professional Board Reporting Pack includes a pre-built agentic AI risk dashboard template, sample narrative structure, and the metrics framework described above.

Implementation Roadmap for SMEs

This isn't a 12-month enterprise program. For an SME with 10–250 employees deploying 3–10 agents, you're looking at 11 weeks to reach a defensible governance baseline.

Phase 1: Inventory and Classification (Weeks 1–2). Catalog every agent in production and development. Assign autonomy levels. Identify tools, APIs, and data sources each agent accesses. Assign a responsible human owner to every agent. If nobody knows who owns an agent, shut it down until you figure it out.

Phase 2: Bounded Autonomy Definition (Weeks 3–4). For each agent, document what it's allowed to do. Specify financial limits, data access boundaries, approved tool lists, and maximum task durations. Write the boundary policy first, then enforce it technically. If you do it the other way around, you'll have technical controls that nobody can audit.

Phase 3: Tool and API Access Controls (Weeks 5–7). Implement deny-by-default permissioning. Tie API credentials to agent identity, not shared service accounts. Set up quarterly access reviews. This takes longest because it usually requires coordination with your engineering team and changes to credential management.

Phase 4: Human-in-the-Loop Integration (Weeks 8–9). Define which actions require pre-approval. Set up escalation workflows. Test the override process end-to-end—don't just document it, actually run a simulation where a human has to stop an agent mid-execution.

Phase 5: Audit, Monitoring, and Board Reporting (Weeks 10–11 and ongoing). Deploy logging for all agent actions. Create agent-specific incident response procedures. Build the board dashboard. Run your first board report. Then set a quarterly review cadence.

Ready to implement? The AI Controls Professional includes a 6-month implementation project plan, policy templates, and the Agentic AI Governance Module covering all five phases. The AI Controls Starter provides the unified controls matrix if you want to start with assessment and gap analysis before full implementation.