What the Korea AI Basic Act Is - and What It Is Not

South Korea's AI Basic Act matters because it moves Korea out of the "policy discussion" category and into the "governance reality" category. The Act took effect on 22 January 2026, as recorded in the official Korean legal database. Firms can therefore no longer treat Korea as merely a watchlist jurisdiction. It is now an operating jurisdiction for AI governance. See the MSIT announcement and the official law entry.

But don't make the lazy mistake of describing it as an EU AI Act clone. It isn't. The Korea AI Basic Act is a framework law aimed at strengthening national AI competitiveness while building a trustworthy AI foundation. That makes it structurally different from the EU AI Act's full risk architecture, conformity logic, and operator-obligation model. Korea's law is more about setting a national governance frame, creating trust expectations, defining higher-scrutiny concepts, and enabling implementation through lower statutes and guidance.

The practical consequence is that compliance teams need to read the law correctly. If you expect a one-for-one list of EU-style prohibited practices, detailed annex logic, and immediate conformity-assessment machinery, you'll misclassify the Korean market. The better way to frame it is this: Korea now has a formal AI governance regime, and companies need to show they can operate within a trustworthiness-centered control environment as the implementation layer matures.

This is why the Enforcement Decree and the transparency-guidance layer matter. The Act creates the top-level structure. The decree and related guidance tell companies how that structure will be made operational in practice, and the transparency provision includes a one-year grace period before administrative fines are imposed. Together, these signal that Korea is moving from legislative passage to implementation detail. If your product roadmap includes Korea, you should be preparing before customers or procurement teams start asking for proof. The relevant notice is published on the MSIT site.

Official source links: MSIT AI Basic Act announcement · MSIT draft Enforcement Decree notice · official law database entry

Need one cross-framework workflow? The AI Controls Starter gives you the inventory, gap analysis, and evidence structure to classify Korea-facing AI services alongside ISO 42001 and NIST AI RMF.


The Policy Logic of the Act

Korea is trying to do two things at once. First, it wants to accelerate AI development as a national competitiveness issue. Second, it wants to create a trust-based foundation so the market can scale without pretending AI risk is somebody else's problem. That dual objective is visible in the official MSIT framing: the law is about national governance, industrial support, and safe and trustworthy infrastructure for high-impact AI and generative AI.

That matters commercially because Korea is not regulating from a purely punitive posture. It is using the law to shape the market. The Act supports R&D, standardization, training data policy, SMEs, startups, AI clusters, and AI data centers. At the same time, it defines high-impact AI and generative AI as governance targets and establishes transparency, safety, and operator-responsibility concepts. So the buyer question is not just "what am I prohibited from doing?" It is also "what governance posture must I show if I want to be seen as a serious operator in Korea?"

For SMEs, that means the winning move is not to wait for every implementation detail to settle before acting. The winning move is to build a credible trustworthiness baseline that can absorb those details as they harden. If you already have ISO 42001 or NIST AI RMF work underway, this is manageable. If you don't, then Korea becomes another proof point that a no-governance operating model is no longer defensible.

- Framework law: a law that establishes national governance direction and obligations at a higher level, leaving significant implementation detail to decrees, guidance, and follow-on instruments.

- Trustworthy AI foundation: the policy expectation that AI adoption must be accompanied by transparency, safety, and operator responsibility rather than pure growth messaging.

- High-impact AI: a category the law explicitly recognizes as needing stronger scrutiny, even if Korea's structure differs from the EU's specific "high-risk" architecture.

- Implementation layer: the lower statutes, decree details, procedures, and guidance that make a framework law operational for companies in practice.

Trustworthy AI and High-Impact Use Cases

One of the most important signals in the Act is that Korea is not treating AI trustworthiness as optional branding language. It explicitly ties the law to transparency, safety, and operator responsibilities. That gives you the core of the enterprise compliance translation even before you get into line-by-line implementation rules.

In practical terms, this means Korean market readiness is going to reward companies that can show they know which AI systems matter, where higher-impact use cases exist, who owns the risk decisions, and how transparency and safety are handled. A company that cannot explain its AI inventory, use-case classifications, and operating controls is going to look immature fast.

| Theme | What the law signals | Practical compliance implication |
|---|---|---|
| Trustworthiness | AI growth must be paired with credible safeguards | Document policy, accountability, monitoring, and review instead of relying on marketing claims |
| High-impact AI | Certain uses need stronger scrutiny | Classify systems by impact and escalation thresholds before deployment |
| Generative AI | GenAI is not treated as a generic software tool | Apply dedicated controls for outputs, user transparency, and operational oversight |
| Operator responsibility | Responsibility does not disappear because a third-party model is used | Assign named owners for product, legal, vendor, and monitoring functions |

The blunt version: if you are shipping a Korea-facing AI product and still operating on "the model vendor handles safety," your governance model is weak. The law's center of gravity is not technical novelty. It is accountable operation. That applies whether the system is built internally, procured, or wrapped around an external API.
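A minimal sketch of an inventory record that makes this accountability check concrete. The field names, the `Sourcing` values, and the `ownership_gaps` helper are illustrative assumptions, not terms defined by the Act or its decree.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Sourcing(Enum):
    """How the system is obtained (hypothetical categories)."""
    INTERNAL = "internal"
    PROCURED = "procured"
    EXTERNAL_API = "external_api"


@dataclass
class AISystem:
    name: str
    sourcing: Sourcing
    generative: bool
    higher_impact: bool
    risk_owner: Optional[str] = None  # a named person inside the company


def ownership_gaps(inventory: list[AISystem]) -> list[str]:
    """Return the names of systems with no named internal risk owner.

    Sourcing is deliberately ignored: a product wrapped around an
    external API still needs an owner on the operator's side.
    """
    return [system.name for system in inventory if not system.risk_owner]


# Example data is invented for illustration.
inventory = [
    AISystem("support-chatbot", Sourcing.EXTERNAL_API, generative=True, higher_impact=False),
    AISystem("loan-screening", Sourcing.INTERNAL, generative=False, higher_impact=True,
             risk_owner="J. Kim"),
]
print(ownership_gaps(inventory))
```

In this example the chatbot built on a third-party model is the one flagged, which is exactly the "vendor handles safety" gap described above.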

Governance Requirements for Companies

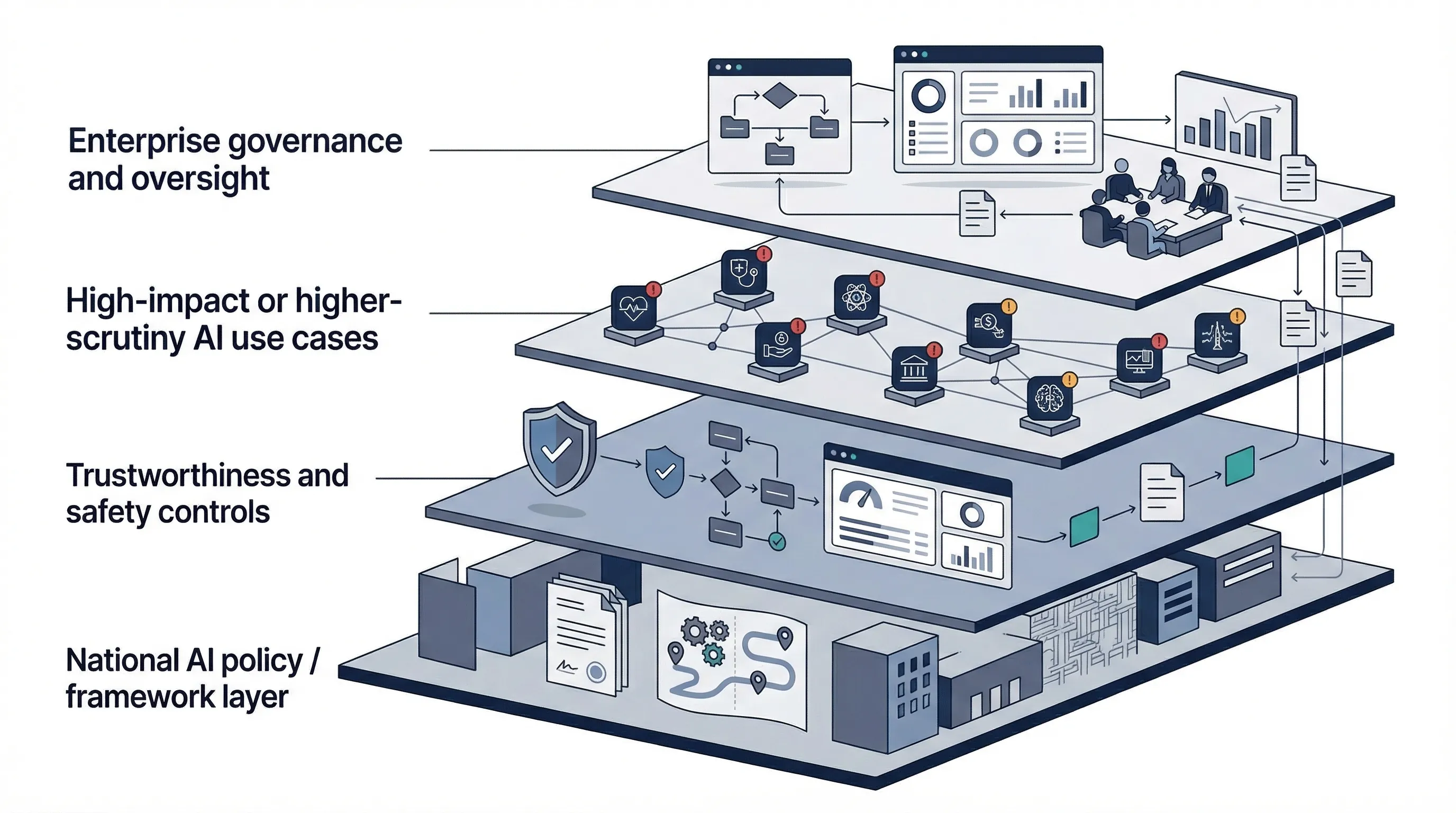

Even before every detail is final, the governance direction is clear enough to act on. Companies need an ownership model, internal policies, review mechanisms, and a way to classify and monitor AI systems that create trust, safety, or public-impact concerns. That is why this page should not be read as a legal summary only. It should be used as a governance design brief.

For most organizations, the minimum viable model has five components: board or executive accountability, named internal roles, documented policies, system classification and monitoring, and supplier governance. That is enough to begin building a Korea-ready evidence stack. It also maps cleanly to ISO 42001 and NIST AI RMF, which means you do not need a separate governance universe for Korea if your baseline is well designed.

| Governance artifact | Owner | Evidence |
|---|---|---|
| AI policy and trustworthiness statement | Executive sponsor + compliance lead | Approved policy, communication record, version history |
| AI system inventory and classification | Product owner + governance owner | Inventory register, impact classification sheet, review record |
| Role allocation and escalation matrix | Management team | RACI, escalation workflow, committee terms of reference |
| Monitoring and incident workflow | Operations + legal + security | Monitoring SOP, incident log, corrective action record |
| Third-party model and vendor governance | Procurement + engineering + compliance | Vendor assessment, contract review notes, change-management log |

This is also where a lot of SMEs fail. They try to solve trust with a policy PDF and no operating mechanism. Korea, like every serious market, will punish that eventually. Governance means ownership, review, evidence, and change control. Not just intent.

Common failure pattern: companies say they are "aligned to trustworthy AI" but cannot show who owns high-impact classification, when management reviews AI risk, or how supplier changes are tracked. That is governance theater, not governance.
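One way to make the difference between a policy PDF and an operating mechanism checkable is to compare the evidence you actually hold against the evidence each artifact calls for. The sketch below assumes the five artifacts and evidence items from the table in this section; the `evidence_gaps` function and the sample `collected` data are hypothetical.

```python
# Evidence expected per governance artifact, taken from the table in this section.
REQUIRED_EVIDENCE: dict[str, set[str]] = {
    "AI policy and trustworthiness statement": {
        "approved policy", "communication record", "version history"},
    "AI system inventory and classification": {
        "inventory register", "impact classification sheet", "review record"},
    "Role allocation and escalation matrix": {
        "RACI", "escalation workflow", "committee terms of reference"},
    "Monitoring and incident workflow": {
        "monitoring SOP", "incident log", "corrective action record"},
    "Third-party model and vendor governance": {
        "vendor assessment", "contract review notes", "change-management log"},
}


def evidence_gaps(collected: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each artifact to the evidence items still missing (sorted)."""
    gaps: dict[str, list[str]] = {}
    for artifact, required in REQUIRED_EVIDENCE.items():
        missing = required - collected.get(artifact, set())
        if missing:
            gaps[artifact] = sorted(missing)
    return gaps


# Invented example: a policy exists, the inventory is complete, nothing else is.
collected = {
    "AI policy and trustworthiness statement": {"approved policy"},
    "AI system inventory and classification": {
        "inventory register", "impact classification sheet", "review record"},
}
for artifact, missing in evidence_gaps(collected).items():
    print(f"{artifact}: missing {', '.join(missing)}")
```

An artifact with no collected evidence at all shows up with its full list, which is the "policy PDF and nothing else" pattern in practice.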

Korea AI Basic Act vs EU AI Act

The comparison only becomes useful if you stop trying to force symmetry. The EU AI Act is a full horizontal regulation with defined operator roles, prohibited practices, high-risk AI categories, and conformity logic. Korea's AI Basic Act is a national framework law with a stronger policy-development posture and a trust-based foundation approach.

| Dimension | Korea AI Basic Act | EU AI Act |
|---|---|---|
| Legal design | Framework law | Comprehensive regulation |
| Policy emphasis | AI growth plus trustworthiness foundation | Risk-based obligations and market governance |
| Risk architecture | Higher-level signal with implementation detail maturing | Explicit multi-layer risk structure |
| Conformity logic | Not an EU-style full conformity regime | Core element for high-risk AI contexts |
| Suggested first implementation step | Trustworthiness governance baseline | Role and risk classification |

The commercial implication is clear. If you sell into both the EU and Korea, run separate front-end compliance logic for each market and feed both into one shared evidence system. Korea starts with trustworthiness and governance readiness. The EU starts with role and risk classification. If you use one flat checklist for both, you will either oversimplify Korea or overcomplicate the EU.

Korea AI Basic Act vs ISO 42001 and NIST AI RMF

This is where the page becomes commercially useful instead of merely interesting. The Act tells you the market signal. ISO 42001 and NIST AI RMF tell you how to operationalize it. If you already maintain an AI inventory, policy framework, risk assessment process, management review, and monitoring workflow, you are not starting from zero for Korea. You are adapting an existing governance system to a new jurisdictional expectation.

| Korea requirement theme | ISO 42001 | NIST AI RMF | Suggested evidence |
|---|---|---|---|
| Leadership and accountability | Clauses 5.1-5.3 | GOVERN | Policy approval, RACI, committee charter, management review minutes |
| Risk and impact management | Clauses 6.1, 8.2-8.4 | MAP / MEASURE / MANAGE | Risk register, impact assessment, treatment plan, monitoring thresholds |
| Transparency and trustworthiness | Annex A controls + documented information | GOVERN / MAP | Use-case rules, stakeholder communication, user disclosures, review logs |
| Supplier and model oversight | Operation + support controls | GOVERN / MANAGE | Vendor assessment, contracts, change-control log, fallback plan |

That is the efficient route. Build one operating model that can satisfy multiple jurisdictions and standards, then tune the front-end interpretation per market. Korea does not require you to reinvent governance. It requires you to stop pretending informal governance is enough.
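The crosswalk in this section can also be kept as data, so that work already done under one framework can be looked up by Korea theme. This is a sketch only: the mapping is copied from the table above, and the `CROSSWALK` structure and `themes_for` helper are hypothetical names.

```python
# Korea theme -> (ISO 42001 reference, NIST AI RMF functions), per the table above.
CROSSWALK: dict[str, tuple[str, tuple[str, ...]]] = {
    "Leadership and accountability": (
        "Clauses 5.1-5.3", ("GOVERN",)),
    "Risk and impact management": (
        "Clauses 6.1, 8.2-8.4", ("MAP", "MEASURE", "MANAGE")),
    "Transparency and trustworthiness": (
        "Annex A controls + documented information", ("GOVERN", "MAP")),
    "Supplier and model oversight": (
        "Operation + support controls", ("GOVERN", "MANAGE")),
}


def themes_for(nist_function: str) -> list[str]:
    """List the Korea themes that an existing NIST AI RMF function supports."""
    wanted = nist_function.upper()
    return [theme for theme, (_, functions) in CROSSWALK.items()
            if wanted in functions]


# If GOVERN work already exists, these are the Korea themes it contributes to.
print(themes_for("GOVERN"))
```

Keeping the mapping in one place is what lets the per-market interpretation change without duplicating the underlying evidence.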

90-Day Korea Readiness Plan

You do not need a year-long transformation to get to a credible baseline. You need an inventory, ownership, a classification method, policy discipline, and evidence.

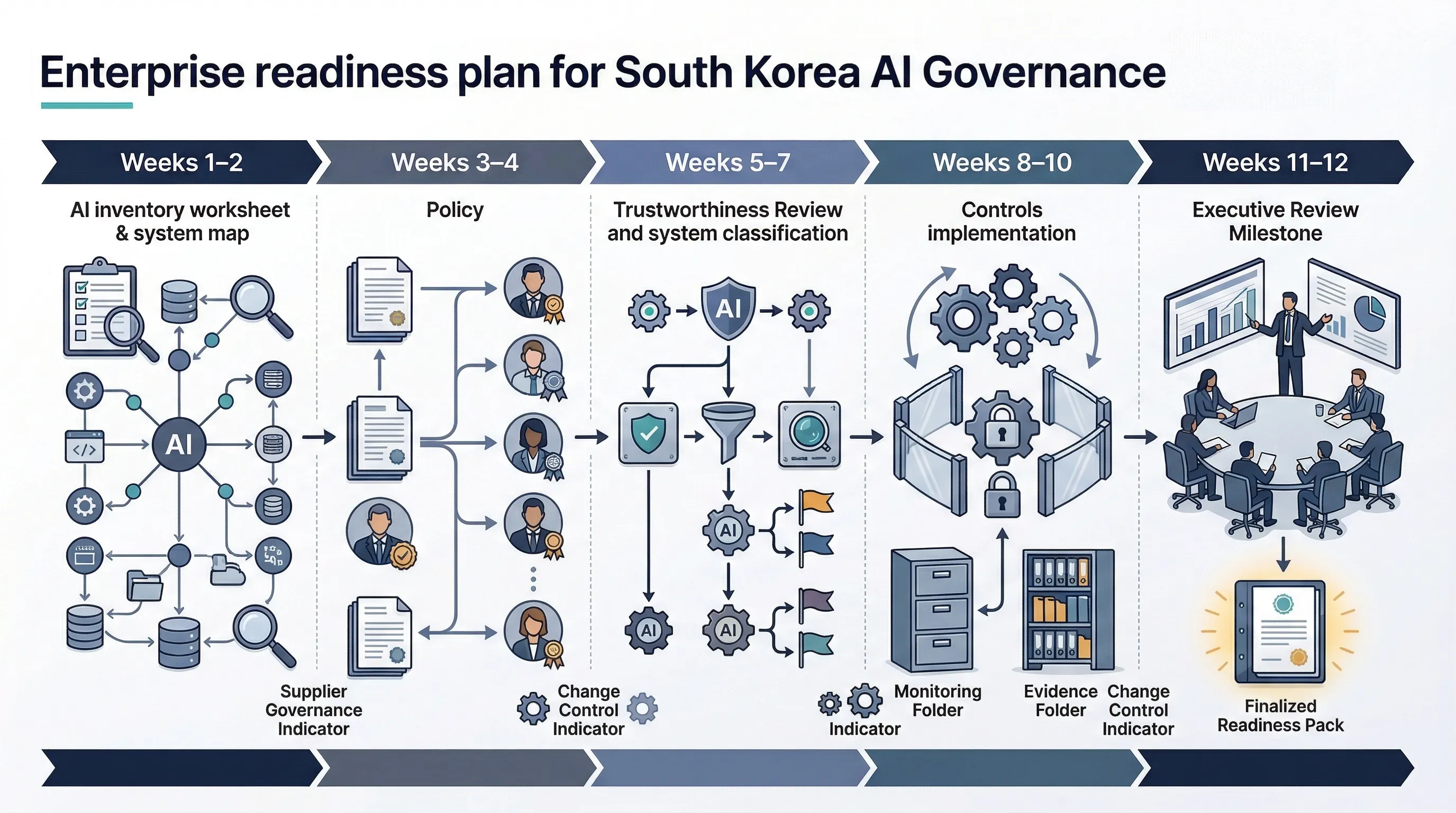

- Weeks 1-2: build the AI inventory and identify every Korea-facing product, feature, model dependency, and public-impact use case.

- Weeks 3-4: assign executive and operational ownership, publish a minimum governance policy, and define trustworthiness review criteria.

- Weeks 5-7: classify higher-impact systems, review GenAI use cases, and map transparency and monitoring controls.

- Weeks 8-10: implement supplier governance, change control, incident workflow, and evidence retention.

- Weeks 11-12: run management review, test escalation paths, and finalize the Korea readiness evidence pack.

If you fail to do this in 90 days, the blocker is usually not legal complexity. It is ownership ambiguity. No accountable sponsor. No policy owner. No one tracking vendor changes. No one signing off on high-impact use cases. Fix the operating model and the legal mapping becomes manageable.
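To turn the weekly phases above into calendar dates that an accountable sponsor can sign off on, a small scheduling sketch is enough. The phase labels are shortened from the list above; the start date and the `schedule` function are illustrative assumptions.

```python
from datetime import date, timedelta

# (first week, last week, short label) for each phase of the plan above.
PHASES = [
    (1, 2, "Inventory and Korea-facing scope"),
    (3, 4, "Ownership, minimum policy, review criteria"),
    (5, 7, "Classification, GenAI review, control mapping"),
    (8, 10, "Supplier governance, change control, incidents, retention"),
    (11, 12, "Management review, escalation test, evidence pack"),
]


def schedule(start: date) -> list[tuple[date, date, str]]:
    """Convert week ranges into inclusive (begin, end, label) date ranges."""
    rows = []
    for first_week, last_week, label in PHASES:
        begin = start + timedelta(weeks=first_week - 1)
        end = start + timedelta(weeks=last_week) - timedelta(days=1)
        rows.append((begin, end, label))
    return rows


# Hypothetical kickoff date, chosen only for the example.
for begin, end, label in schedule(date(2026, 2, 2)):
    print(f"{begin.isoformat()} to {end.isoformat()}: {label}")
```

The twelve weeks total 84 days, which leaves a few days of slack inside the 90-day window for sign-off.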

Need the templates, not just the theory? Korea readiness becomes real when you can show policy, ownership, and evidence. That is an AI Controls Professional problem, not just an AI Controls Starter spreadsheet problem.
