What ISO 42001 Is — and What It Is Not

ISO/IEC 42001:2023 defines the requirements for establishing, implementing, maintaining, and continually improving an AI Management System (AIMS). It is a certifiable international management-system standard for AI governance. That distinction matters: NIST AI RMF is a framework you can align to, while ISO/IEC 42001 can support an audit and certification route when implemented and assessed by competent parties.

The standard follows the same Harmonized Structure (Clauses 4 to 10) as ISO 27001 and ISO 9001, which means organizations with existing management systems can reuse significant portions of their documentation. Roughly 50–60% of the procedural infrastructure transfers directly if you've already got ISO 27001.

But here's what ISO 42001 is not. It isn't an AI ethics statement. It doesn't tell you which algorithms to use. It doesn't set technical performance benchmarks. And it doesn't replace sector-specific regulations like the EU AI Act or Colorado AI Act. What it does is give you a management system that forces structured risk assessment, documented controls, ongoing monitoring, and continuous improvement for every AI system within your defined scope. The requirements in Clauses 4–10 are mandatory. Annex A controls are selected through your risk treatment process rather than mandated outright, but don't interpret that as optional. Auditors expect evidence for every applicable Annex A control, and exclusions must be justified in your Statement of Applicability.

One more clarification before we get into the clauses. ISO 42001 covers the management system around your AI, not the AI itself. It won't validate your model's accuracy or tell you whether your training data is representative. What it does is ensure you have structured processes for assessing those questions, acting on the answers, documenting what you did, and improving over time. That distinction matters when you're explaining the standard to your engineering team—they'll want to know whether this is "yet another compliance exercise" or something that actually changes how they work. The answer is both.

Already have ISO 27001? You're 50–60% of the way there. The shared Harmonized Structure means your document control, internal audit, management review, and corrective action processes transfer directly. The new work is AI-specific: risk assessments, impact assessments, Annex A controls, and lifecycle management.

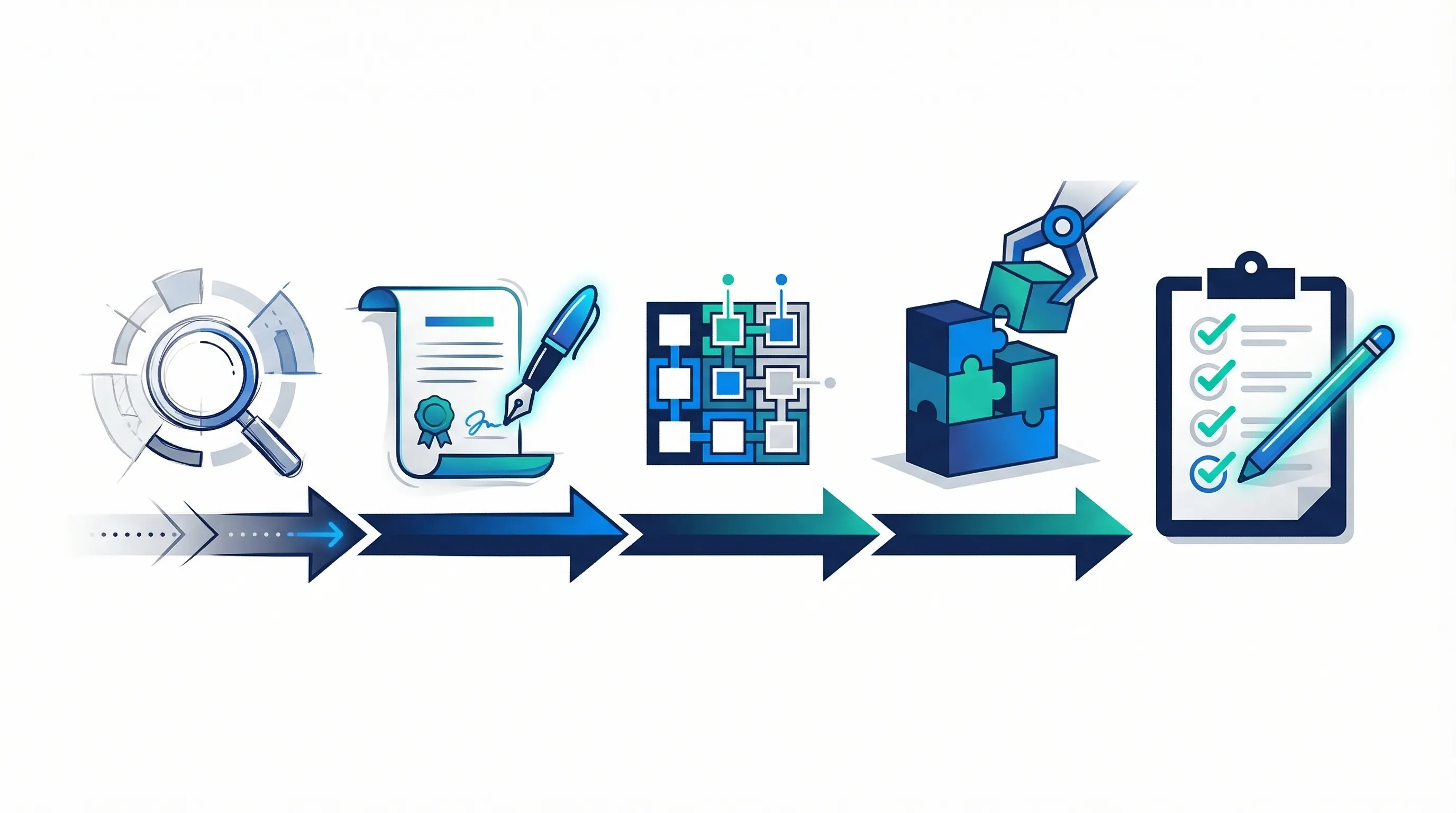

The Implementation Sequence — Clauses 4 to 10

Implementation follows the standard's own clause sequence. Each clause builds on the previous one, so skipping ahead creates gaps that auditors will find. Here's what each requires in practice.

Clause 4 — Context of the Organization

Define your AIMS scope: which AI systems, which business units, which locations. Identify interested parties (regulators, customers, employees, affected individuals) and their requirements. Document internal and external issues that could affect the AIMS. This is where most organizations scope too broadly—don't try to certify every AI tool in the company. Start with the AI systems that carry the most risk or generate the most revenue.

A practical scoping exercise for a 50-person company typically yields 3–8 material AI systems. Customer-facing chatbots, internal recommendation engines, automated fraud detection, AI-assisted hiring tools, and predictive analytics models are common candidates. Shadow AI—tools that employees adopted without formal approval—must be inventoried too. If it touches customer data or makes decisions that affect people, it's in scope until you've justified excluding it.
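
The scoping rule above can be sketched as a small inventory filter. This is a minimal illustration, not something the standard prescribes: the `AISystem` fields, the `in_scope` helper, and the sample systems are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """One row in a hypothetical AI inventory (field names are illustrative)."""
    name: str
    touches_customer_data: bool
    affects_people: bool            # makes or informs decisions about individuals
    formally_approved: bool         # False marks shadow AI
    exclusion_rationale: str = ""   # documented justification, if any


def in_scope(system: AISystem) -> bool:
    """Default-in rule: a material system stays in scope until an
    exclusion has been justified in writing."""
    material = system.touches_customer_data or system.affects_people
    return material and not system.exclusion_rationale.strip()


inventory = [
    AISystem("support-chatbot", True, False, True),
    AISystem("hiring-screener", False, True, True),
    AISystem("meeting-notes-plugin", False, False, False),
    AISystem("legacy-churn-model", True, False, True,
             exclusion_rationale="Decommissioned; no production traffic"),
]

scoped = [s.name for s in inventory if in_scope(s)]
shadow = [s.name for s in inventory if not s.formally_approved]
print("In scope:", scoped)
print("Shadow AI to review:", shadow)
```

Keeping the exclusion rationale on the inventory row itself means the justification travels with the system when the scope statement is reviewed.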

Clause 5 — Leadership

Top management must demonstrate commitment. That means an AI Policy (Clause 5.2) signed by someone with actual authority, not a middle manager. Roles and responsibilities for the AIMS must be formally assigned. The AI Policy sets the tone—it covers responsible AI principles, compliance obligations, risk appetite, and the organization's commitment to continual improvement. If you've got an ISO 27001 information security policy, the AI Policy sits alongside it (separate document, not an addendum).

Clause 6 — Planning

This is the heaviest clause. It requires your AI risk assessment methodology (6.1.1–6.1.2), risk treatment plan (6.1.3), Statement of Applicability listing all Annex A controls with justifications (6.1.3), and AI system impact assessments for each system in scope (6.1.4). You'll also set measurable AI objectives (6.2). The impact assessment is unique to ISO 42001—it doesn't exist in ISO 27001—and it evaluates the potential impact of your AI systems on individuals, groups, and society. Auditors check this carefully.

The risk assessment must cover AI-specific risk categories that wouldn't appear in a standard information security risk register: algorithmic bias and discrimination, training data quality and representativeness, model drift and degradation, transparency and explainability failures, and unintended consequences of automated decision-making. Each risk needs a likelihood rating, an impact rating, and a documented treatment decision—accept, mitigate, transfer, or avoid. Your SoA then maps the treatment decisions to specific Annex A controls.
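
A risk register entry with these fields might look like the sketch below. The 1–5 scales, the likelihood × impact score, and the control references attached to each risk are assumptions for illustration; the standard leaves the scoring method to you, and the system names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Treatment(Enum):
    ACCEPT = "accept"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    AVOID = "avoid"


@dataclass
class AIRisk:
    system: str
    category: str
    likelihood: int        # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int            # assumed scale: 1 (negligible) to 5 (severe)
    treatment: Treatment
    controls: tuple = ()   # Annex A references carried into the SoA

    def __post_init__(self):
        # Reject ratings outside the assumed 1-5 scale.
        for field_name in ("likelihood", "impact"):
            value = getattr(self, field_name)
            if not 1 <= value <= 5:
                raise ValueError(f"{field_name} must be 1-5, got {value}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def needs_control_mapping(risks):
    """Mitigated risks that have no Annex A control mapped yet."""
    return [r for r in risks
            if r.treatment is Treatment.MITIGATE and not r.controls]


register = [
    AIRisk("hiring-screener", "algorithmic bias", 3, 5, Treatment.MITIGATE, ("A.7",)),
    AIRisk("fraud-model", "model drift", 4, 3, Treatment.MITIGATE),
    AIRisk("support-chatbot", "explainability failure", 2, 2, Treatment.ACCEPT),
]

for risk in sorted(register, key=lambda r: r.score, reverse=True):
    print(f"{risk.score:>2}  {risk.system}: {risk.category} -> {risk.treatment.value}")

print("Unmapped:", [r.system for r in needs_control_mapping(register)])
```

The `needs_control_mapping` check surfaces the gap auditors tend to look for: a treatment decision of "mitigate" with nothing in the SoA behind it.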

Clause 7 — Support

Clause 7 covers resources, competence, awareness, communication, and documented information. The competence requirement (7.2) catches organizations off guard: you need training records that prove your staff understand AI-specific risks, not just generic information security training. If you're using AI in production, someone in the organization needs demonstrable competence in AI governance, and the records need to prove it.

Clause 8 — Operation

Clause 8 covers operational planning and control (8.1), carrying out AI risk assessments (8.2) and risk treatment (8.3) across the AI lifecycle, and running impact assessments for new or changed systems (8.4). This is where the management system meets daily operations. Every time you deploy a new model, change an existing one, or integrate a third-party AI service, the operational procedures kick in. Evidence here means timestamped records showing you actually ran the assessments before deployment, not retrospective documentation.
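
One way to make the "before deployment" evidence checkable is a simple timestamp gate. The sketch below assumes you record when each assessment was completed; `DeploymentRecord` and `gate_findings` are hypothetical names, not part of the standard or of any particular tool.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DeploymentRecord:
    system: str
    deployed_at: datetime
    risk_assessed_at: Optional[datetime] = None
    impact_assessed_at: Optional[datetime] = None


def gate_findings(record: DeploymentRecord) -> list:
    """Return problems with the assessment evidence; an empty list means
    both assessments are timestamped before the deployment."""
    findings = []
    checks = (
        ("risk assessment", record.risk_assessed_at),
        ("impact assessment", record.impact_assessed_at),
    )
    for label, completed_at in checks:
        if completed_at is None:
            findings.append(f"{label} missing")
        elif completed_at >= record.deployed_at:
            findings.append(f"{label} recorded after deployment (retrospective)")
    return findings


go_live = datetime(2026, 3, 10, 9, 0, tzinfo=timezone.utc)
clean = DeploymentRecord(
    "support-chatbot v2", go_live,
    risk_assessed_at=datetime(2026, 3, 3, 14, 0, tzinfo=timezone.utc),
    impact_assessed_at=datetime(2026, 3, 4, 10, 0, tzinfo=timezone.utc),
)
late = DeploymentRecord(
    "fraud-model v5", go_live,
    risk_assessed_at=datetime(2026, 3, 3, 14, 0, tzinfo=timezone.utc),
    impact_assessed_at=datetime(2026, 3, 12, 10, 0, tzinfo=timezone.utc),
)
print(gate_findings(clean))
print(gate_findings(late))
```

Running a check like this in the release pipeline, rather than at audit time, is what turns the procedure into a record.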

Clauses 9–10 — Evaluation and Improvement

These clauses cover monitoring and measurement (9.1), internal audit (9.2), management review (9.3), and nonconformity and corrective action (10.2). They are largely identical to ISO 27001's evaluation clauses. If you've got an existing internal audit programme and management review process, you'll adapt them to include AI-specific criteria. The key addition: your monitoring must cover AI system performance, bias indicators, and risk treatment effectiveness, not just compliance metrics.
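
As an illustration of a bias indicator, the sketch below computes per-group selection rates and the ratio between the lowest and highest rate. ISO 42001 does not prescribe this or any other metric; the ratio, the 0.8 alert threshold (borrowed from the common "four-fifths" heuristic), and the function names are all assumptions for the example.

```python
from collections import defaultdict

ALERT_THRESHOLD = 0.8  # assumed threshold, not taken from the standard


def selection_rates(outcomes):
    """outcomes: iterable of (group, selected) pairs -> rate per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in outcomes:
        totals[group] += 1
        positives[group] += int(selected)
    return {group: positives[group] / totals[group] for group in totals}


def min_max_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Toy decision log: group "a" selected 4 of 5 times, group "b" 2 of 5.
log = [("a", True)] * 4 + [("a", False)] + [("b", True)] * 2 + [("b", False)] * 3

rates = selection_rates(log)
ratio = min_max_ratio(rates)
print(rates, round(ratio, 2))
if ratio < ALERT_THRESHOLD:
    print("Bias indicator below threshold: raise for review")
```

Whatever metric you choose, the point for Clause 9.1 is that it is defined in advance, measured on a schedule, and fed into management review.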

| Clause | Required Document | Typical SME Evidence |
|---|---|---|
| 4.3 | AIMS Scope Statement | 1–2 page document defining AI systems, boundaries, and applicability |
| 5.2 | AI Policy | Board-signed policy covering responsible AI principles and commitments |
| 6.1.1–6.1.2 | Risk assessment methodology + results | AI risk register with likelihood, impact, and treatment decisions |
| 6.1.3 | Statement of Applicability | Spreadsheet listing all Annex A controls with applicability justifications |
| 6.1.3 | Risk treatment plan | Action items mapped to risks with owners, timelines, and status |
| 6.1.4 | AI system impact assessment | Per-system assessment of impact on individuals, groups, and society |
| 6.2 | AI objectives | Measurable targets with monitoring indicators |
| 7.2 | Competence records | Training logs showing AI-specific knowledge (not just generic security) |
| 8.1–8.4 | Operational procedures + records | Documented workflows for AI deployment, change, and lifecycle events |
| 9.2 | Internal audit programme + reports | Audit plan, execution records, findings, and closure evidence |
| 9.3 | Management review records | Meeting minutes with inputs, decisions, and action items |
| 10.2 | Nonconformity + corrective action log | Register with root cause analysis, corrections, and effectiveness verification |

Mandatory Documentation Checklist

Every "shall retain documented information" requirement in the standard translates to something an auditor will ask to see. Here's the full list, with an indicator of what you can reuse from ISO 27001 and what needs to be built from scratch.

| Clause | Document | ISO 27001 Reuse? | Notes |
|---|---|---|---|
| 4.3 | AIMS Scope | Partial | Adapt ISMS scope to add AI systems and AI-specific boundaries |
| 5.2 | AI Policy | New | Separate document from information security policy |
| 5.3 | Roles and responsibilities | Partial | Add AI-specific roles (AI governance lead, data steward) |
| 6.1 | Risk assessment methodology | Partial | Extend to cover AI-specific risk criteria (bias, fairness, transparency) |
| 6.1.3 | Statement of Applicability | New | New Annex A controls; cannot reuse ISO 27001 SoA |
| 6.1.3 | Risk treatment plan | Partial | Extend existing plan with AI risk treatments |
| 6.1.4 | AI system impact assessments | New | Unique to ISO 42001; no ISO 27001 equivalent |
| 6.2 | AI objectives | New | AI-specific measurable objectives |
| 7.2 | Competence records | Partial | Add AI-specific competence evidence to existing training records |
| 7.5 | Document control procedure | Yes | Direct reuse from ISO 27001 |
| 8.1 | Operational procedures | Partial | Add AI lifecycle procedures to existing operational controls |
| 9.1 | Monitoring and measurement | Partial | Add AI performance and bias metrics to existing monitoring |
| 9.2 | Internal audit programme | Yes | Extend audit scope to include AIMS; reuse procedure |
| 9.3 | Management review | Yes | Add AI-specific inputs to existing review agenda |
| 10.2 | Corrective action procedure | Yes | Direct reuse from ISO 27001 |

Organizations with no existing ISO certification should expect to create all 15 documents from scratch. That's the bulk of the implementation effort in months 1–4.

Annex A Controls — Scoping Your Statement of Applicability

Annex A contains nine control domains (A.2 through A.10) covering the full spectrum of AI governance. Unlike the requirements in Clauses 4–10, individual Annex A controls can be excluded, but that doesn't make them optional. Every control must appear in your Statement of Applicability, and every exclusion must be justified with a documented risk rationale.
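
These SoA rules lend themselves to an automated completeness check. The sketch below is a minimal example under stated assumptions: `SoAEntry`, `soa_findings`, and the list of weak justifications are hypothetical, and `required_ids` is a placeholder subset rather than the real Annex A control list.

```python
from dataclasses import dataclass

# Phrases treated as non-justifications (an assumed, illustrative list).
WEAK_JUSTIFICATIONS = {"", "n/a", "not relevant", "not applicable"}


@dataclass
class SoAEntry:
    control_id: str
    applicable: bool
    justification: str = ""   # risk rationale, required for exclusions
    evidence_ref: str = ""    # pointer to implementation evidence


def soa_findings(entries, required_ids):
    """List gaps: controls missing from the SoA, applicable controls
    without evidence, and exclusions without a real rationale."""
    findings = []
    listed = {entry.control_id for entry in entries}
    for control_id in sorted(required_ids - listed):
        findings.append(f"{control_id}: missing from SoA")
    for entry in entries:
        if entry.applicable and not entry.evidence_ref.strip():
            findings.append(f"{entry.control_id}: applicable but no evidence reference")
        if (not entry.applicable
                and entry.justification.strip().lower() in WEAK_JUSTIFICATIONS):
            findings.append(f"{entry.control_id}: exclusion lacks a risk rationale")
    return findings


required_ids = {"A.5.2", "A.9.2", "A.10"}  # placeholder subset for the example
soa = [
    SoAEntry("A.5.2", applicable=True, evidence_ref="impact-assessments/2026-Q1"),
    SoAEntry("A.9.2", applicable=False, justification="Not relevant"),
]
for finding in soa_findings(soa, required_ids):
    print(finding)
```

A spreadsheet can enforce the same rules with validation formulas; the value is in checking every row the same way before the auditor does.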

For an SME implementing ISO 42001 for the first time, prioritize these five domains:

- A.2 — AI Policies: The foundation. Your AI policy from Clause 5.2 lands here, plus any supporting topic-specific policies.

- A.3 — Internal Organization: Roles, responsibilities, and governance structure for AI. Who owns AI risk? Who approves new deployments?

- A.5 — Impact Assessment (A.5.2): This is where the AI system impact assessment lives. Auditors treat this as a flagship requirement—missing it is a major nonconformity.

- A.7 — Data Governance: Data quality, provenance, bias detection in training data. Critical for any organization using machine learning.

- A.9 — Use of AI Systems: Human oversight (A.9.2) and responsible use. This is the Annex A anchor for agent governance, though it wasn't designed with agents in mind.

The most documentation-intensive domain is A.6 (AI System Lifecycle), which covers the entire development and deployment pipeline from initial design through retirement. For SMEs using third-party AI models rather than building their own, A.6 still applies—it covers how you integrate, test, validate, deploy, monitor, and eventually decommission AI systems regardless of who built the underlying model. A.10 (Third-Party and Customer Relationships) is critical if you're using vendor AI APIs, embedding third-party models, or providing AI-powered services to customers. Neither of these can be excluded without strong justification.

A.8 (Information for Interested Parties) deserves attention because it drives your transparency obligations. If your AI system makes decisions that affect customers, employees, or the public, A.8 requires that you provide appropriate information about how the system works, what data it uses, and how decisions can be challenged. This control is the ISO 42001 anchor for responsible AI communication and aligns directly with consumer disclosure requirements in regulations like the Colorado AI Act and EU AI Act.

Building your SoA? The AI Controls Starter includes a pre-built Statement of Applicability template with all Annex A controls, justification fields, and implementation status tracking.

ISO 42001 vs ISO 27001 — Shared Foundation vs New Requirements

If you've implemented ISO 27001, you've already built the infrastructure. The question is how much transfers and what's genuinely new. This table gives you the answer dimension by dimension.

| Dimension | ISO 27001 | ISO 42001 | Reuse? |
|---|---|---|---|
| Scope | Information assets, systems, processes | AI systems specifically, including third-party AI | Partial |
| Policy | Information security policy | AI Policy (separate document, different content) | New |
| Risk assessment | Information security risk methodology | AI-specific risk criteria (bias, fairness, safety, transparency) | Partial |
| Impact assessment | Not required | Mandatory AI system impact assessment (6.1.4) | New |
| Annex A controls | 93 information security controls | ~38 AI-specific controls across 9 domains (A.2–A.10) | New |
| Lifecycle management | Not addressed (asset lifecycle only) | Full AI system lifecycle (A.6): design, development, deployment, monitoring, retirement | New |
| Human oversight | Not addressed | A.9.2: human oversight requirements for AI decisions | New |
| Supplier management | A.5.19–A.5.23: supplier relationships (A.15 in the 2013 edition) | A.10: third-party AI providers with AI-specific due diligence | Partial |
| Internal audit | ISO 19011 audit programme | Same process, add AI-specific audit criteria | Yes |
| Management review | Annual review with defined inputs | Same process, add AI-specific inputs (bias, impact, lifecycle) | Yes |

Bottom line: the management system infrastructure (Clauses 4–10 framework) transfers almost entirely. The AI-specific content (policy, risk criteria, impact assessment, Annex A controls, lifecycle) must be built from scratch. That's where the implementation effort concentrates.

Common Implementation Mistakes and Audit Nonconformities

These mistakes show up repeatedly in first-time ISO 42001 implementations. Each one is a real audit finding that certification bodies have reported.

- Scoping too broadly. Attempting to certify every AI tool in the company instead of focusing on material AI systems. Start narrow, expand later. An overly broad scope creates evidence obligations you can't sustain.

- Treating the SoA as a control checklist. The Statement of Applicability isn't a tick-box exercise. Every applicable control needs implementation evidence. Every excluded control needs a documented risk rationale. "Not relevant" isn't a justification—explain why.

- Missing AI system impact assessments. Clause 6.1.4 is unique to ISO 42001 and auditors flag it as a major nonconformity when it's absent. Every AI system in scope needs a documented impact assessment covering effects on individuals, groups, and society.

- No AI-specific competence evidence. Clause 7.2 requires proof that staff have AI-relevant competence. Generic security awareness training doesn't count. Training records need to show specific AI governance, ethics, and risk management content.

- Ignoring third-party AI suppliers. If you're consuming AI APIs (OpenAI, Anthropic, Google) or embedding vendor models, A.10 applies. You need contracts, due diligence records, and ongoing supplier monitoring. This is where many SaaS-dependent SMEs get caught.

- Copying ISO 27001 text without adaptation. Auditors can tell when you've pasted your ISMS documentation and replaced "information security" with "AI." The AI-specific content needs to be genuine—different risks, different controls, different impact language.

- Skipping top management commitment. Clause 5.1 requires demonstrable leadership engagement. If the CEO can't articulate the AI Policy during the audit, that's a finding. Management review minutes must show leadership actually discussing AI governance topics.

Auditor pattern: Certification bodies report that the three most common major nonconformities are: (1) missing or incomplete AI system impact assessment (6.1.4), (2) SoA exclusions without risk justification (6.1.3), and (3) no AI-specific competence records (7.2). Address these three first.

Implementation Timeline — Month by Month

This timeline is calibrated for a 50-person tech company implementing ISO 42001 from scratch. Organizations with existing ISO 27001 can compress this to 3–6 months by reusing infrastructure documentation.

Months 1–2: Foundation. Conduct a gap analysis against all clauses and Annex A controls. Define the AIMS scope (4.3). Identify interested parties and their requirements (4.2). Build the context of the organization (4.1). Create the AI inventory—list every AI system in scope with its purpose, data inputs, outputs, and responsible owner. Draft the AI Policy (5.2). Assign AIMS roles and responsibilities (5.3).

Months 3–4: Risk and Planning. Establish the AI risk assessment methodology (6.1.1). Conduct risk assessments for all in-scope AI systems (6.1.2). Run AI system impact assessments (6.1.4). Draft the Statement of Applicability (6.1.3). Set measurable AI objectives (6.2). Design the competency framework and begin AI-specific training (7.2). This phase produces the most documents and takes the most intellectual effort.

Months 5–6: Operational Implementation. Implement Annex A controls across all applicable domains. Build operational procedures for AI deployment, change management, and lifecycle events (8.1). Start collecting evidence—timestamped records of risk assessments before deployment, approval workflows, monitoring dashboards. Establish the monitoring and measurement programme (9.1).

Months 7–8: Assurance. Execute the internal audit programme (9.2) covering every clause and applicable Annex A control. Document findings, classify nonconformities, and implement corrective actions (10.2). Conduct management review (9.3) with all required inputs. This phase is about proving the system works—not just that the documents exist.

Month 9: Certification. Stage 1 audit: documentation review. The auditor checks that all required documented information exists and the management system is designed correctly. Stage 2 audit: implementation verification. The auditor interviews staff, reviews evidence, and confirms the system is operating as documented. If there are no major nonconformities, you receive certification. Minor findings get a corrective action timeline.

A practical tip: schedule at least two weeks between Stage 1 and Stage 2. Stage 1 findings are common—missing document references, inconsistent terminology, gaps between the SoA and your actual evidence. You'll want time to clean these up before the implementation audit. Most certification bodies allow a 4–12 week window between stages.

After certification, the cycle continues. Surveillance audits happen annually (covering a sample of clauses and controls), and recertification occurs every three years with a full audit. The management system isn't a one-time project—it's a permanent operational function. Budget for ongoing monitoring, evidence collection, and continuous improvement from day one.

Costs, Audit Path, and Regulatory Benefits

Nobody publishes a definitive price list, but here's what the market data suggests for a 50–200 person company as of early 2026. These are estimates—get quotes directly from certification bodies.

- Certification audit (Stage 1 + Stage 2): $8,000–$25,000, depending on scope complexity, number of AI systems, and which certification body you use (BSI, LRQA, SGS, Schellman, and others).

- Annual surveillance audits: $3,000–$12,000 per year, with one surveillance visit in each of the two years between certification and recertification.

- Internal resource cost: 200–400 person-hours over 9 months. Primarily the AIMS lead, risk owner, and AI system owners.

- External consultant (optional): $15,000–$50,000 for implementation support. Many SMEs handle this internally using structured toolkits.
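
To turn these ranges into a budget figure, the arithmetic for one three-year certification cycle can be sketched as below. The ranges come from the list above; the $75 internal hourly rate is purely an assumption for illustration, and ongoing internal effort after certification is not included.

```python
def add(*ranges):
    """Sum (low, high) ranges component-wise."""
    return (sum(r[0] for r in ranges), sum(r[1] for r in ranges))


def scale(value_range, factor):
    """Multiply both ends of a (low, high) range by a factor."""
    return (value_range[0] * factor, value_range[1] * factor)


AUDIT = (8_000, 25_000)                  # Stage 1 + Stage 2
SURVEILLANCE_PER_YEAR = (3_000, 12_000)  # two surveillance years per cycle
CONSULTANT = (15_000, 50_000)            # optional
INTERNAL_HOURS = (200, 400)              # implementation effort over 9 months
HOURLY_RATE = 75                         # assumed loaded internal rate in USD

internal_cost = scale(INTERNAL_HOURS, HOURLY_RATE)
cycle_in_house = add(AUDIT, scale(SURVEILLANCE_PER_YEAR, 2), internal_cost)
cycle_with_consultant = add(cycle_in_house, CONSULTANT)

print("In-house, 3-year cycle:", cycle_in_house)
print("With consultant:", cycle_with_consultant)
```

Swap in your own hourly rate and quoted audit fees; the structure of the sum is the useful part, not the sample numbers.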

The regulatory benefits of certification are increasingly concrete. The Colorado AI Act (SB 24-205, effective June 30, 2026) explicitly names ISO 42001 compliance as an affirmative defense for developers and deployers of high-risk AI systems. If you can demonstrate a certified AIMS covering your high-risk systems, you've got a statutory affirmative-defense pathway against enforcement actions. The EU AI Act's harmonized standards programme is also developing alignment between ISO 42001 and the EU's conformity assessment requirements, though the mapping isn't finalized as of March 2026.

Beyond regulatory defense, certification signals operational maturity to enterprise customers. If you're an SME selling AI-powered products to banks, insurers, or government agencies, a certified AIMS gives procurement teams a concrete compliance artifact to check off. It doesn't eliminate their due diligence requirements, but it compresses the evaluation timeline significantly. Some market participants report shorter enterprise sales cycles after ISO 42001 certification, but this should be treated as anecdotal unless independently verified.

One strategic consideration: if you're deploying AI agents (autonomous systems that plan, act, and invoke tools), ISO 42001 Annex A provides partial coverage but doesn't address agent-specific risks like bounded autonomy, inter-agent trust, or cascading failures. You'll need supplementary controls from frameworks like the IMDA Agentic AI Governance Framework or OWASP Top 10 for Agentic Applications for 2026. See our agentic AI governance guide for the full framework comparison.

Ready to start? The AI Controls Starter ($399) includes the unified controls matrix, gap analysis checklist, and risk register template. The AI Controls Professional ($1,299) adds full ISO 42001 policy templates, SoA template, impact assessment templates, and a 6-month implementation project plan.
