Why Singapore Built a Purpose-Built Agentic Framework

Singapore's Infocomm Media Development Authority (IMDA) released the Model AI Governance Framework for Agentic AI (MGF) on January 22, 2026 at the World Economic Forum in Davos. It isn't the first AI governance framework Singapore has produced - earlier editions covered traditional AI (2019, updated in 2020) and generative AI (2024). But this one addresses a fundamentally different problem.

Existing frameworks like ISO 42001 and NIST AI RMF were designed for systems that receive a prompt and return a response. Agentic AI systems don't work that way. They plan multi-step actions, invoke external tools, delegate to sub-agents, and take real-world actions with consequences that can't always be undone. The governance assumptions underpinning traditional frameworks - a human reviews every output, the system operates within a single session, actions are reversible - break down when agents operate autonomously.

IMDA's framework is voluntary and non-binding. It carries no statutory penalties in Singapore or anywhere else. It can still be useful as a reference model for board-level risk documentation and internal agentic AI governance design where the four-dimension model fits the use case.

Singapore's approach builds on roughly seven years of practical AI governance work. The 2019 Model Framework was one of the first national-level AI governance documents globally. The 2020 update added sector-specific implementation guidance. The 2024 generative AI supplement addressed foundation models and prompt-based systems. The 2026 MGF for Agentic AI represents the logical next step: governance for systems that act, not just respond.

See how all four frameworks compare in the complete agentic governance guide.

- Agentic AI: AI systems that autonomously plan, execute multi-step tasks, invoke tools, and take actions with real-world consequences.

- MGF: Model AI Governance Framework - IMDA's voluntary governance guidance for AI systems in Singapore.

- Meaningful Human Accountability: IMDA's term for requiring an identifiable human responsible for each agent's outcomes - broader than real-time oversight.

- Operational Bounds: Explicitly defined limits on what an agent is authorized to do, covering tool access, data scope, financial thresholds, and decision types.

Dimension 1: Assess and Bound Risks Upfront

Before any agent goes into production, the organization must scope what it's allowed to do and identify what could go wrong. This isn't a generic risk assessment - it's agent-specific, covering the agent's autonomy level, tool access, data reach, and potential for cascading actions.

Many organizations deploying agents in 2026 skip this step entirely. They treat agent deployment like deploying a chatbot - configure it, test it briefly, push it to production. IMDA's framework makes the case that agents require pre-deployment risk scoping at a level of specificity that traditional AI governance doesn't demand. An agent that can invoke APIs, access databases, and delegate to sub-agents introduces failure modes that a static prediction model simply doesn't have.

Key Requirements

- Agent inventory: Catalogue every AI agent in the organization - what it does, what tools it accesses, what data it can read or write, and what autonomy level it operates at. Include agents embedded in third-party SaaS products; those are still your governance responsibility.

- Risk identification: Map failure modes per agent: goal misalignment, tool misuse, data leakage, cascading sub-agent failures, and unauthorized real-world actions. Consider both individual agent failures and inter-agent cascade scenarios.

- Operational bounds: Define explicit limits on what each agent is authorized to do. Bounds should cover tool access (whitelist only), financial thresholds, data scope, temporal limits, and decision types requiring human approval.

- Proportionality: Controls must scale with risk. A scheduling assistant doesn't need the same containment as an agent that executes financial transactions. Document your proportionality rationale - auditors will ask for it.

Evidence required: Agent inventory register, per-agent risk assessment, operational bounds specification, proportionality justification.
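To make the inventory register concrete, here is a minimal sketch of what one record and a completeness check might look like. The field names, the `AgentRecord` class, and the `register_gaps` helper are illustrative assumptions, not a schema that IMDA prescribes; adapt them to whatever register format your organization already uses.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Autonomy(Enum):
    MANUAL = "manual"
    SEMI_AUTONOMOUS = "semi-autonomous"
    FULLY_AUTONOMOUS = "fully autonomous"


@dataclass
class AgentRecord:
    """One row of a hypothetical agent inventory register."""
    name: str
    purpose: str
    autonomy: Autonomy
    tools: List[str] = field(default_factory=list)
    data_read: List[str] = field(default_factory=list)
    data_write: List[str] = field(default_factory=list)
    third_party: bool = False                  # embedded in a SaaS product
    risk_assessment: Optional[str] = None      # reference to the per-agent assessment
    bounds_spec: Optional[str] = None          # reference to the bounds specification
    proportionality_note: Optional[str] = None


def register_gaps(record: AgentRecord) -> List[str]:
    """Return the evidence items still missing for one agent."""
    gaps = []
    if record.risk_assessment is None:
        gaps.append("per-agent risk assessment")
    if record.bounds_spec is None:
        gaps.append("operational bounds specification")
    if record.proportionality_note is None:
        gaps.append("proportionality justification")
    return gaps
```

Running `register_gaps` over every record gives a quick view of which agents are in the inventory but not yet backed by the other three evidence items.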

Dimension 2: Make Humans Meaningfully Accountable

"Meaningful human accountability" is the phrase IMDA uses deliberately. It's not the same as human-in-the-loop oversight (which implies real-time approval of every action). It's broader: someone specific must be accountable for every agent's behavior, decisions, and outcomes - and that accountability can't be delegated to the agent itself.

This distinction matters. Traditional HITL governance assumes a human reviews each decision before the system acts. That model doesn't scale to agents that make dozens of decisions per minute across multiple tool invocations. IMDA's approach is more realistic: you don't need a human approving every action, but you absolutely need a human who will answer for the consequences. The accountability structure exists whether or not the human was actively monitoring at the moment something went wrong.

In my experience reviewing ISO 42001 implementations, organizations frequently confuse "someone is watching" with "someone is accountable." Watching dashboards is monitoring. Accountability means you've documented who made the deployment decision, who set the operational bounds, who approved the tool access list, and who gets called at 2 AM when the agent does something unexpected.

Key Requirements

- Named accountability: Every agent must have an identifiable human (or role) responsible for its behavior. "The system is accountable" is not an acceptable answer under this framework.

- Escalation thresholds: Define specific conditions under which an agent must pause and escalate to a human. Thresholds should be measurable and auditable, not subjective.

- Override capability: Humans must be able to override agent decisions and halt agent execution at any point. This requires both technical implementation and documented procedures.

- Redress procedures: When an agent's action causes harm to a third party, there must be a clear path for the affected party to seek redress from a human - not from the agent.

Evidence required: RACI matrix per agent, escalation threshold specification, override procedure documentation, redress contact and process.
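The escalation and override requirements lend themselves to a small sketch. The one below assumes escalation thresholds are expressed as numeric limits on named metrics and that override is a simple halt flag checked before every action. `EscalationRule`, `OversightGate`, and the metric names are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationRule:
    """A measurable trigger: escalate when the metric exceeds the limit."""
    metric: str    # hypothetical metric name, e.g. "records_modified"
    limit: float
    owner: str     # named accountable human or role


class AgentHalted(Exception):
    """Raised when a human has halted the agent."""


class OversightGate:
    def __init__(self, rules):
        self.rules = list(rules)
        self.halted = False
        self.audit_log = []   # in-memory stand-in for an auditable store

    def halt(self, by):
        """Human override: block all further agent actions."""
        self.halted = True
        self.audit_log.append(("halt", by))

    def check(self, metrics):
        """Return the rules a proposed action trips; refuse if halted."""
        if self.halted:
            raise AgentHalted("agent execution halted by human override")
        tripped = [r for r in self.rules
                   if metrics.get(r.metric, 0) > r.limit]
        for rule in tripped:
            self.audit_log.append(("escalate", rule.metric, rule.owner))
        return tripped
```

Because each rule carries an owner, an escalation record always names who was expected to respond, which keeps the threshold specification and the RACI matrix consistent with each other.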

Dimension 3: Implement Technical Controls and Processes

Governance documentation without technical enforcement is paperwork, not protection. Dimension 3 covers the full agent lifecycle - from design through post-deployment monitoring - with specific controls at each stage. This is where IMDA's framework most closely aligns with ISO 42001 Annex A.6, but it adds agentic-specific requirements that the standard doesn't address.

The critical insight in Dimension 3: agentic systems require controls at four distinct lifecycle stages, and organizations typically only implement post-deployment monitoring - skipping the design, development, and pre-deployment gates that prevent problems rather than detect them after the fact.

Lifecycle Controls

- Design stage: Agent goal specification, tool whitelist with least-privilege access, boundary enforcement rules, harm pathway identification.

- Development stage: Code review with agentic-specific checklist, bias and fairness testing on agent decisions, robustness testing against adversarial inputs.

- Pre-deployment: Red-teaming against goal hijack, tool abuse, and cascading failure scenarios. Stakeholder feedback. Go/no-go decision gate.

- Post-deployment: Continuous monitoring of agent actions, anomaly detection, incident response procedures, and quarterly re-assessment of risk bounds.

Evidence required: Design documentation, code review records, red-team reports, monitoring dashboards, incident logs, re-assessment records.
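As a rough illustration of how a design-stage tool whitelist and post-deployment action logging can share one enforcement point, the sketch below routes every tool call through a gateway. `ToolGateway` and the tool names are assumptions made for this example, and the in-memory list stands in for whatever durable log store you actually use.

```python
import json
import time


class ToolNotAllowed(PermissionError):
    """Raised when an agent requests a tool outside its allowlist."""


class ToolGateway:
    """Routes every tool call through an allowlist and an append-only log."""

    def __init__(self, agent_id, allowed_tools):
        self.agent_id = agent_id
        self.allowed = dict(allowed_tools)   # tool name -> callable
        self.log = []                        # stand-in for durable storage

    def _record(self, tool, kwargs, outcome):
        entry = {"ts": time.time(), "agent": self.agent_id,
                 "tool": tool, "args": kwargs, "outcome": outcome}
        self.log.append(json.dumps(entry, default=str))

    def call(self, tool, **kwargs):
        # Deny by default: anything not explicitly listed is refused and logged.
        if tool not in self.allowed:
            self._record(tool, kwargs, "denied")
            raise ToolNotAllowed(f"{tool!r} is not on the allowlist")
        result = self.allowed[tool](**kwargs)
        self._record(tool, kwargs, "ok")
        return result
```

Denied calls are logged as well as successful ones, so the same log can feed anomaly detection and incident review.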

Dimension 4: Enable End-User Responsibility

End users - whether customers, employees, or business partners interacting with agents - must know they're dealing with an autonomous system, understand what it can and can't do, and have a clear way to escalate to a human when needed.

Dimension 4 is where the IMDA framework intersects with the EU AI Act's transparency requirements under Article 50. While the EU mandates disclosure for certain AI systems, IMDA takes a broader approach: all agent interactions should include disclosure, regardless of risk classification. The reasoning is straightforward - users can't make informed decisions about reliance and trust if they don't know an agent is making or executing decisions on their behalf.

Organizations often treat end-user disclosure as a legal checkbox. IMDA frames it differently: disclosure isn't just about compliance, it's about enabling users to exercise appropriate judgment about when to trust agent outputs and when to override or escalate.

Key Requirements

- Transparent disclosure: Users must be informed when they're interacting with an AI agent, what the agent's capabilities and limitations are, and what decisions the agent is authorized to make.

- User training: Employees who work alongside agents need training on agent behavior, appropriate reliance, and when to escalate or override.

- Escalation access: Clear, accessible path to reach a human - not buried in a support queue. Users need to know who to contact and how quickly they'll get a response.

- Feedback mechanisms: A channel for users to report unexpected agent behavior, with documented procedures for how reports are triaged and resolved.

Evidence required: User disclosure materials, training completion records, escalation contact list, feedback form and triage logs.

Implementation Checklist

The table below maps each dimension to its key requirements, the governance artifacts you'll need to produce, and who in the organization is typically responsible.

| Dimension | Key Requirement | Governance Artifact | Responsible |
|---|---|---|---|
| 1. Assess & Bound Risks | Catalogue all agents | Agent inventory register | AI Governance Lead |
| | Map failure modes per agent | Agent risk assessment | Risk Manager |
| | Define operational bounds | Bounds specification per agent | Product Owner + Risk |
| | Justify proportionality | Proportionality assessment | AI Governance Lead |
| 2. Human Accountability | Assign named accountability | RACI matrix per agent | CISO / DPO |
| | Set escalation thresholds | Escalation threshold spec | Product Owner |
| | Enable human override | Override procedure + technical kill switch | Engineering Lead |
| | Establish redress path | Redress procedure for affected parties | Legal / Compliance |
| 3. Technical Controls | Design-stage controls | Agent design doc + tool whitelist | Engineering Lead |
| | Pre-deployment testing | Red-team report + go/no-go record | Security Team |
| | Post-deployment monitoring | Monitoring dashboard + alert rules | DevOps / SRE |
| | Periodic re-assessment | Quarterly risk review records | AI Governance Lead |
| 4. End-User Responsibility | Disclose agent status | User disclosure materials | Product Owner |
| | Train internal users | Training program + completion log | HR / L&D |
| | Provide feedback channel | Feedback form + triage procedure | Customer Support |

Mapping IMDA to ISO 42001 and NIST AI RMF

The IMDA framework doesn't exist in isolation. Organizations already implementing ISO 42001 or following NIST AI RMF can map IMDA's four dimensions onto their existing control structures. The table below shows where each dimension aligns - and where IMDA extends beyond what the standards cover.

| IMDA Dimension | Key Requirements | ISO 42001 | NIST AI RMF | Implementation Actions |
|---|---|---|---|---|
| 1. Assess & Bound Risks | Agent inventory, risk identification, operational bounds, proportionality | Clause 6.1, A.4.3, A.5 | MAP 1.1, MAP 2.2, MAP 3.1 | Create agent register; document bounds per agent; justify risk-control proportionality |
| 2. Human Accountability | Named accountability, escalation thresholds, override capability, redress | Clause 5.3, A.9.2 | GOVERN 1.3, GOVERN 2.1 | Build RACI matrix; define measurable escalation triggers; test override mechanisms |
| 3. Technical Controls | Design-stage controls, pre-deployment testing, monitoring, re-assessment | A.6 (full lifecycle) | MEASURE 2.2, MEASURE 3.3, MANAGE 2.1 | Implement tool whitelists; run agentic red-teams; deploy anomaly monitoring |
| 4. End-User Responsibility | Disclosure, training, escalation access, feedback | A.8 (communication), A.9.1 | GOVERN 4.2, MAP 5.1 | Draft disclosure notices; build training module; create feedback triage process |

See the full ISO 42001 implementation guide for base-layer controls.

IMDA vs UC Berkeley vs NIST AI RMF

Three frameworks. Different origins, different scopes, overlapping territory. The table below compares them across seven dimensions to help you decide which to implement - or, more realistically, how to layer them together.

| Dimension | IMDA MGF | UC Berkeley CLTC | NIST AI RMF |
|---|---|---|---|
| Primary Focus | Operational governance | Technical risk management | Risk management framework |
| Agentic-Specific | Yes (purpose-built) | Yes (extends NIST) | No (general AI) |
| Certification | No certification path | No certification path | No (voluntary profile) |
| Key Concept | Meaningful human accountability | Bounded autonomy + defense-in-depth | Govern/Map/Measure/Manage |
| Multi-Agent | Limited coverage | Addresses delegation chains | Not addressed |
| Human Oversight | Accountability-based (not just real-time) | Autonomy spectrum (L0–L5) | Human-in-the-loop |
| Implementation Detail | Moderate (artifact-level) | Low (academic, conceptual) | High (subcategory-level) |

The practical recommendation for SMEs: implement NIST AI RMF as the base layer, use IMDA's four dimensions for agentic governance structure, and apply UC Berkeley's bounded autonomy and defense-in-depth as technical design principles. No single framework is sufficient on its own; layering all three gives you coverage across governance, risk management, and technical controls. Organizations already operating an ISO 42001-aligned AIMS may have a useful starting point, but they still need a separate mapping exercise before relying on that alignment for agentic AI governance.

Practical Implementation Steps for SMEs

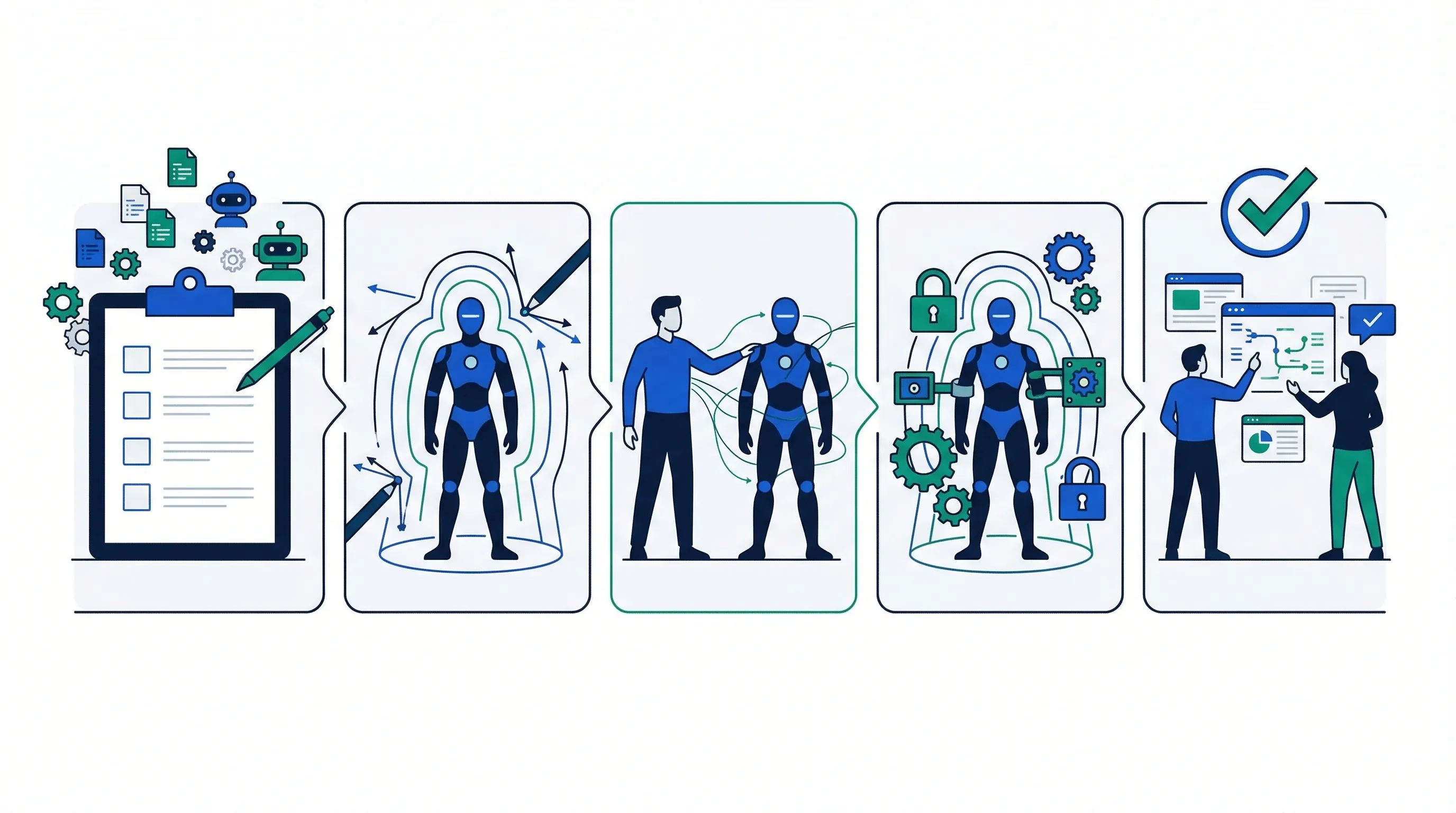

IMDA's framework reads well as policy. Turning it into operational practice requires a concrete five-step process. Most SMEs deploying between 3 and 10 agents can work through this in 4 to 6 weeks if they assign a dedicated governance owner.

Step-by-Step

Step 1: Inventory all agents and classify autonomy levels. You can't govern what you can't see. Catalogue every AI agent in the organization - including agents embedded in third-party SaaS tools that your teams may have activated without IT approval. Classify each by autonomy level (manual, semi-autonomous, fully autonomous) and by risk tier (low, medium, high). The inventory becomes the foundation for every subsequent governance decision.

Step 2: Define operational bounds per agent. For each agent, document what it's authorized to do, what tools and data it can access, what financial limits apply, and what decision types require human approval. Bounds should be specific enough to test and audit - "the agent handles customer queries" isn't a bound; "the agent can access the FAQ database (read-only), respond to Tier 1 support tickets, and escalate any refund request over $100 to a human" is.

Step 3: Assign accountable humans for every agent. Build a RACI matrix that names specific individuals (not departments) responsible for each agent's behavior. "The system is accountable" is not acceptable under this framework - someone with a name and a job title must own it. For each agent, identify who approved deployment, who monitors ongoing behavior, who gets called for escalations, and who answers to regulators.

Step 4: Implement technical controls. Translate governance policies into enforceable technical controls - tool whitelists with least-privilege access, API rate limits, comprehensive logging of all agent actions (including intermediate reasoning steps), anomaly detection thresholds, and circuit breakers that halt execution when bounds are breached. Policy without enforcement is hope, not governance.

Step 5: Document everything and train staff. Governance that exists only in someone's head isn't governance. Document agent specifications, risk assessments, accountability assignments, monitoring configurations, and escalation procedures. Train staff who interact with agents on appropriate reliance, override procedures, and escalation contacts. Keep documentation in a central repository that auditors can access without chasing people.
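Steps 2 and 4 can be sketched together: the bound from Step 2 (read-only FAQ access, refunds over $100 escalated to a human) becomes a small data structure, and a circuit breaker halts the agent after repeated out-of-bounds attempts. The class names, the `faq_db` identifier, and the breach limit are hypothetical; treat this as one possible shape under those assumptions, not a reference implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bounds:
    readable_sources: frozenset   # data the agent may read
    refund_limit_usd: float       # refunds above this go to a human


class CircuitOpen(Exception):
    """Raised once the breaker has tripped; a human must reset it."""


class SupportAgentGuard:
    def __init__(self, bounds, max_breaches=3):
        self.bounds = bounds
        self.max_breaches = max_breaches
        self.breaches = 0

    def _ensure_running(self):
        if self.breaches >= self.max_breaches:
            raise CircuitOpen("breaker tripped; human reset required")

    def _breach(self, reason):
        self.breaches += 1
        return f"denied: {reason}"

    def read(self, source):
        self._ensure_running()
        if source not in self.bounds.readable_sources:
            return self._breach(f"{source} is outside data scope")
        return "allowed"

    def refund(self, amount_usd):
        self._ensure_running()
        if amount_usd > self.bounds.refund_limit_usd:
            # Escalation is in-bounds behavior, so it is not counted as a breach.
            return "escalate to human"
        return "allowed"
```

For example, `SupportAgentGuard(Bounds(frozenset({"faq_db"}), 100.0))` allows a $40 refund, escalates a $250 refund, and denies a read from any source other than `faq_db`. Keeping escalation separate from breach counting reflects the difference between an agent doing what it was told (handing off) and an agent attempting something it was never authorized to do.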

Common Mistakes to Avoid

Organizations implementing IMDA's framework consistently make the same errors. Seven of the most common:

- Confusing accountability with monitoring: Having dashboards is observation. Accountability means a named individual owns the consequences, whether or not they were watching the screen when the incident occurred.

- Interpreting "bounded autonomy" as purely technical: Bounds require both policy documentation (what the agent is allowed to do) and technical enforcement (controls that actually prevent out-of-bounds behavior). One without the other is insufficient.

- Treating Dimension 2 as an HR exercise: Meaningful accountability is a governance and legal structure, not a staffing decision. It's about RACI matrices and escalation contracts, not headcount.

- Skipping Dimension 4 end-user disclosure: Users need to know they're interacting with an agent. This applies to internal employees using agent-assisted tools, not just external customers.

- Assuming IMDA applies only to Singapore: The framework is voluntary everywhere, including in Singapore. Teams elsewhere can still use it as a reference model where it fits their risk profile and governance maturity.

- Not updating bounds as agents evolve: An agent that gained new tool access or expanded data scope last sprint still has governance bounds from last quarter. Governance must keep pace with agent capability changes.

- Failing to document autonomy bounds in an auditable format: If an external auditor or regulator asks how your agents are governed, you need to produce documentation within hours, not weeks.
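The "bounds from last quarter" problem is easy to check mechanically if the inventory records two dates per agent. The sketch below flags agents whose capabilities changed after their bounds were last reviewed, or whose review is older than an assumed 90-day interval (a stand-in for the quarterly cadence). The dictionary keys and the `stale_bounds` helper are hypothetical.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)   # assumption: roughly quarterly


def stale_bounds(agents, today):
    """Return names of agents whose bounds need re-review."""
    stale = []
    for agent in agents:
        changed_since_review = (
            agent["last_capability_change"] > agent["bounds_reviewed"]
        )
        overdue = today - agent["bounds_reviewed"] > REVIEW_INTERVAL
        if changed_since_review or overdue:
            stale.append(agent["name"])
    return stale
```

Run on a schedule against the agent register, a check like this turns "governance must keep pace" into a list of specific agents to revisit.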
